Note

This page is still under development.

Open Platform for NFV (OPNFV) facilitates the development and evolution of NFV components across various open source ecosystems. Through system level integration, deployment and testing, OPNFV creates a reference NFV platform to accelerate the transformation of enterprise and service provider networks. Participation is open to anyone, whether you are an employee of a member company or just passionate about network transformation.

Network Functions Virtualization (NFV) is transforming the networking industry via software-defined infrastructures, and open source is the proven method for quickly developing software for commercial products and services that can move markets. Open Platform for NFV (OPNFV) facilitates the development and evolution of NFV components across various open source ecosystems. Through system level integration, deployment and testing, OPNFV constructs a reference NFV platform to accelerate the transformation of enterprise and service provider networks. As an open source project, OPNFV is uniquely positioned to bring together the work of standards bodies, open source communities, and commercial suppliers to deliver a de facto NFV platform for the industry.

By integrating components from upstream projects, the community is able to conduct performance and use case-based testing on a variety of solutions to ensure the platform’s suitability for NFV use cases. OPNFV also works upstream with other open source communities to bring both contributions and learnings from its work directly to those communities in the form of blueprints, patches, and new code.

OPNFV initially focused on building NFV Infrastructure (NFVI) and Virtualised Infrastructure Management (VIM) by integrating components from upstream projects such as OpenDaylight, OpenStack, Ceph Storage, KVM, Open vSwitch, and Linux. More recently, OPNFV has extended its portfolio of forwarding solutions to include fd.io and ODP, is able to run on both Intel and ARM commercial and white-box hardware, and, in the Colorado release, includes Management and Network Orchestration (MANO) components primarily for application composition and management.

These capabilities, along with application programming interfaces (APIs) to other NFV elements, form the basic infrastructure required for Virtualized Network Functions (VNF) and MANO components.

Concentrating on these components while also considering proposed projects on additional topics (such as the MANO components and applications themselves), OPNFV aims to enhance NFV services by increasing performance and power efficiency, improving reliability, availability and serviceability, and delivering comprehensive platform instrumentation.

The OPNFV project addresses a number of aspects in the development of a consistent virtualisation platform including common hardware requirements, software architecture, MANO and applications.

OPNFV Platform Overview Diagram

To address these areas effectively, the OPNFV platform architecture can be decomposed into the following basic building blocks:

The infrastructure working group oversees such topics as lab management, workflow, definitions, metrics and tools for OPNFV infrastructure.

Fundamental to the WG is the Pharos Project which provides a set of defined lab infrastructures over a geographically and technically diverse federated global OPNFV lab.

Labs may instantiate bare-metal and virtual environments that are accessed remotely by the community and used for OPNFV platform and feature development, build, deployment and testing. No two labs are the same, and the heterogeneity of the Pharos environment provides the ideal platform for establishing hardware and software abstractions with well understood performance characteristics.

Community labs are hosted by OPNFV member companies on a voluntary basis. The Linux Foundation also hosts an OPNFV lab that provides centralized CI and other production resources which are linked to community labs. Future lab capabilities will include the ability to easily automate deployment and testing of any OPNFV install scenario in any lab environment as well as on a nested “lab as a service” virtual infrastructure.

The OPNFV software platform is composed exclusively of open source implementations of platform components. OPNFV draws from the rich ecosystem of NFV-related open source technologies, then integrates, tests, measures and improves these components in conjunction with our source communities.

While the OPNFV software platform is highly complex and made up of many projects and components, a subset of these projects gains the most attention from the OPNFV community to drive the development of new technologies and capabilities.

OPNFV derives its virtual infrastructure management from one of our largest upstream ecosystems, OpenStack. OpenStack provides a complete reference cloud management system and associated technologies. While the OpenStack community sustains a broad set of projects, not all technologies are relevant in an NFV domain. The OPNFV community consumes a subset of OpenStack projects, where the usage and composition may vary depending on the installer and scenario.

For details on the scenarios available in OPNFV and the specific composition of components refer to the OPNFV installation instruction: http://artifacts.opnfv.org/opnfvdocs/colorado/2.0/docs/installationprocedure/index.html

OPNFV currently uses Linux on all target machines; this can include Ubuntu, CentOS or SUSE Linux. The specific version of Linux used for any deployment is documented in the installation guide.

OPNFV, as an NFV-focused project, has a significant investment in networking technologies and provides a broad variety of integrated open source reference solutions. The diversity of controllers that can be used in OPNFV is supported by a similarly diverse set of forwarding technologies.

Many SDN controllers relevant to virtual environments are available today, and the OPNFV community supports and contributes to a number of them. The controllers being worked on by the community during this release of OPNFV include:

OPNFV extends Linux virtual networking capabilities by using virtual switching and routing components. The OPNFV community proactively engages with these source communities to address performance, scale and resiliency needs apparent in carrier networks.

A typical OPNFV deployment starts with three controller nodes running in a high availability configuration, including control plane components from OpenStack, SDN, etc., and a minimum of two compute nodes for deployment of workloads (VNFs). A detailed description of the hardware requirements for the five-node configuration can be found in the Pharos specification: http://artifacts.opnfv.org/pharos/colorado/2.0/docs/specification/index.html

In addition to the deployment on a highly available physical infrastructure, OPNFV can be deployed for development and lab purposes in a virtual environment. In this case each of the hosts is provided by a virtual machine and allows control and workload placement using nested virtualization.

The initial deployment is done using a staging server, referred to as the “jumphost”. This server, either physical or virtual, is first installed with the installation program, which then installs OpenStack and other components on the controller nodes and compute nodes. See the OPNFV User Guide for more details.

The OPNFV community has set out to address the needs of virtualization in the carrier network and as such platform validation and measurements are a cornerstone to the iterative releases and objectives.

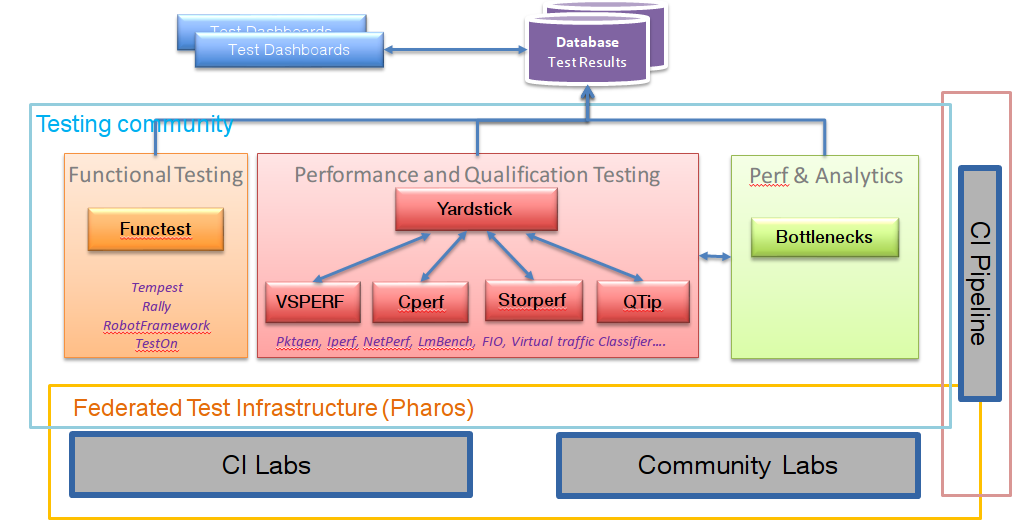

To simplify the complex task of feature, component and platform validation and characterization the testing community has established a fully automated method for addressing all key areas of platform validation. This required the integration of a variety of testing frameworks in our CI systems, real time and automated analysis of results, storage and publication of key facts for each run as shown in the following diagram.

The OPNFV community relies on its testing community to establish release criteria for each OPNFV release. With each release cycle, the testing criteria become more stringent and more representative of our feature and resiliency requirements.

As each OPNFV release establishes a set of deployment scenarios to validate, the testing infrastructure and test suites need to accommodate these features and capabilities. Complexity increases not only in the validation of the scenarios themselves: some test cases require multiple datacenters to execute when evaluating features, including multisite and distributed datacenter solutions.

The release criteria as established by the testing teams include passing a set of test cases derived from the functional testing project ‘functest,’ a set of test cases derived from our platform system and performance test project ‘yardstick,’ and a selection of test cases for feature capabilities derived from other test projects such as bottlenecks, vsperf, cperf and storperf. The scenario needs to be able to be deployed, pass these tests, and be removed from the infrastructure iteratively (no fewer than 4 times) in order to fulfill the release criteria.
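The iterative part of this criterion can be sketched as a small shell loop. This is only an illustration under stated assumptions: the three hook functions (`deploy_scenario`, `run_release_tests`, `remove_scenario`) and the scenario name are hypothetical placeholders, not commands provided by OPNFV or its installers, and would need to be replaced with the real installer and test invocations.

```shell
# Hypothetical hooks: replace the bodies with the real installer and
# test-project commands for your environment.
deploy_scenario()   { echo "deploy $1"; }
run_release_tests() { echo "test $1"; }
remove_scenario()   { echo "remove $1"; }

# validate_scenario <scenario> [iterations]
# Runs deploy/test/remove cycles and stops at the first failing step.
# The default of 4 iterations mirrors the minimum stated above.
validate_scenario() {
    local scenario="$1"
    local iterations="${2:-4}"
    local i=1
    while [ "$i" -le "$iterations" ]; do
        deploy_scenario "$scenario"   || return 1
        run_release_tests "$scenario" || return 1
        remove_scenario "$scenario"   || return 1
        i=$((i + 1))
    done
    echo "$scenario passed $iterations iterations"
}
```

Calling `validate_scenario example-scenario` would run four full cycles; a failure in any step aborts the loop with a non-zero status, so the scenario is not counted as meeting the criteria.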

Functest provides a functional testing framework incorporating a number of test suites and test cases that test and verify OPNFV platform functionality. The scope of Functest and relevant test cases can be found in its user guide.

Functest provides both feature project and component test suite integration, leveraging OpenStack and SDN controller testing frameworks to verify that the key components of the OPNFV platform are running successfully.

Yardstick is a testing project for verifying infrastructure compliance when running VNF applications. Yardstick benchmarks a number of characteristics and performance vectors on the infrastructure, making it a valuable pre-deployment NFVI testing tool.

Yardstick provides a flexible testing framework for launching other OPNFV testing projects.

There are two types of test cases in Yardstick:

The OPNFV community is developing a set of test suites intended to evaluate NFV systems developed outside the OPNFV ecosystem, measuring their ability to provide the reference behaviors, features and capabilities developed in the OPNFV ecosystem.

The Dovetail project will provide a test framework and methodology able to be used on any NFV platform, including an agreed set of test cases establishing evaluation criteria for exercising an OPNFV-compatible system. The Dovetail project has begun establishing the test framework and will provide a preliminary methodology for the Colorado release. Work will continue to develop these test cases to establish a standalone compliance evaluation solution in future releases.

Besides the test suites and cases for release verification, additional testing is performed to validate specific features or characteristics of the OPNFV platform. These testing frameworks and test cases may address specific needs, such as extended measurements, additional testing stimuli, or tests simulating environmental disturbances or failures.

These additional testing activities provide a more complete evaluation of the OPNFV platform. Some of the projects focused on these testing areas include:

VSPERF provides a generic and architecture agnostic vSwitch testing framework and associated tests. This serves as a basis for validating the suitability of different vSwitch implementations and deployments.

Bottlenecks provides a framework to find system limitations and bottlenecks, providing root cause isolation capabilities to facilitate system evaluation.

The following document provides an overview of the instructions required for the installation of the Colorado release of OPNFV.

The Colorado release can be installed using a variety of technologies provided by the integration projects participating in OPNFV: Apex, Compass4nfv, Fuel and JOID. Each installer provides the ability to install a common OPNFV platform as well as integrating additional features delivered through a variety of scenarios by the OPNFV community.

The OPNFV platform is composed of a variety of upstream components that may be deployed on your physical infrastructure. A composition of components, tools and configurations is identified in OPNFV as a deployment scenario. The various OPNFV scenarios provide unique features and capabilities that you may want to leverage, so it is important to understand your required target platform capabilities before installing and configuring your target scenario.

An OPNFV installation requires either a physical or a virtual infrastructure environment as defined in the Pharos specification. When configuring a physical infrastructure, it is strongly advised to follow the Pharos configuration guidelines.

OPNFV scenarios are designed to host virtualised network functions (VNFs) in a variety of deployment architectures and locations. Each scenario provides specific capabilities and/or components aimed at solving specific problems for the deployment of VNFs. A scenario may, for instance, include components such as OpenStack, OpenDaylight, OVS, KVM, etc., where each scenario will include different source components or configurations.

To learn more about the scenarios supported in the Colorado release refer to the scenario description documents provided:

Detailed step-by-step instructions for working with an installation toolchain and installing the required scenario are provided by each installation project. The four projects providing installation support for the OPNFV Colorado release are Apex, Compass4nfv, Fuel and JOID.

The instructions for each toolchain can be found in these links:

If you have elected to install the OPNFV platform using the deployment toolchain provided by OPNFV, your system will have been validated once the installation is completed. The basic deployment validation only addresses a small part of the capability provided in the platform, and you may wish to execute more exhaustive tests. Some investigation is required to select the right test suites to run on your platform from the available projects and suites.

Many of the OPNFV test projects provide user-guide documentation and installation instructions as provided below:

The following patches were applied to fix security issues discovered in OPNFV projects during the Colorado release cycle.

OPNFV is a collaborative project aimed at providing a variety of virtualization deployments intended to host applications serving the networking and carrier industry. This document provides guidance and instructions for using platform features designed to support these applications, made available in the OPNFV Colorado release.

This document is not intended to replace or replicate documentation from other open source projects such as OpenStack or OpenDaylight, but rather to highlight the features and capabilities delivered through the OPNFV project.

OPNFV provides a suite of scenarios (infrastructure deployment options) that can be installed to host virtualized network functions (VNFs). This guide intends to help users of the platform leverage the features and capabilities delivered by the OPNFV project in support of these applications.

OPNFV Continuous Integration builds, deploys and tests combinations of virtual infrastructure components in what are defined as scenarios. A scenario may include components such as OpenStack, OpenDaylight, OVS, KVM etc. where each scenario will include different source components or configurations. Scenarios are designed to enable specific features and capabilities in the platform that can be leveraged by the OPNFV user community.

The following links outline the feature deliveries from the participant OPNFV projects in the Colorado release. Each of the participating projects provides detailed descriptions of the delivered features, including use cases, implementation and configuration specifics on a per OPNFV project basis.

The following are Configuration Guides and User Guides, which assume that the reader already has some information about a given project’s specifics and deliverables. These guides are intended to be used following the installation of a given OPNFV installer to allow a user to deploy and implement features delivered by OPNFV.

If you are unsure about the specifics of a given project, please refer to the OPNFV projects home page, found on http://wiki.opnfv.org, for specific details.

You can find project specific usage and configuration information below:

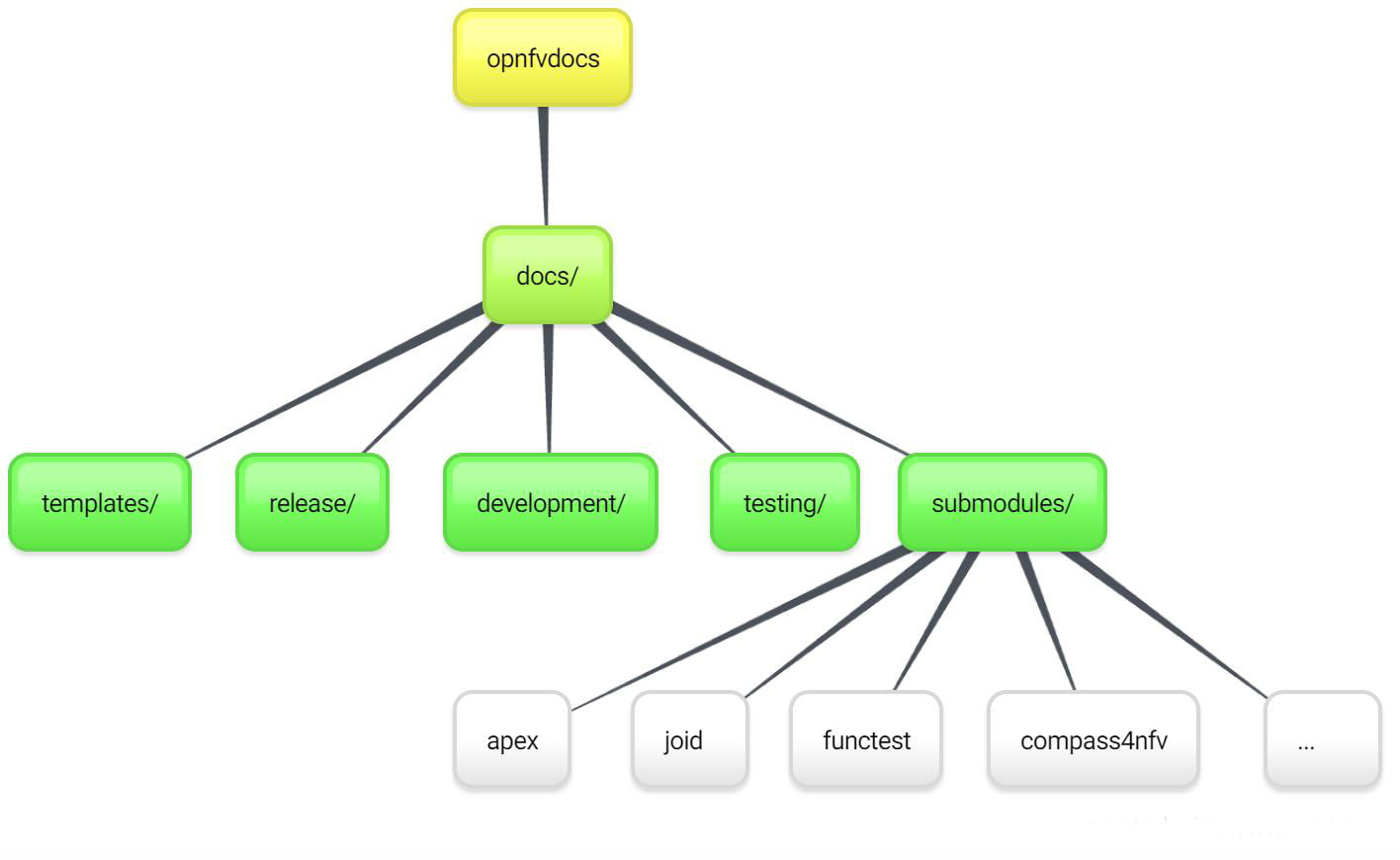

This page covers documentation handling for OPNFV. OPNFV projects are expected to create a variety of document types, according to the nature of the project. Some of these are common to projects that develop/integrate features into the OPNFV platform, e.g. Installation Instructions and User/Configuration Guides. Other document types may be project-specific.

OPNFV documentation is automated and integrated into our git & gerrit toolchains.

We use RST document templates in our repositories and automatically render them to HTML and PDF versions of the documents in our artifact store. Our wiki is also able to integrate these rendered documents directly, allowing projects to use the revision-controlled documentation process for project information, content and deliverables. Read this page which elaborates on how documentation is to be included within opnfvdocs.

All contributions to the OPNFV project are done in accordance with the OPNFV licensing requirements. Documentation in OPNFV is contributed in accordance with the Creative Commons 4.0 licence. All documentation files need to be licensed using the Creative Commons licence. The following example may be applied in the first lines of all contributed RST files:

.. This work is licensed under a Creative Commons Attribution 4.0 International License.

.. http://creativecommons.org/licenses/by/4.0

.. (c) <optionally add copyright holder's name>

These lines will not be rendered in the html and pdf files.
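A quick way to check that contributed files carry this header is a small shell helper. The following is a minimal sketch, not part of the opnfvdocs toolchain: the function names are hypothetical, and it assumes the licence comment appears within the first five lines of each file.

```shell
# has_license_header <file>
# Succeeds if the first five lines of the file contain the
# Creative Commons Attribution 4.0 licence comment.
has_license_header() {
    head -n 5 "$1" \
        | grep 'Creative Commons Attribution 4.0 International License' >/dev/null
}

# list_unlicensed <docs-dir>
# Prints the path of every .rst file under <docs-dir> that lacks the header.
list_unlicensed() {
    find "$1" -type f -name '*.rst' | sort | while IFS= read -r f; do
        has_license_header "$f" || printf '%s\n' "$f"
    done
}
```

Running `list_unlicensed docs/` from a repository root would list the RST files that still need the licence lines; an empty result means every file passed the check.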

All documentation for your project should be structured and stored in the <repo>/docs/ directory. The documentation toolchain will look in these directories and be triggered on events in these directories when generating documents.

A general structure is proposed for storing and handling documents that are common across many projects but also for documents that may be project specific. The documentation is divided into three areas: Release, Development and Testing. Templates for these areas can be found under opnfvdocs/docs/templates/.

Project teams are encouraged to use templates provided by the opnfvdocs project to ensure that there is consistency across the community. The following representation shows the expected structure:

docs/

├── development

│ ├── design

│ ├── overview

│ └── requirements

├── release

│ ├── configguide

│ ├── installation

│ ├── release-notes

│ ├── scenarios

│ │ └── scenario.name

│ └── userguide

└── testing
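The layout above can be created in one step with `mkdir -p`. The helper below is a sketch only: the function name is hypothetical, and `scenario.name` is kept as the placeholder directory from the tree unless a scenario name is passed as the second argument.

```shell
# create_docs_tree <repo-root> [scenario-name]
# Creates the proposed docs/ directory structure under <repo-root>.
create_docs_tree() {
    local repo_root="$1"
    local scenario="${2:-scenario.name}"   # placeholder name from the tree
    mkdir -p \
        "$repo_root/docs/development/design" \
        "$repo_root/docs/development/overview" \
        "$repo_root/docs/development/requirements" \
        "$repo_root/docs/release/configguide" \
        "$repo_root/docs/release/installation" \
        "$repo_root/docs/release/release-notes" \
        "$repo_root/docs/release/scenarios/$scenario" \
        "$repo_root/docs/release/userguide" \
        "$repo_root/docs/testing"
}
```

Because `mkdir -p` ignores directories that already exist, the function can be run safely against a repository that has part of the structure in place.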

Release documentation is the set of documents that are published for each OPNFV release. These documents are created and developed following the OPNFV release process and milestones and should reflect the content of the OPNFV release.

These documents have a master index.rst file in the <opnfvdocs> repository and extract content from other repositories. To provide content for these documents, place your <content>.rst files in a directory in your repository that matches the master document, and add a reference to that file in the correct place in the corresponding index.rst file in opnfvdocs/docs/release/.

Platform Overview: opnfvdocs/docs/release/overview

Installation Instruction: <repo>/docs/release/installation

User Guide: <repo>/docs/release/userguide

Configuration Guide: <repo>/docs/release/configguide

Release Notes: <repo>/docs/release/release-notes

Structure TBD together with test projects

Documentation not aimed at any specific release, such as design documentation, project overview or requirement documentation, can be stored under /docs/development. Links to generated documents will be displayed under the Development Documentation section on docs.opnfv.org. You are encouraged to establish the following basic structure for your project as needed:

Requirement Documentation: <repo>/docs/development/requirements/

Design Documentation: <repo>/docs/development/design

Project overview: <repo>/docs/development/overview

Add your documentation to your repository in the folder structure and according to the templates listed above. The documentation templates you will require are available in the opnfvdocs/docs/templates/ directory of the opnfvdocs repository; copy the relevant templates to the <repo>/docs/ directory in your repository. For instance, to document the user guide, the steps are as follows:

git clone ssh://<your_id>@gerrit.opnfv.org:29418/opnfvdocs.git

cp -p opnfvdocs/docs/userguide/* <my_repo>/docs/userguide/

You should then add the relevant information to the template that will explain the documentation. When you are done writing, you can commit the documentation to the project repository.

git add .

git commit --signoff --all

git review

opnfvdocs/docs/submodule/ as follows:

To include your project-specific documentation in the composite documentation, first identify where your project documentation should be included. For example, if your project user guide should appear in the ‘OPNFV Userguide’:

vim opnfvdocs/docs/release/userguide.introduction.rst

This opens the text editor. Identify where you want to add the user guide. If the user guide is to be added to the toctree, simply include the path to it, for example:

.. toctree::
    :maxdepth: 1

    submodules/functest/docs/userguide/index
    submodules/bottlenecks/docs/userguide/index
    submodules/yardstick/docs/userguide/index
    <submodules/path-to-your-file>

It’s pretty common to want to reference another location in the OPNFV documentation, and it’s pretty easy to do with reStructuredText. This is a quick primer; more information is in the Sphinx section on Cross-referencing arbitrary locations.

Within a single document, you can reference another section simply by:

This is a reference to `The title of a section`_

This assumes that somewhere else in the same file there is a section title like:

The title of a section

^^^^^^^^^^^^^^^^^^^^^^

It’s typically better to use :ref: syntax and labels to provide links as they work across files and are resilient to sections being renamed. First, you need to create a label something like:

.. _a-label:

The title of a section
^^^^^^^^^^^^^^^^^^^^^^

Note

The underscore (_) before the label is required.

Then you can reference the section anywhere by simply doing:

This is a reference to :ref:`a-label`

or:

This is a reference to :ref:`a section I really liked <a-label>`

Note

When using :ref:-style links, you don’t need a trailing underscore (_).

Because the labels have to be unique, it usually makes sense to prefix the labels with the project name to help share the label space, e.g., sfc-user-guide instead of just user-guide.
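Label collisions can be spotted before pushing a patch by scanning the RST sources. The following is a rough sketch using standard `find`, `grep`, `sed`, `sort` and `uniq`; the function names are hypothetical, and it only recognises explicit labels written on a line of their own as `.. _name:`.

```shell
# list_labels <docs-dir>
# Prints every explicit label (".. _name:" on its own line) in .rst files.
list_labels() {
    find "$1" -type f -name '*.rst' \
        -exec grep -hoE '^\.\. _[A-Za-z0-9._-]+:[[:space:]]*$' {} + \
        | sed -E 's/^\.\. _(.*):[[:space:]]*$/\1/'
}

# duplicate_labels <docs-dir>
# Prints each label that is defined more than once.
duplicate_labels() {
    list_labels "$1" | sort | uniq -d
}
```

If `duplicate_labels docs/` prints anything, those labels are defined in more than one place and are candidates for a project-name prefix.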

Once you have made these changes you need to push the patch back to the opnfvdocs team for review and integration.

git add .

git commit --signoff --all

git review

Be sure to add the project leader of the opnfvdocs project as a reviewer of the change you just pushed in gerrit.

It is recommended that all rst content is validated against doc8 standards. To validate your rst files using doc8, first install doc8.

sudo pip install doc8

doc8 can now be used to check the rst files. Run it as follows:

doc8 --ignore D000,D001 <file>

To build the whole documentation under opnfvdocs/, follow these steps:

Install virtual environment.

sudo pip install virtualenv

cd /local/repo/path/to/project

Download the OPNFVDOCS repository.

git clone https://gerrit.opnfv.org/gerrit/opnfvdocs

Change directory to opnfvdocs & install requirements.

cd opnfvdocs

sudo pip install -r etc/requirements.txt

Update submodules, build documentation using tox & then open using any browser.

git submodule update --init

tox -edocs

firefox docs/_build/html/index.html

Note

Make sure to run tox -edocs and not just tox.

To test how the documentation renders in HTML, follow these steps:

Install virtual environment.

sudo pip install virtualenv

cd /local/repo/path/to/project

Download the opnfvdocs repository.

git clone https://gerrit.opnfv.org/gerrit/opnfvdocs

Change directory to opnfvdocs & install requirements.

cd opnfvdocs

sudo pip install -r etc/requirements.txt

Move the conf.py file to your project folder where RST files have been kept:

mv opnfvdocs/docs/conf.py <path-to-your-folder>/

Move the static files to your project folder:

mv opnfvdocs/_static/ <path-to-your-folder>/

Build the documentation from within your project folder:

sphinx-build -b html <path-to-your-folder> <path-to-output-folder>

Your documentation will be built as HTML inside the specified output folder.

Note

Be sure to remove the conf.py file, the _static/ files and the output folder from <project>/docs/. This is for testing only. Only commit the rst files and related content.