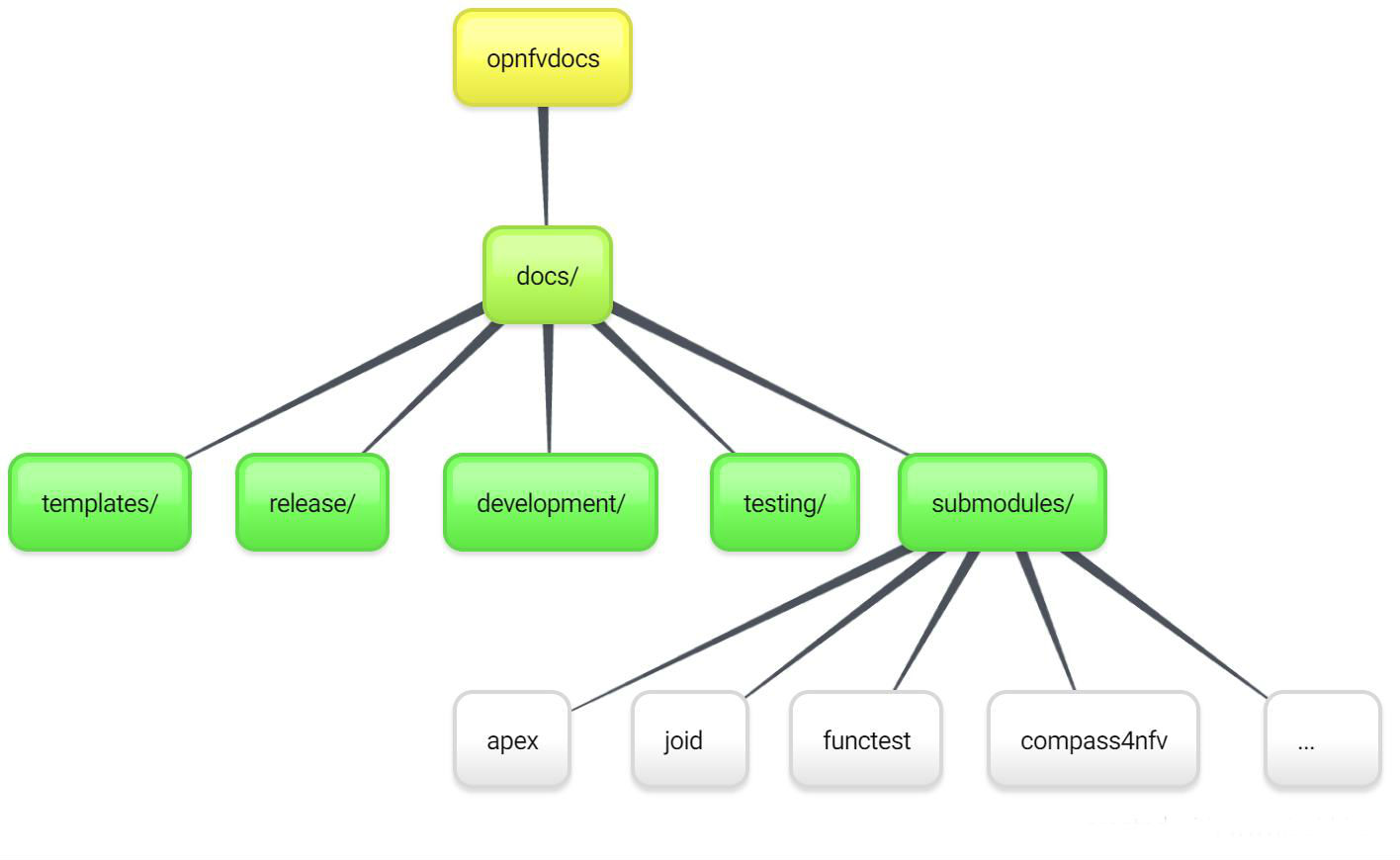

Open Platform for NFV (OPNFV) facilitates the development and evolution of NFV components across various open source ecosystems. Through system level integration, deployment and testing, OPNFV creates a reference NFV platform to accelerate the transformation of enterprise and service provider networks. Participation is open to anyone, whether you are an employee of a member company or just passionate about network transformation.

Network Functions Virtualization (NFV) is transforming the networking industry via software-defined infrastructures, and open source is the proven method for quickly developing software for commercial products and services that can move markets. Open Platform for NFV (OPNFV) facilitates the development and evolution of NFV components across various open source ecosystems. Through system level integration, deployment and testing, OPNFV constructs a reference NFV platform to accelerate the transformation of enterprise and service provider networks. As an open source project, OPNFV is uniquely positioned to bring together the work of standards bodies, open source communities, service providers and commercial suppliers to deliver a de facto NFV platform for the industry.

By integrating components from upstream projects, the community is able to conduct performance and use case-based testing on a variety of solutions to ensure the platform’s suitability for NFV use cases. OPNFV also works upstream with other open source communities to bring contributions and learnings from its work directly to those communities in the form of blueprints, patches, bugs, and new code.

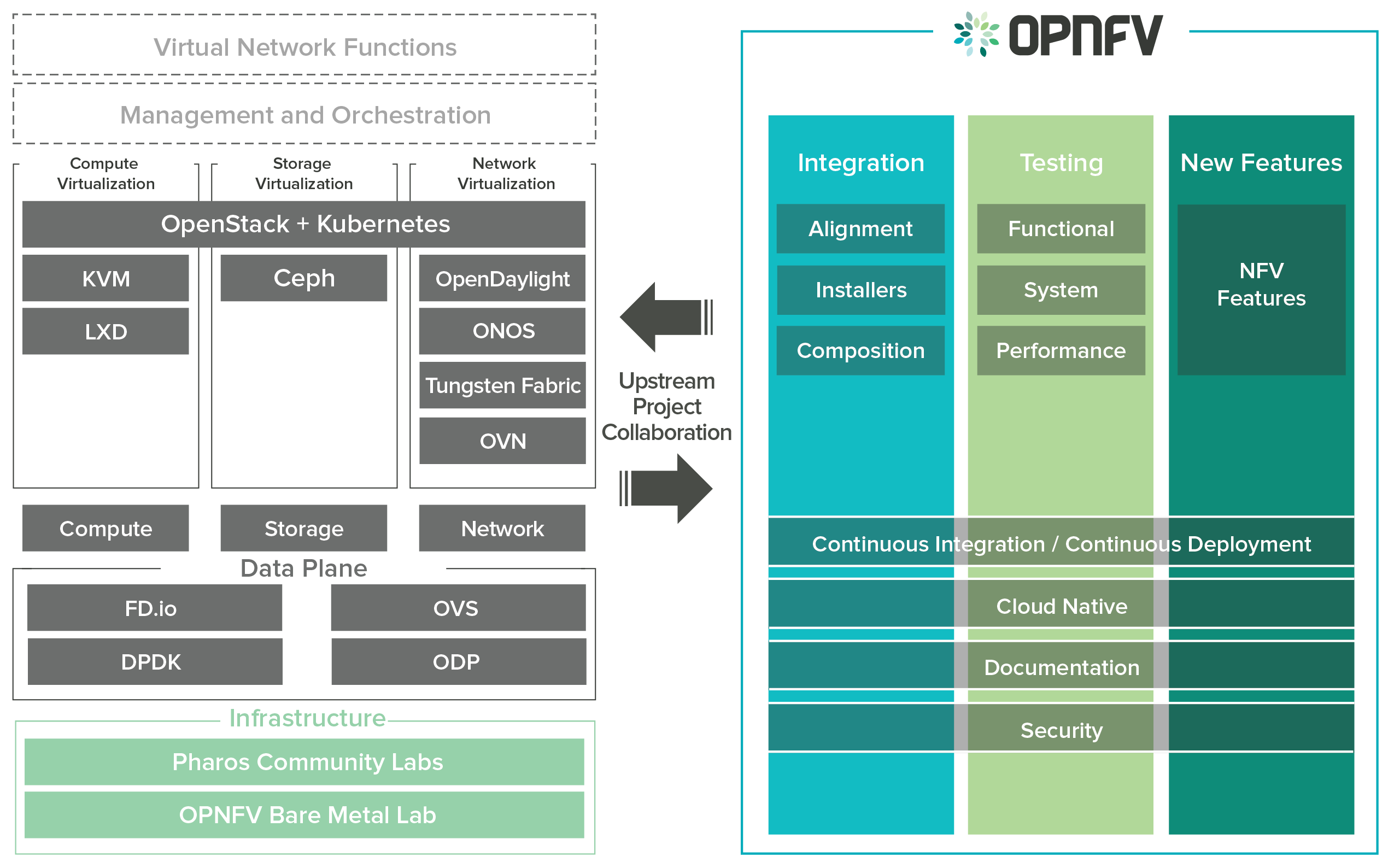

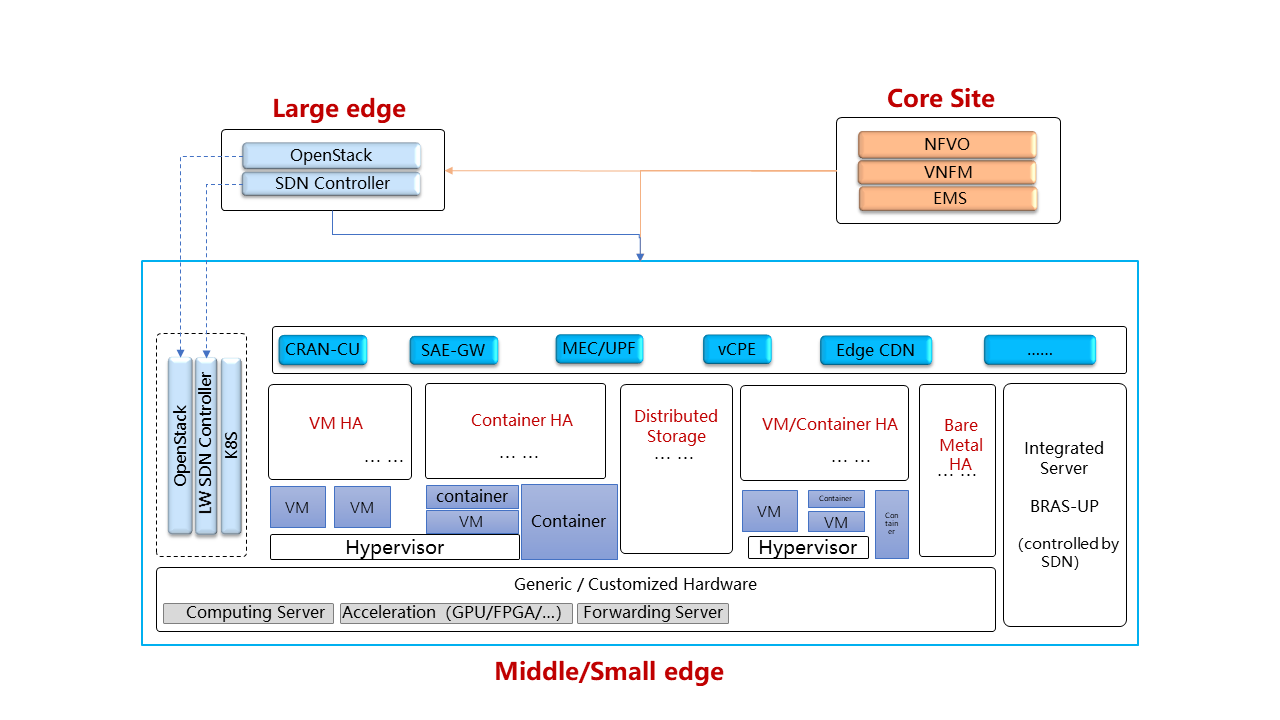

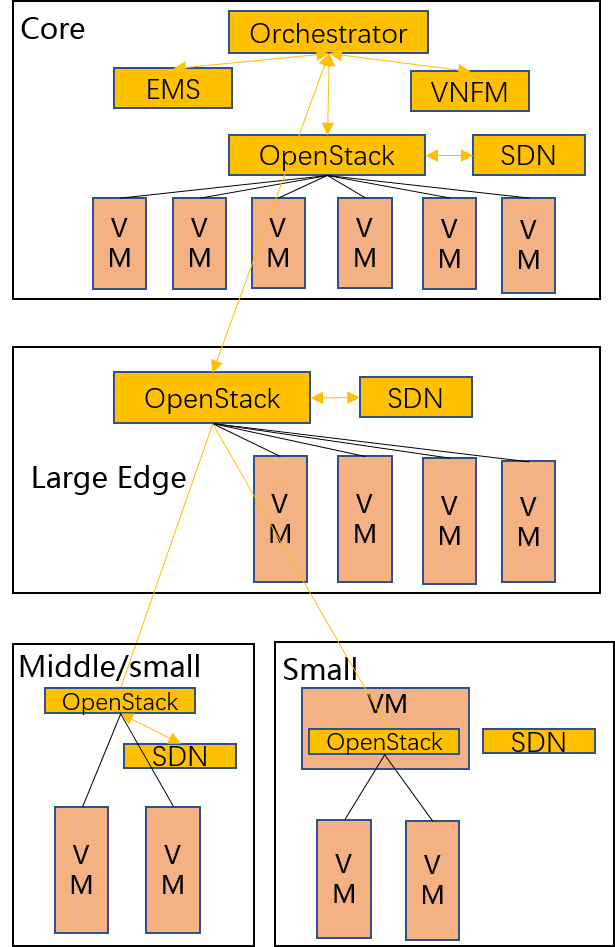

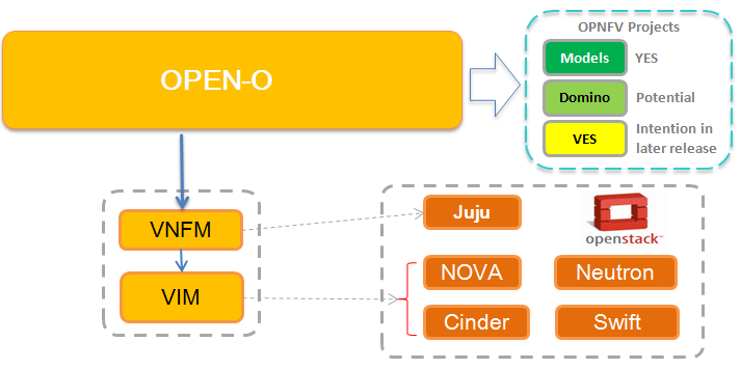

OPNFV focuses on building NFV Infrastructure (NFVI) and Virtualised Infrastructure Management (VIM) by integrating components from upstream projects such as OpenDaylight, ONOS, Tungsten Fabric, OVN, OpenStack, Kubernetes, Ceph Storage, KVM, Open vSwitch, and Linux. More recently, OPNFV has extended its portfolio of forwarding solutions to include DPDK, fd.io and ODP. It is able to run on both Intel and ARM commercial and white-box hardware, supports VM, container and bare-metal workloads, and, in the Fraser release, includes Management and Network Orchestration (MANO) components, primarily for application composition and management.

These capabilities, along with application programmable interfaces (APIs) to other NFV elements, form the basic infrastructure required for Virtualized Network Functions (VNF) and MANO components.

Concentrating on these components while also considering proposed projects on additional topics (such as the MANO components and applications themselves), OPNFV aims to enhance NFV services by increasing performance and power efficiency, improving reliability, availability and serviceability, and delivering comprehensive platform instrumentation.

The OPNFV project addresses a number of aspects in the development of a consistent virtualisation platform including common hardware requirements, software architecture, MANO and applications.

OPNFV Platform Overview Diagram

To address these areas effectively, the OPNFV platform architecture can be decomposed into the following basic building blocks:

The infrastructure working group oversees such topics as lab management, workflow, definitions, metrics and tools for OPNFV infrastructure.

Fundamental to the WG is the Pharos Specification which provides a set of defined lab infrastructures over a geographically and technically diverse federated global OPNFV lab.

Labs may instantiate bare-metal and virtual environments that are accessed remotely by the community and used for OPNFV platform and feature development, building, deployment and testing. No two labs are the same, and the heterogeneity of the Pharos environment provides the ideal platform for establishing hardware and software abstractions with well understood performance characteristics.

Community labs are hosted by OPNFV member companies on a voluntary basis. The Linux Foundation also hosts an OPNFV lab that provides centralized CI and other production resources which are linked to community labs.

The Lab-as-a-Service (LaaS) offering allows developers to readily access NFV infrastructure on demand. Ongoing lab capabilities will include the ability to easily automate the deployment and testing of any OPNFV install scenario in any lab environment using a concept called “Dynamic CI”.

The OPNFV software platform is comprised exclusively of open source implementations of platform component pieces. OPNFV is able to draw from the rich ecosystem of NFV related technologies available in open source communities, and then integrate, test, measure and improve these components in conjunction with our upstream communities.

OPNFV derives its virtual infrastructure management from one of our largest upstream ecosystems, OpenStack. OpenStack provides a complete reference cloud management system and associated technologies. While the OpenStack community sustains a broad set of projects, not all technologies are relevant in the NFV domain. The OPNFV community consumes a subset of OpenStack projects, and the usage and composition may vary depending on the installer and scenario.

For details on the scenarios available in OPNFV and the specific composition of components refer to the OPNFV User Guide & Configuration Guide.

OPNFV now also has initial support for containerized VNFs.

OPNFV currently uses Linux on all target machines; this can include Ubuntu, CentOS or SUSE Linux. The specific version of Linux used for any deployment is documented in the installation guide.

OPNFV, as an NFV focused project, has a significant investment in networking technologies and provides a broad variety of integrated open source reference solutions. The diversity of controllers able to be used in OPNFV is supported by a similarly diverse set of forwarding technologies.

Many SDN controllers relevant to virtual environments are available today, and the OPNFV community supports and contributes to a number of them. The controllers being worked on by the community during this release of OPNFV include:

OPNFV extends Linux virtual networking capabilities by using virtual switching and routing components. The OPNFV community proactively engages with the following open source communities to address performance, scale and resiliency needs apparent in carrier networks.

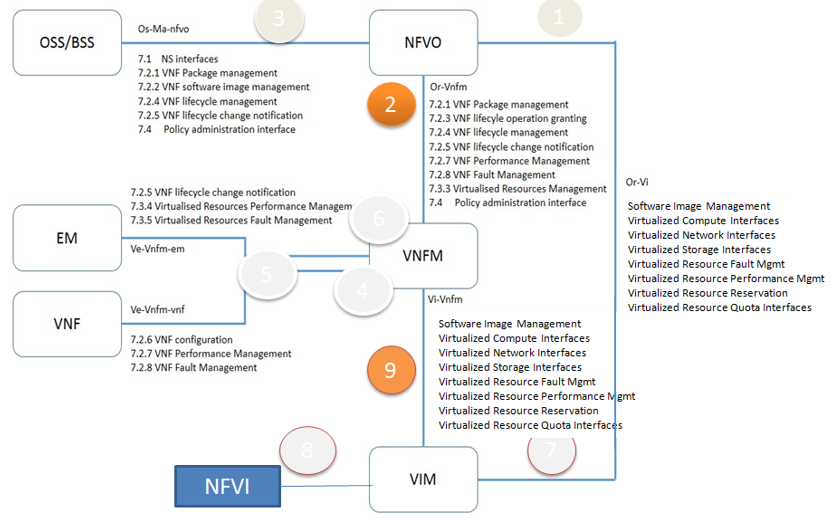

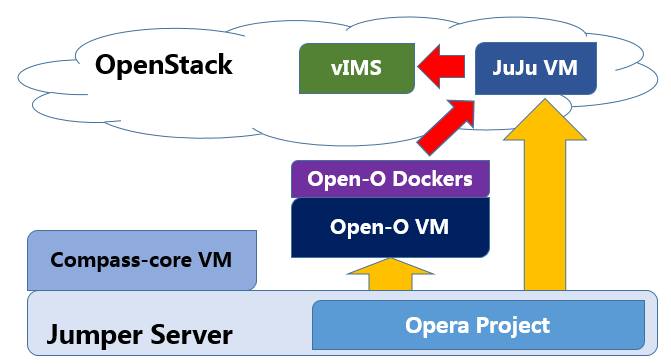

OPNFV integrates open source MANO projects for NFV orchestration and VNF management. New MANO projects are constantly being added.

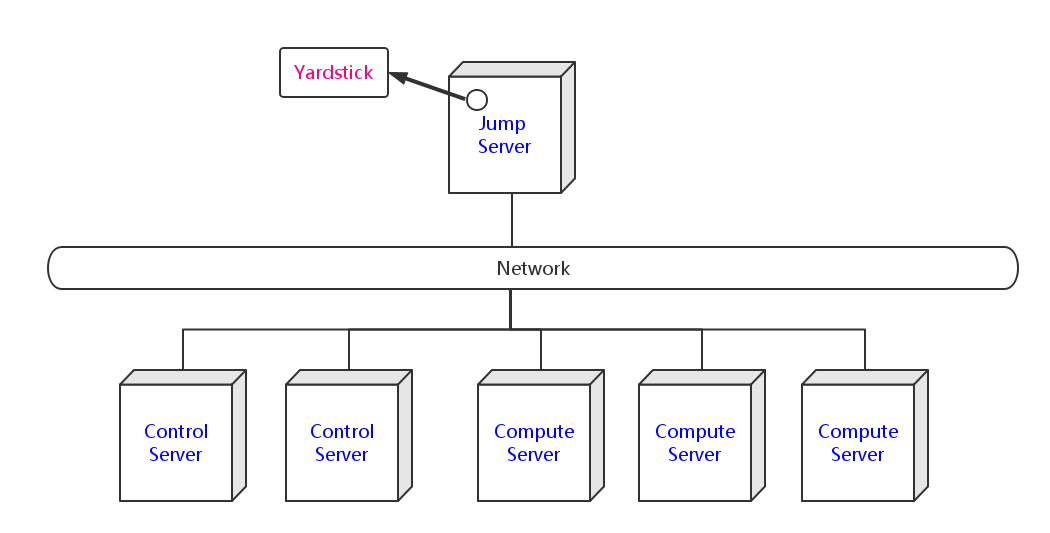

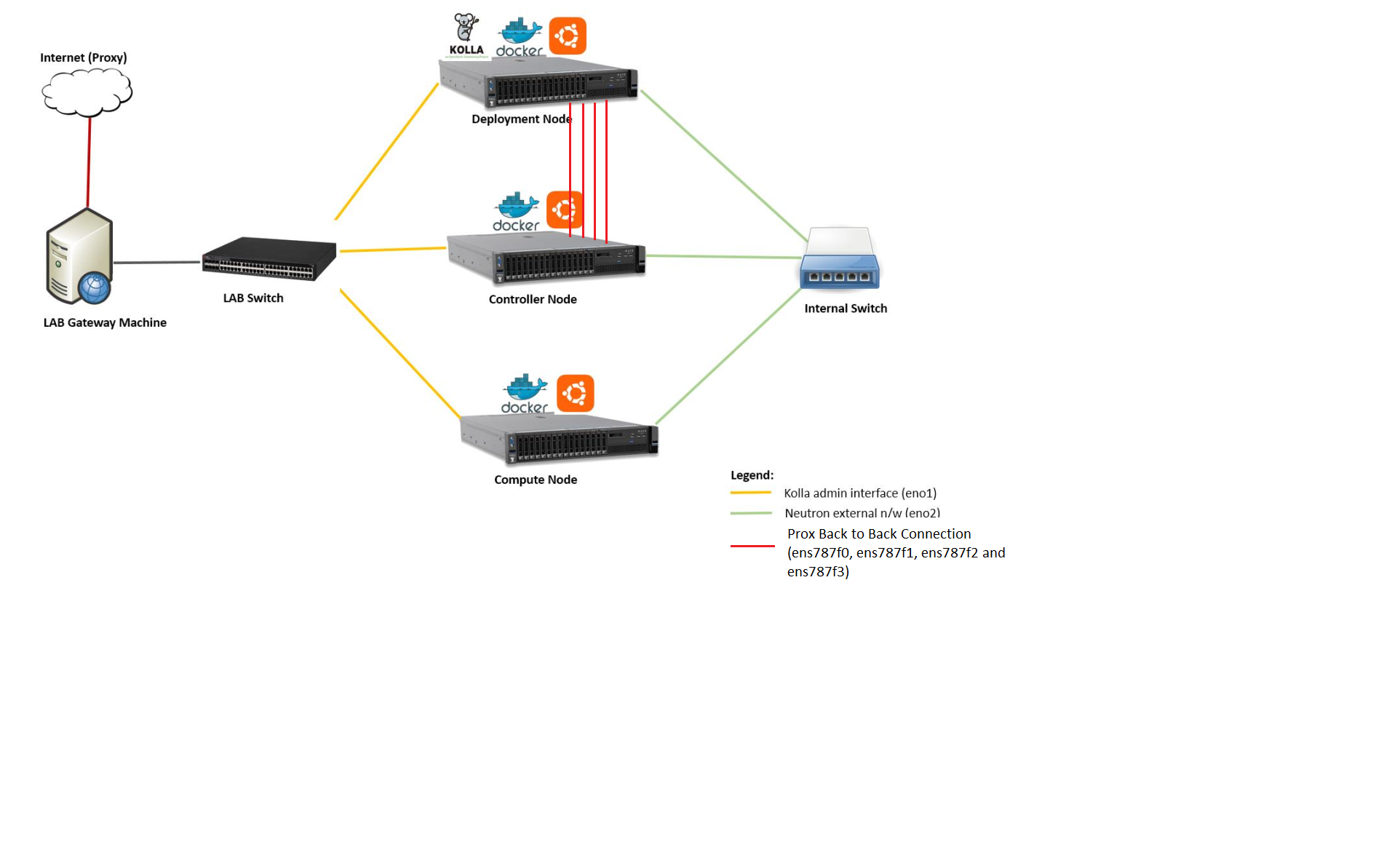

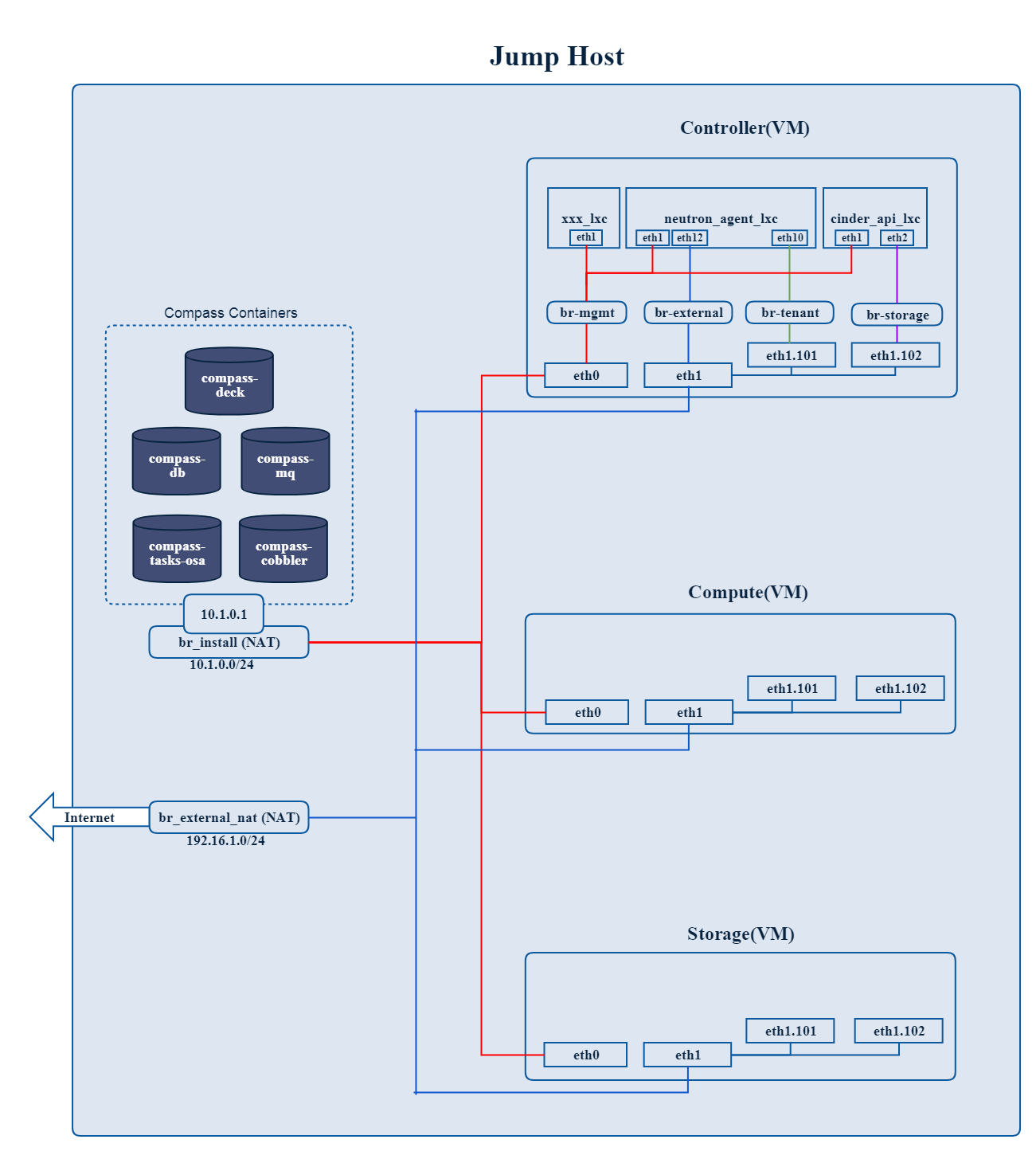

A typical OPNFV deployment starts with three controller nodes running in a high availability configuration, including control plane components from OpenStack, SDN controllers, etc., and a minimum of two compute nodes for deployment of workloads (VNFs). A detailed description of the hardware required to support the five-node configuration can be found in the Pharos specification: Pharos Project

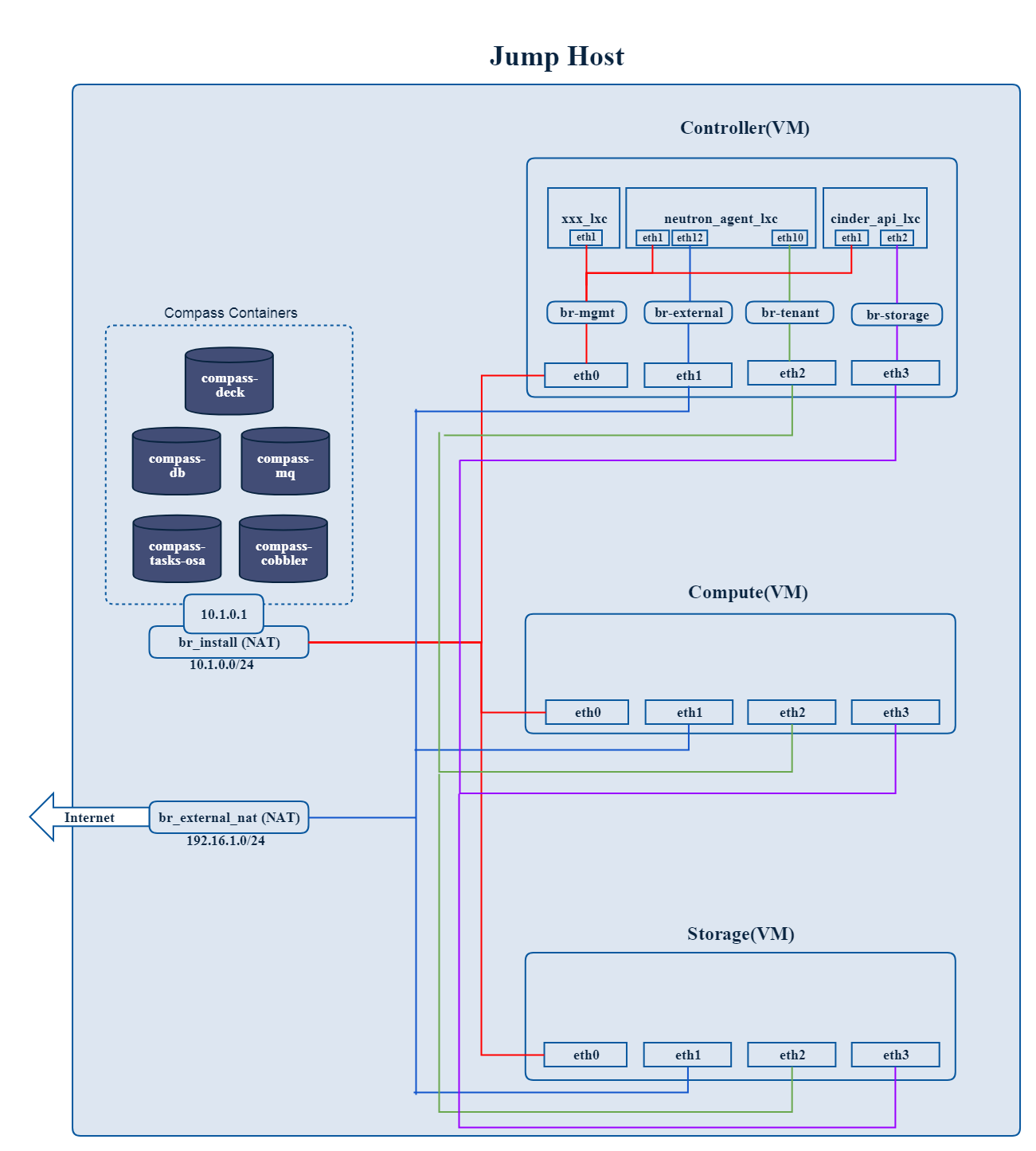

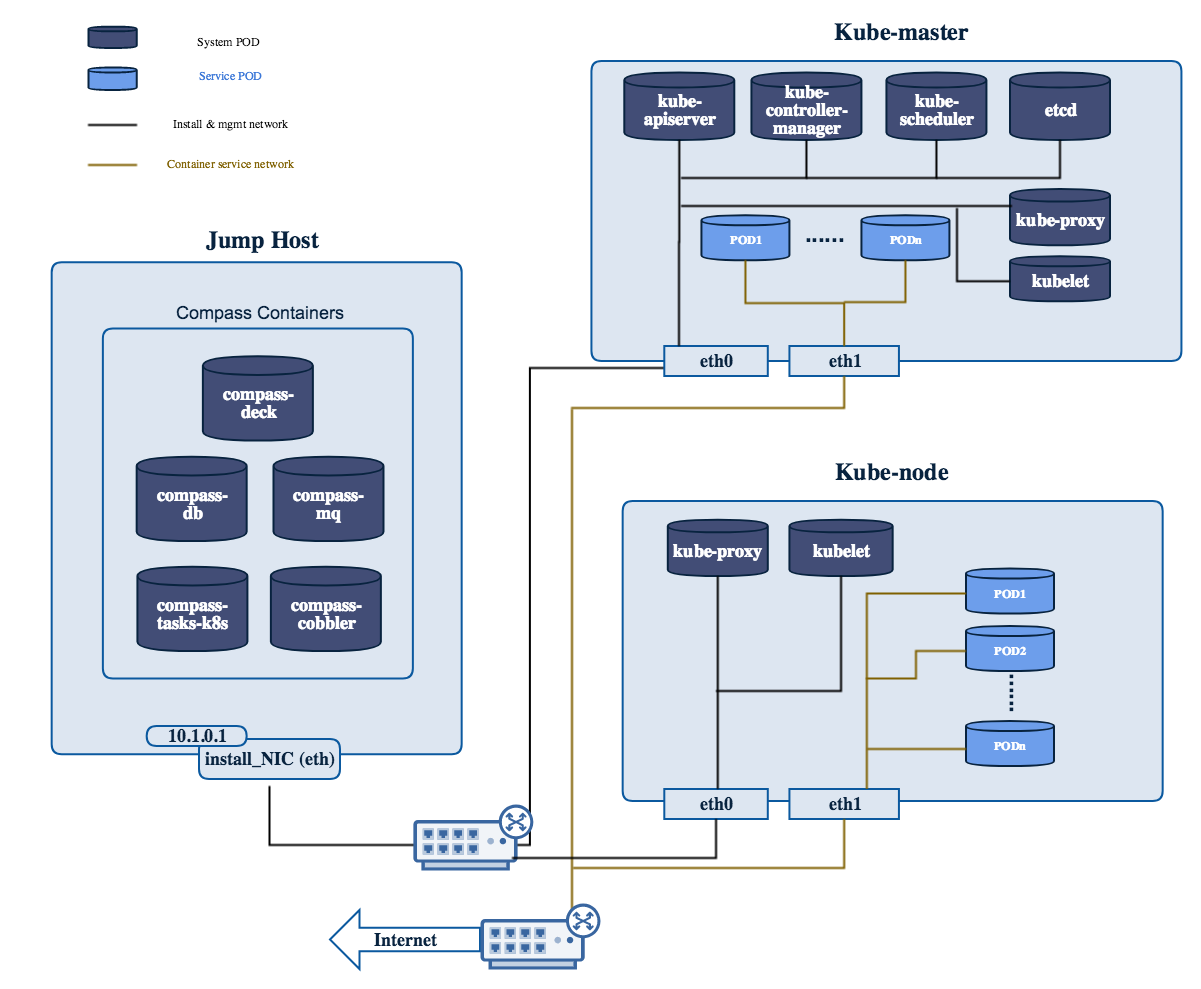

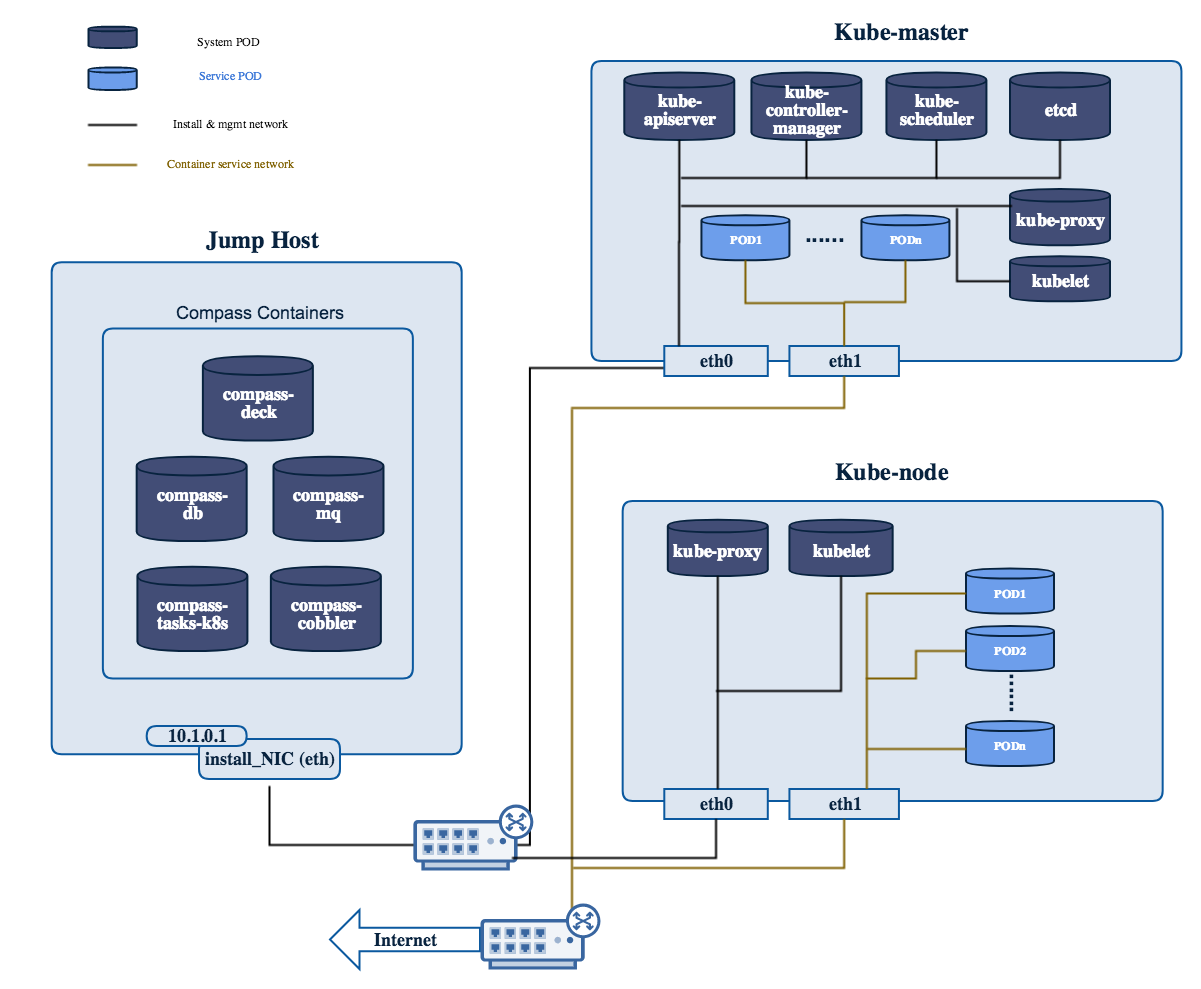

In addition to the deployment on a highly available physical infrastructure, OPNFV can be deployed for development and lab purposes in a virtual environment. In this case each of the hosts is provided by a virtual machine and allows control and workload placement using nested virtualization.

The initial deployment is done using a staging server, referred to as the “jumphost”. This server, either physical or virtual, is first installed with the installation program, which then installs OpenStack and other components on the controller nodes and compute nodes. See the OPNFV User Guide & Configuration Guide for more details.

The OPNFV community has set out to address the needs of virtualization in the carrier network and as such platform validation and measurements are a cornerstone to the iterative releases and objectives.

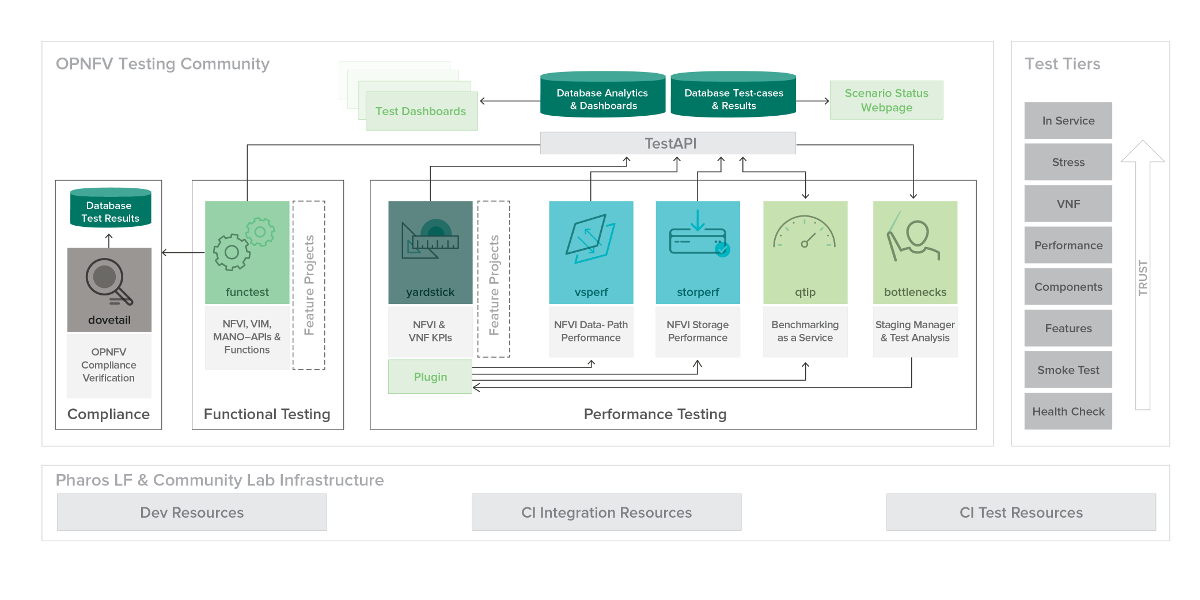

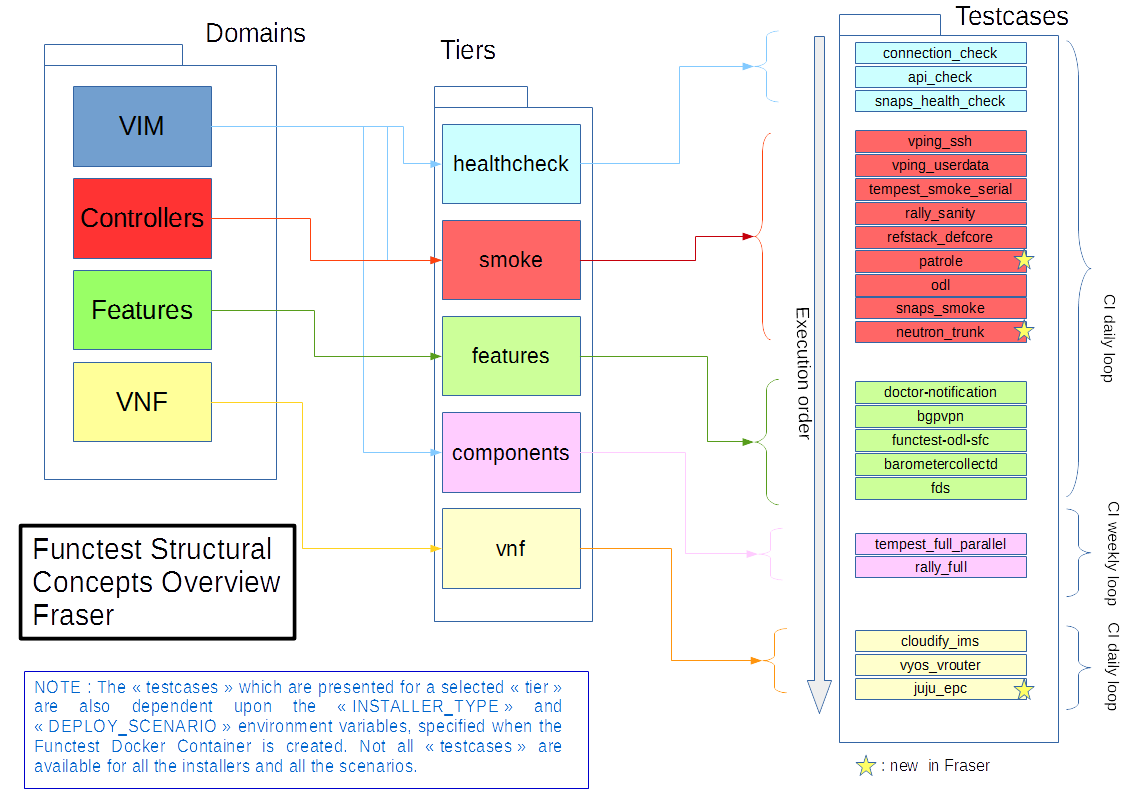

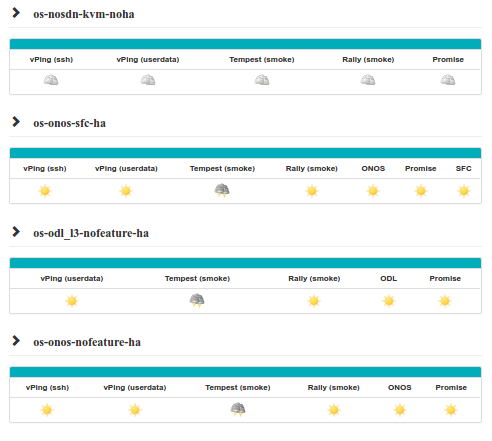

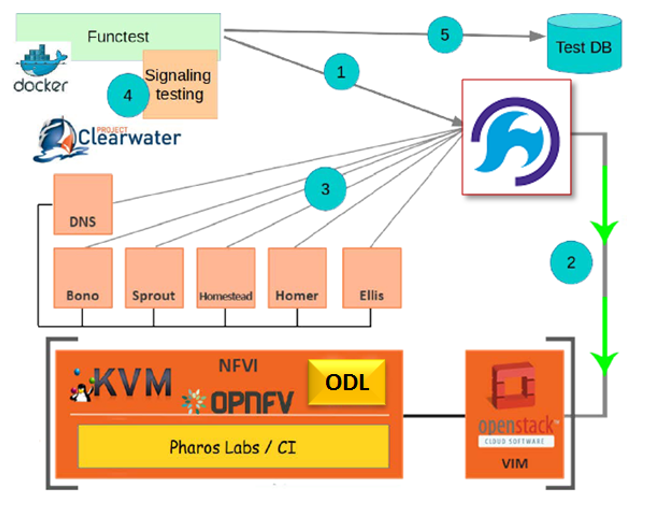

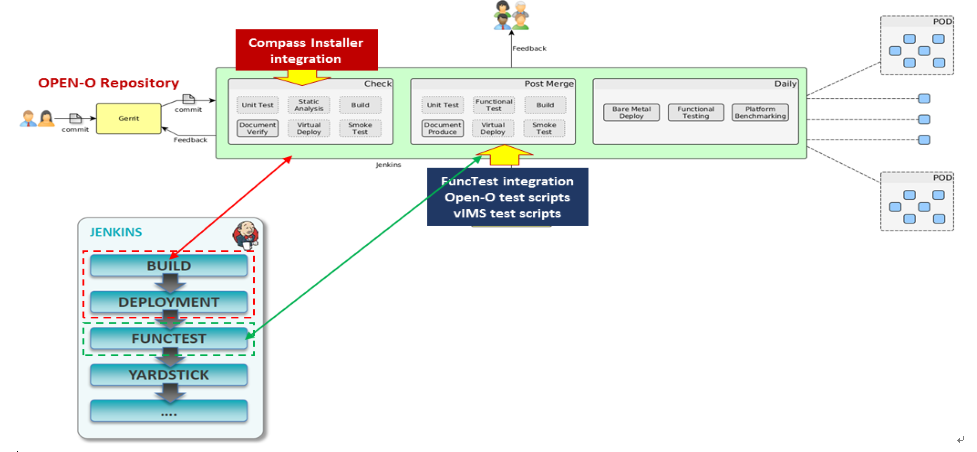

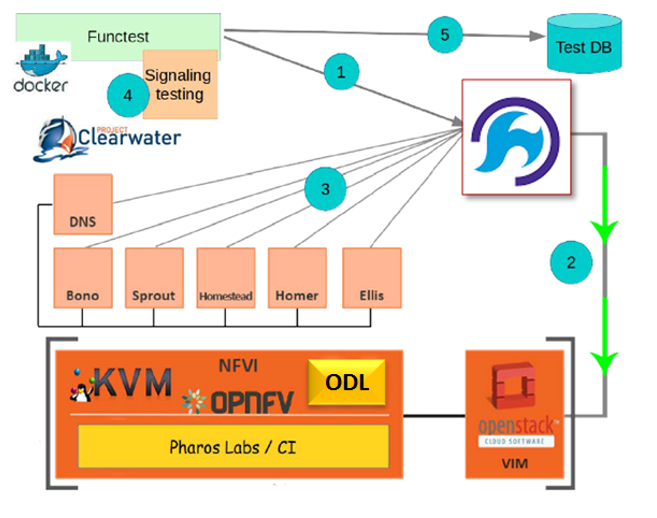

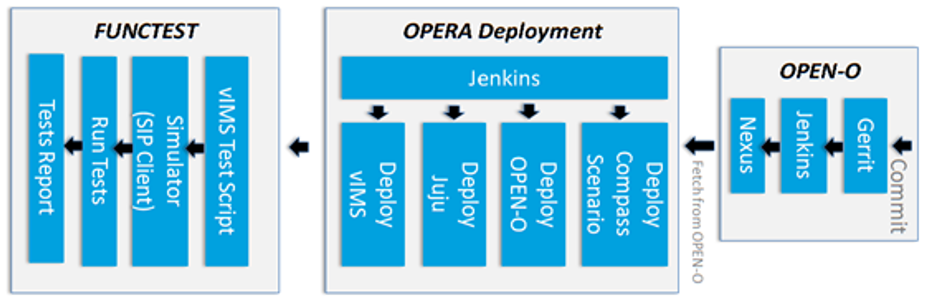

To simplify the complex task of feature, component and platform validation and characterization the testing community has established a fully automated method for addressing all key areas of platform validation. This required the integration of a variety of testing frameworks in our CI systems, real time and automated analysis of results, storage and publication of key facts for each run as shown in the following diagram.

The OPNFV community relies on its testing community to establish release criteria for each OPNFV release. With each release cycle the testing criteria become more stringent and better representative of our feature and resiliency requirements. Each release establishes a set of deployment scenarios to validate, and the testing infrastructure and test suites need to accommodate these features and capabilities.

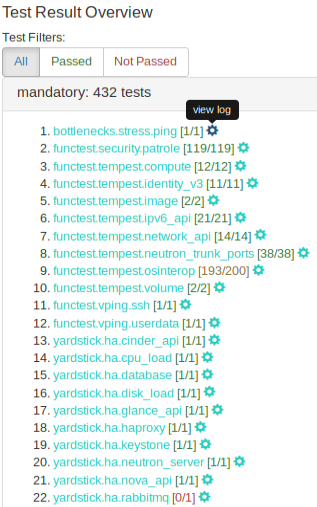

The release criteria as established by the testing teams include passing a set of test cases derived from the functional testing project ‘functest,’ a set of test cases derived from our platform system and performance test project ‘yardstick,’ and a selection of test cases for feature capabilities derived from other test projects such as bottlenecks, vsperf, cperf and storperf. The scenario needs to be able to be deployed, pass these tests, and be removed from the infrastructure iteratively in order to fulfill the release criteria.
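The deploy, test and remove cycle described above can be sketched as a small loop. This is only an illustration: the function name, the three callables and the default iteration count are assumptions made for the example, not part of any OPNFV tool.

```python
def meets_release_criteria(deploy, run_tests, remove, iterations=3):
    """Return True only if every deploy/test/remove cycle succeeds.

    deploy, run_tests and remove are hypothetical caller-supplied callables
    returning a bool (e.g. wrappers around an installer and the test suites).
    The iteration count is an assumption, not an OPNFV-defined value.
    """
    for _ in range(iterations):
        deployed = deploy()
        # Only run the test suites when the deployment itself succeeded.
        passed = deployed and run_tests()
        # Always remove the scenario so the next cycle starts clean.
        removed = remove()
        if not (deployed and passed and removed):
            return False
    return True
```

A scenario whose tests fail in any cycle, or which cannot be removed cleanly, is reported as not meeting the criteria.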

Functest provides a functional testing framework incorporating a number of test suites and test cases that test and verify OPNFV platform functionality. The scope of Functest and relevant test cases can be found in the Functest User Guide.

Functest provides both feature project and component test suite integration, leveraging OpenStack and SDN controller testing frameworks to verify that the key components of the OPNFV platform are running successfully.

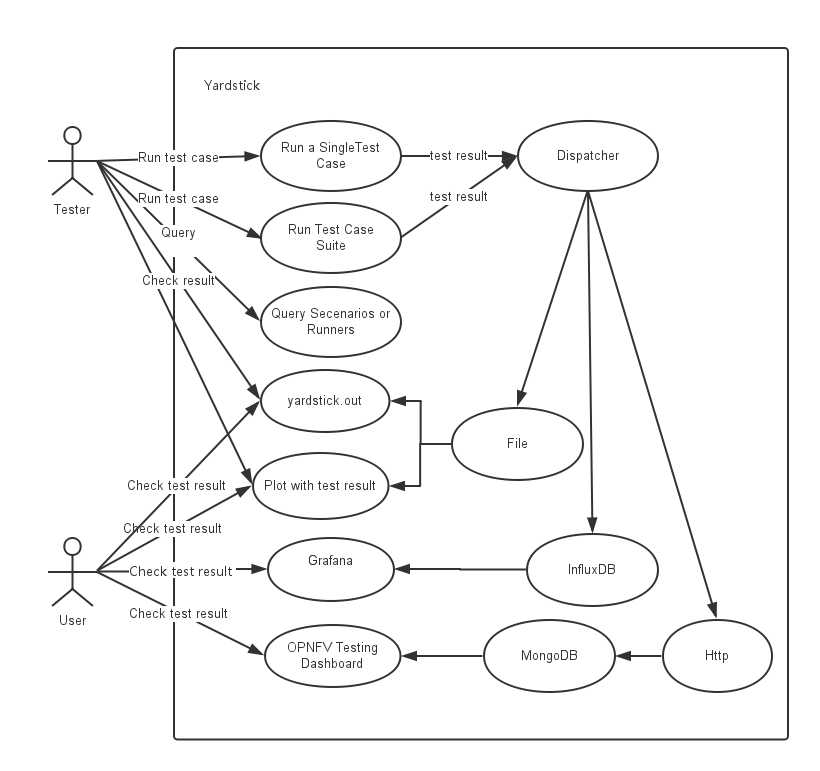

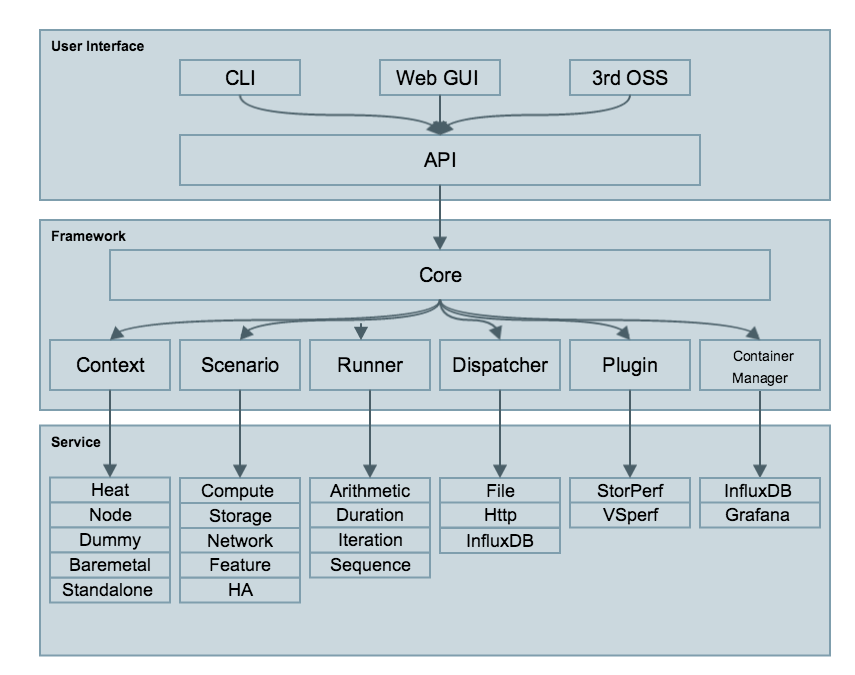

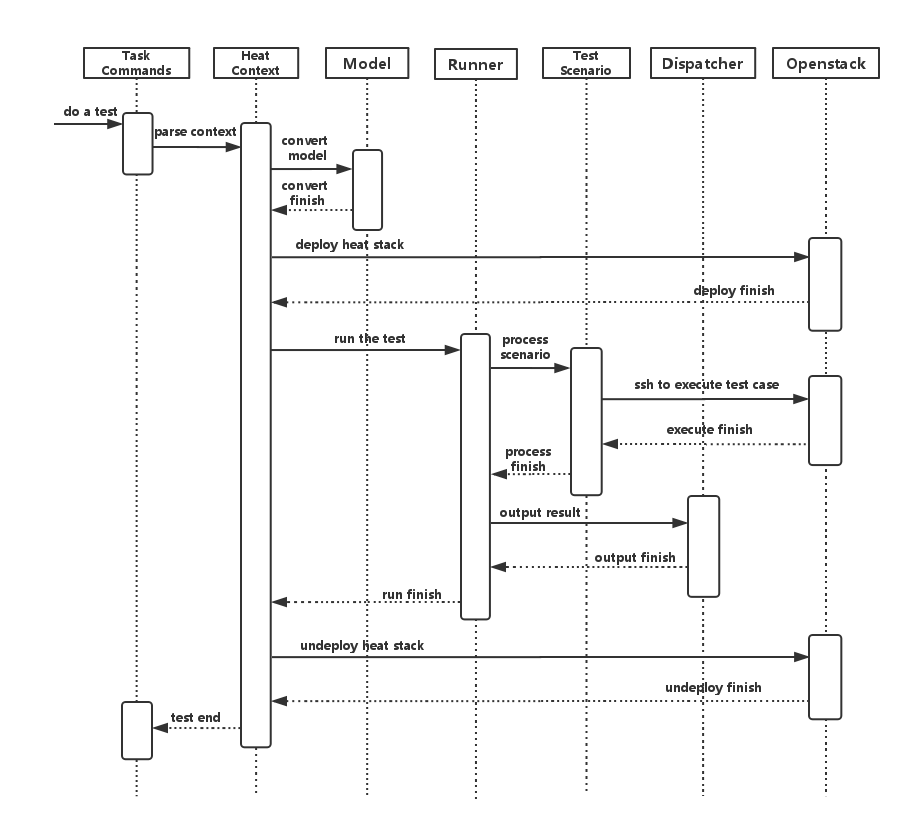

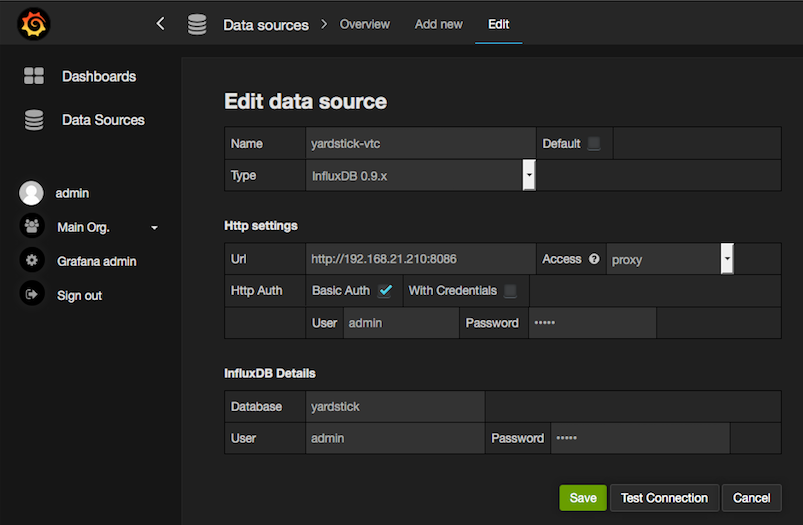

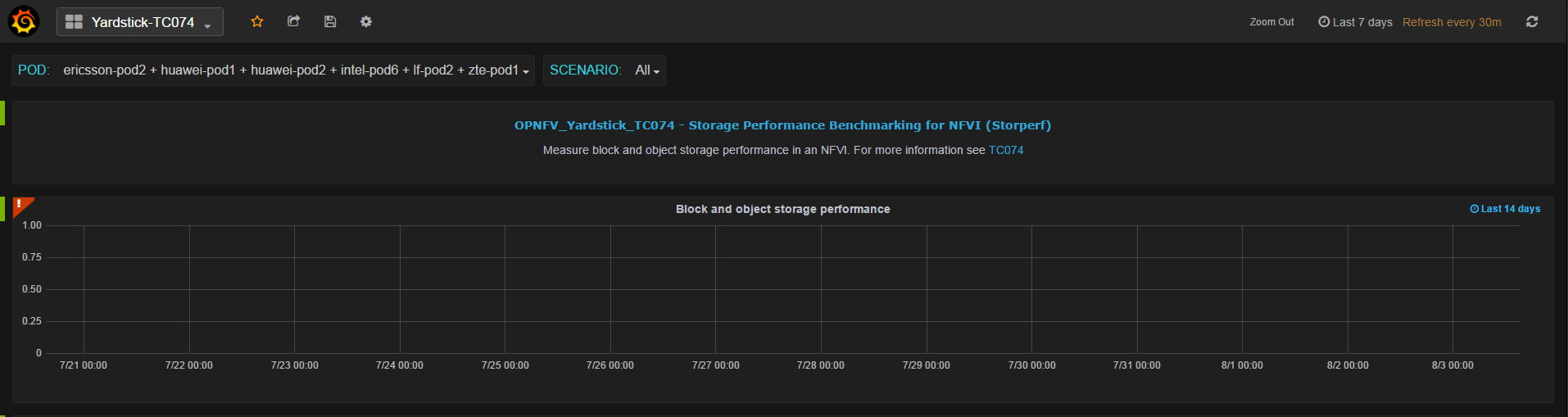

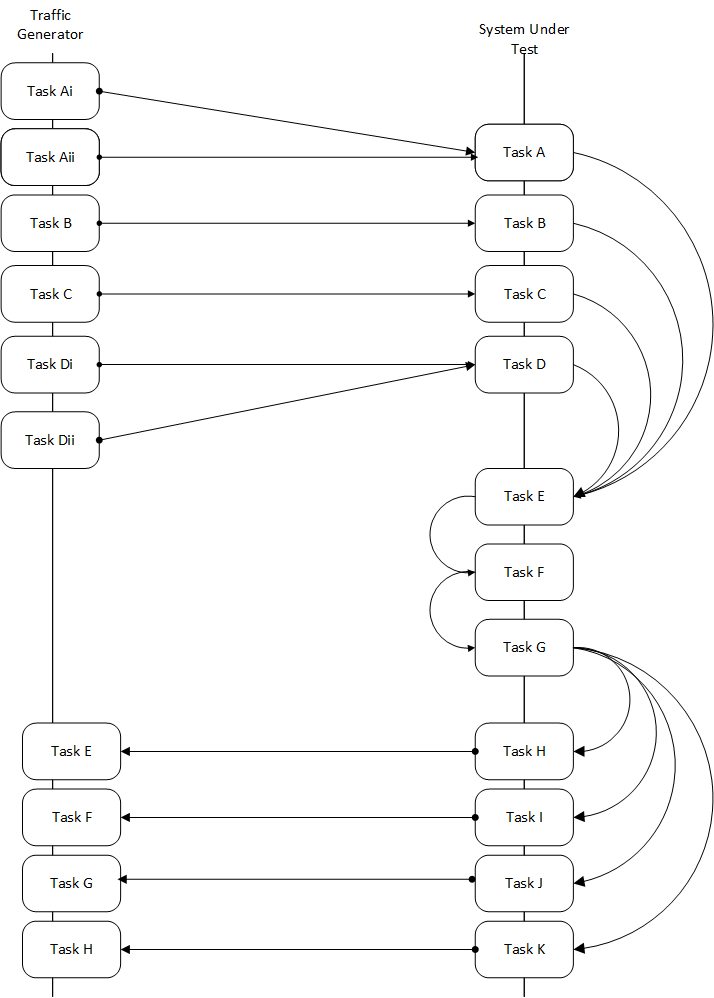

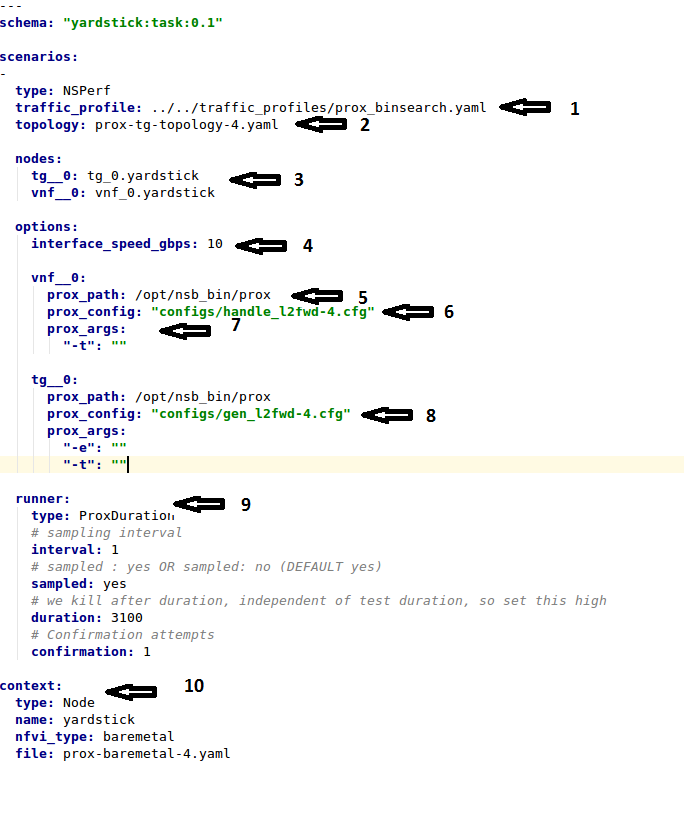

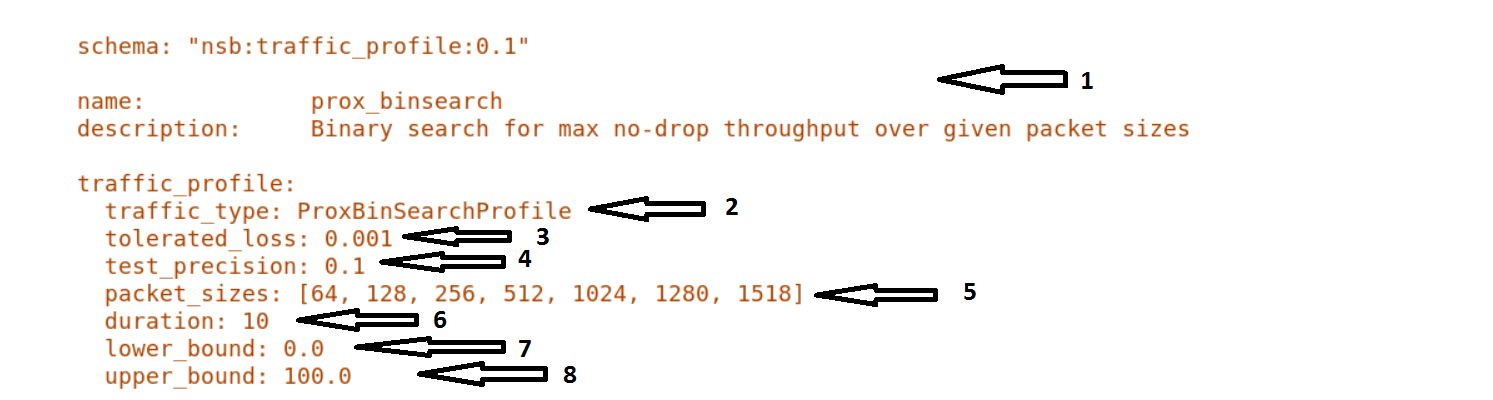

Yardstick is a testing project for verifying the infrastructure compliance when running VNF applications. Yardstick benchmarks a number of characteristics and performance vectors on the infrastructure, making it a valuable pre-deployment NFVI testing tool.

Yardstick provides a flexible testing framework for launching other OPNFV testing projects.

There are two types of test cases in Yardstick:

The OPNFV community is developing a set of test suites that evaluate reference behaviors and capabilities of NFV systems developed outside the OPNFV ecosystem, measuring their ability to provide the features and capabilities developed within it.

The Dovetail project will provide a test framework and methodology able to be used on any NFV platform, including an agreed set of test cases establishing evaluation criteria for exercising an OPNFV compatible system. The Dovetail project has begun establishing the test framework and will provide a preliminary methodology for the Fraser release. Work will continue to develop these test cases to establish a stand-alone compliance evaluation solution in future releases.

Besides the test suites and cases for release verification, additional testing is performed to validate specific features or characteristics of the OPNFV platform. These testing frameworks and test cases may address specific needs, such as extended measurements, additional testing stimuli, or tests simulating environmental disturbances or failures.

These additional testing activities provide a more complete evaluation of the OPNFV platform. Some of the projects focused on these testing areas include:

Bottlenecks provides a framework to find system limitations and bottlenecks, providing root cause isolation capabilities to facilitate system evaluation.

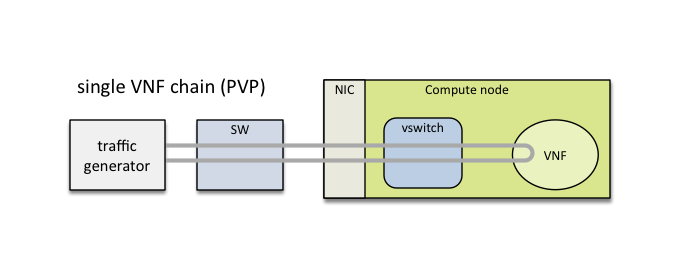

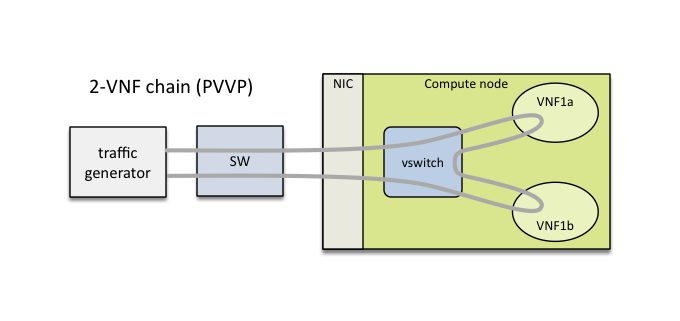

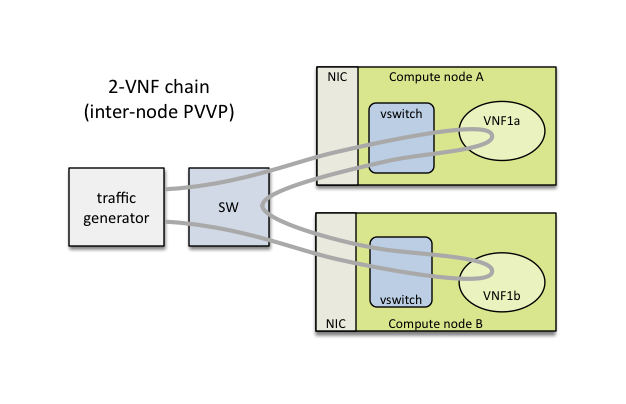

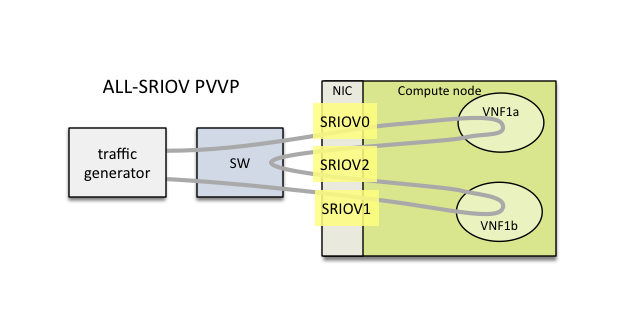

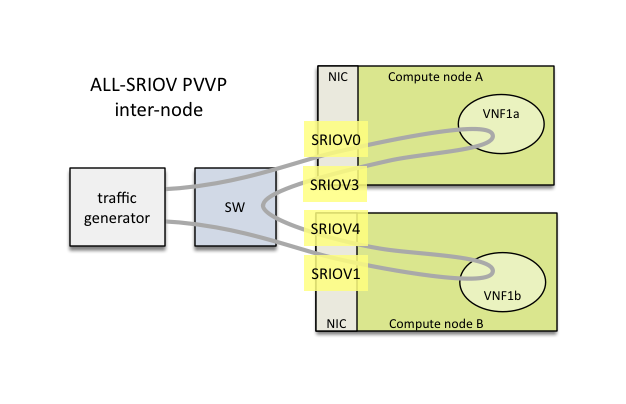

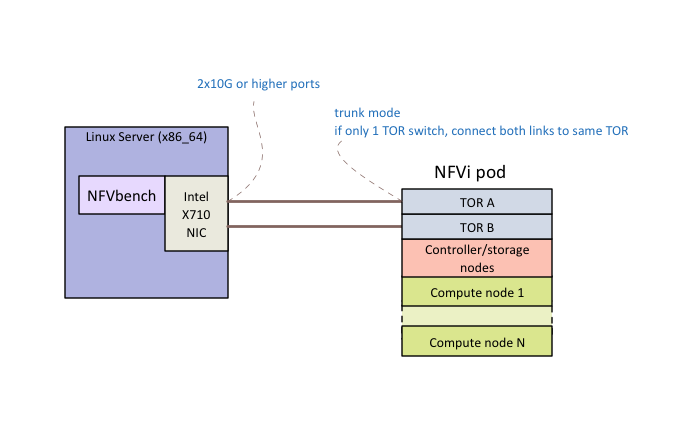

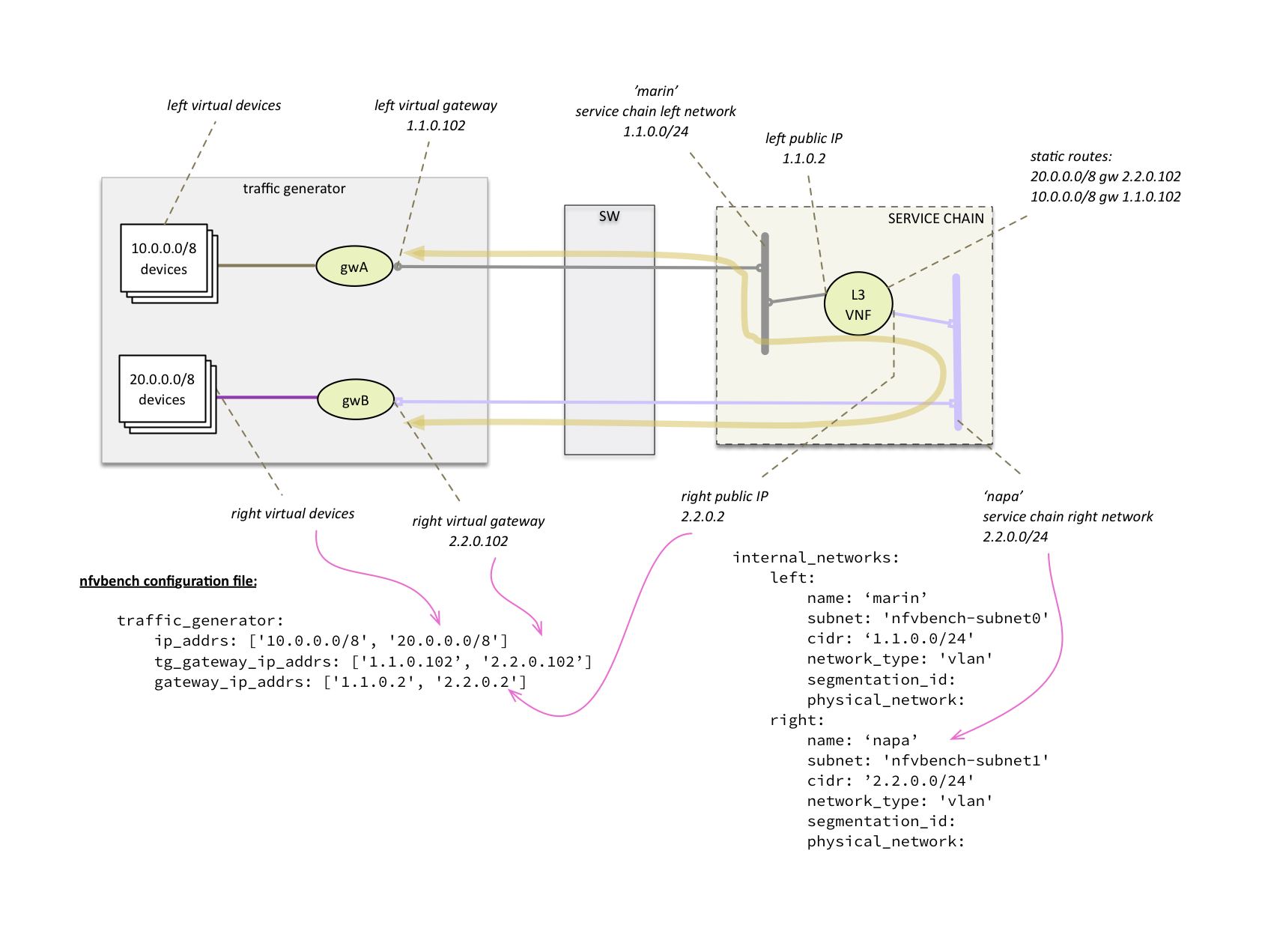

NFVbench is a lightweight end-to-end dataplane benchmarking framework project. It includes traffic generator(s) and measures a number of packet performance related metrics.

QTIP boils down NFVI compute and storage performance into one single metric for easy comparison. QTIP crunches these numbers based on five different categories of compute metrics and relies on Storperf for storage metrics.

Storperf measures the performance of external block storage. The goal of this project is to provide a report based on SNIA’s (Storage Networking Industry Association) Performance Test Specification.

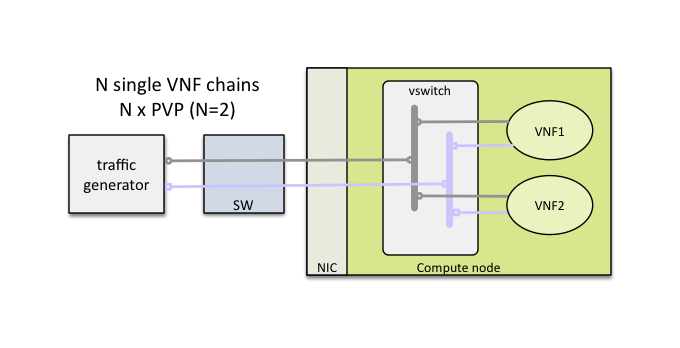

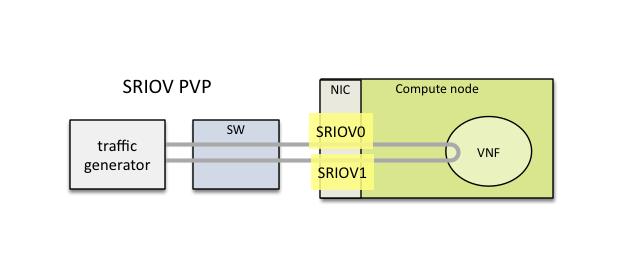

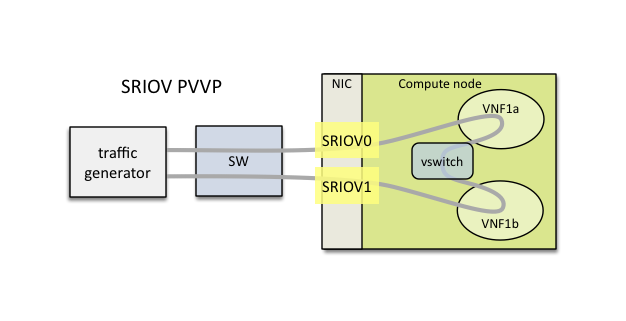

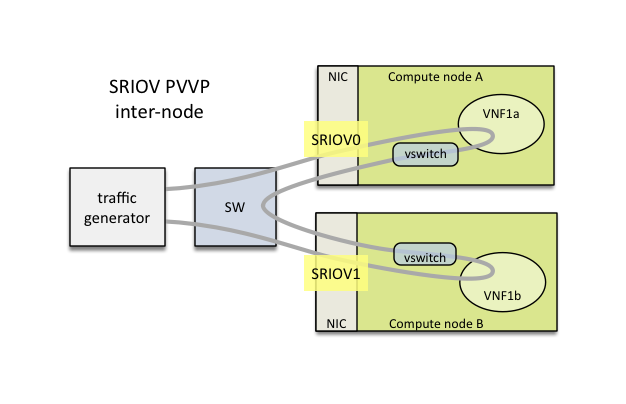

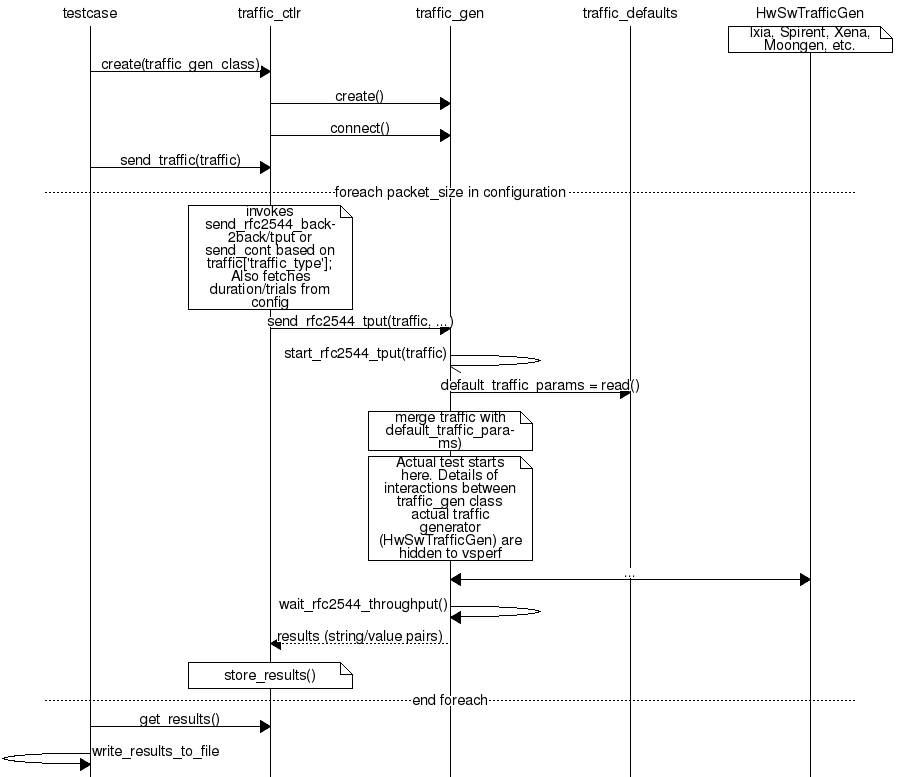

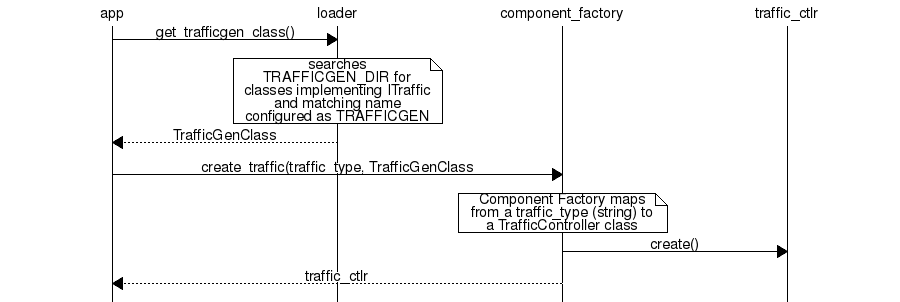

VSPERF provides an automated test-framework and comprehensive test suite for measuring data-plane performance of the NFVI including switching technology, physical and virtual network interfaces. The provided test cases with network topologies can be customized while also allowing individual versions of Operating System, vSwitch and hypervisor to be specified.

This is an overview document for the installation of the Gambia release of OPNFV.

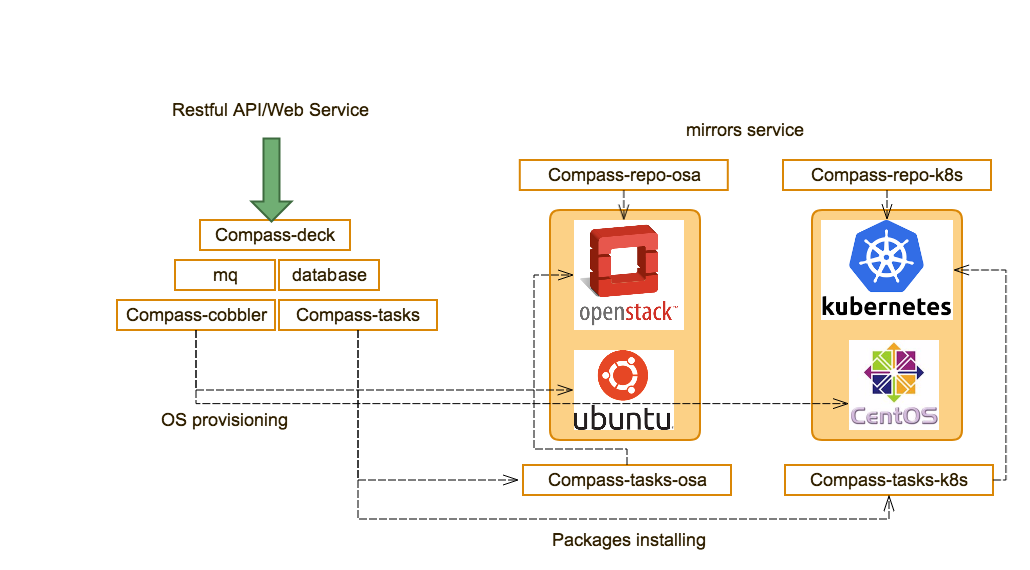

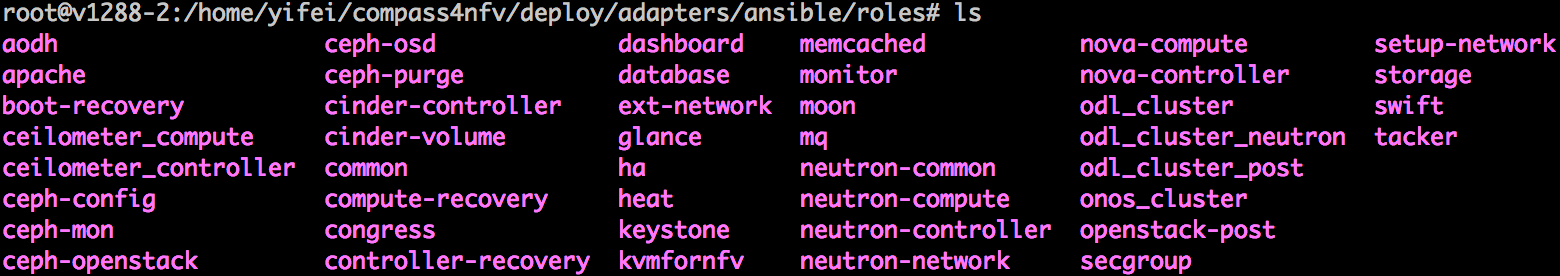

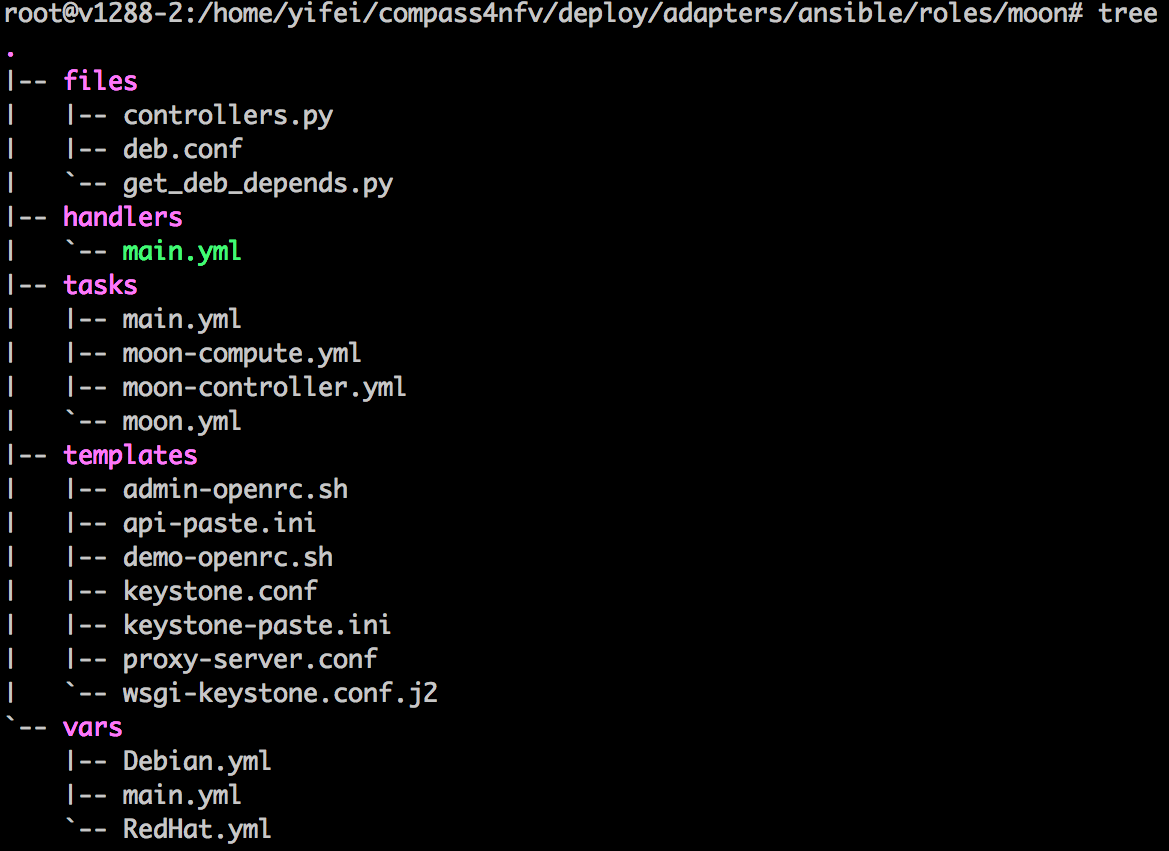

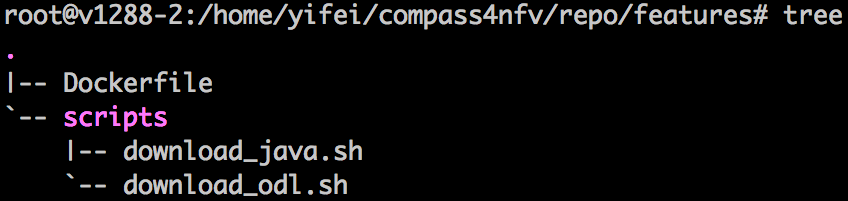

The Gambia release can be installed making use of any of the installer projects in OPNFV: Apex, Compass4Nfv or Fuel. Each installer provides the ability to install a common OPNFV platform as well as integrating additional features delivered through a variety of scenarios by the OPNFV community.

The OPNFV platform is comprised of a variety of upstream components that may be deployed on your infrastructure. A composition of components, tools and configurations is identified in OPNFV as a deployment scenario.

The various OPNFV scenarios provide unique features and capabilities that you may want to leverage, and it is important to understand your required target platform capabilities before installing and configuring your scenarios.

An OPNFV installation requires either a physical infrastructure environment as defined in the Pharos specification, or a virtual one. When configuring a physical infrastructure it is strongly advised to follow the Pharos configuration guidelines.

OPNFV scenarios are designed to host virtualised network functions (VNFs) in a variety of deployment architectures and locations. Each scenario provides specific capabilities and/or components aimed at solving specific problems for the deployment of VNFs.

A scenario may, for instance, include components such as OpenStack, OpenDaylight, OVS, KVM etc., where each scenario will include different source components or configurations.

To learn more about the scenarios supported in the Fraser release refer to the scenario description documents provided:

Detailed step by step instructions for working with an installation toolchain and installing the required scenario are provided by the installation projects. The projects providing installation support for the OPNFV Gambia release are: Apex, Compass4nfv and Fuel.

The instructions for each toolchain can be found in these links:

If you have elected to install the OPNFV platform using the deployment toolchain provided by OPNFV, your system will have been validated once the installation is completed. The basic deployment validation only addresses a small part of the capabilities in the platform and you may want to execute more exhaustive tests. Some investigation will be required to select the right test suites to run on your platform.

Many of the OPNFV test projects provide user-guide documentation and installation instructions in this document.

OPNFV is a collaborative project aimed at providing a variety of virtualisation deployments intended to host applications serving the networking and carrier industries. This document provides guidance and instructions for using platform features designed to support these applications that are made available in the OPNFV Gambia release.

This document is not intended to replace or replicate documentation from other upstream open source projects such as KVM, OpenDaylight, OpenStack, etc., but to highlight the features and capabilities delivered through the OPNFV project.

OPNFV provides a suite of scenarios, infrastructure deployment options, which are able to be installed to host virtualised network functions (VNFs). This document intends to help users of the platform leverage the features and capabilities delivered by OPNFV.

OPNFV’s Continuous Integration builds, deploys and tests combinations of virtual infrastructure components in what are defined as scenarios. A scenario may include components such as KVM, OpenDaylight, OpenStack, OVS, etc., where each scenario will include different source components or configurations. Scenarios are designed to enable specific features and capabilities in the platform that can be leveraged by the OPNFV user community.

The following links outline the feature deliverables from participating OPNFV projects in the Gambia release. Each of the participating projects provides detailed descriptions about the delivered features including use cases, implementation, and configuration specifics.

The following Configuration Guides and User Guides assume that the reader already has some knowledge about a given project’s specifics and deliverables. These Guides are intended to be used following the installation with an OPNFV installer to allow users to deploy and implement features delivered by OPNFV.

If you are unsure about the specifics of a given project, please refer to the OPNFV wiki page at http://wiki.opnfv.org for more details.

Testing is one of the key activities in OPNFV and includes unit, feature, component, system level testing for development, automated deployment, performance characterization and stress testing.

Test projects are dedicated to providing frameworks, tooling and test cases categorized as functional, performance or compliance testing. Test projects fulfill different roles such as verifying VIM functionality, benchmarking components and platforms, or analysing measured KPIs for OPNFV release scenarios.

Feature projects also provide their own test suites that either run independently or within a test project.

This document details the OPNFV testing ecosystem, describes common test components used by individual OPNFV projects and provides links to project specific documentation.

The OPNFV testing projects are represented in the following diagram:

The major testing projects are described in the table below:

| Project | Description |
|---|---|
| Bottlenecks | This project aims to find system bottlenecks by testing and verifying OPNFV infrastructure in a staging environment before committing it to a production environment. Instead of debugging a deployment in a production environment, the project adopts an automated method for executing benchmarks that validate the deployment during staging. This project forms a staging framework to find bottlenecks and to analyse the OPNFV infrastructure. |
| CPerf | SDN Controller benchmarks and performance testing, applicable to controllers in general. Collaboration of upstream controller testing experts, external test tool developers and the standards community. Primarily contributes to upstream/external tooling, then adds jobs to run those tools on OPNFV’s infrastructure. |
| Dovetail | This project intends to define and provide a set of OPNFV related validation criteria/tests that will provide input for the OPNFV Compliance Verification Program. The Dovetail project is executed with the guidance and oversight of the Compliance and Certification (C&C) committee and works to secure the goals of the C&C committee for each release. The project intends to incrementally define qualification criteria that establish the foundations of how one is able to measure the ability to utilize the OPNFV platform, how the platform itself should behave, and how applications may be deployed on the platform. |
| Functest | This project deals with the functional testing of the VIM and NFVI. It leverages several upstream test suites (OpenStack, ODL, ONOS, etc.) and can be used by feature projects to launch feature test suites in CI/CD. The project is used for scenario validation. |
| NFVbench | NFVbench is a compact and self contained data plane performance measurement tool for OpenStack based NFVi platforms. It is agnostic of the NFVi distribution, Neutron networking implementation and hardware. It runs on any Linux server with a DPDK compliant NIC connected to the NFVi platform data plane and bundles a highly efficient software traffic generator. Provides a fully automated measurement of most common packet paths at any level of scale and load using RFC-2544. Available as a Docker container with simple command line and REST interfaces. Easy to use as it takes care of most of the guesswork generally associated with data plane benchmarking. Can run in any lab or in production environments. |
| QTIP | QTIP, as the project for “Platform Performance Benchmarking” in OPNFV, aims to provide users with a simple indicator for performance, supported by comprehensive testing data and a transparent calculation formula. It provides a platform with common services for performance benchmarking which helps users to build indicators by themselves with ease. |
| StorPerf | The purpose of this project is to provide a tool to measure block and object storage performance in an NFVI. When complemented with a characterization of typical VF storage performance requirements, it can provide pass/fail thresholds for test, staging, and production NFVI environments. |
| VSPERF | VSPERF is an OPNFV project that provides an automated test-framework and comprehensive test suite based on Industry Test Specifications for measuring NFVI data-plane performance. The data-path includes switching technologies with physical and virtual network interfaces. The VSPERF architecture is switch and traffic generator agnostic and test cases can be easily customized. Software versions and configurations including the vSwitch (OVS or VPP) as well as the network topology are controlled by VSPERF (independent of OpenStack). VSPERF is used as a development tool for optimizing switching technologies, qualification of packet processing components and for pre-deployment evaluation of the NFV platform data-path. |
| Yardstick | The goal of the project is to verify the infrastructure compliance when running VNF applications. NFV Use Cases described in ETSI GS NFV 001 show a large variety of applications, each defining specific requirements and complex configuration on the underlying infrastructure and test tools. The Yardstick concept decomposes typical VNF work-load performance metrics into a number of characteristics/performance vectors, each of which can be represented by distinct test cases. |

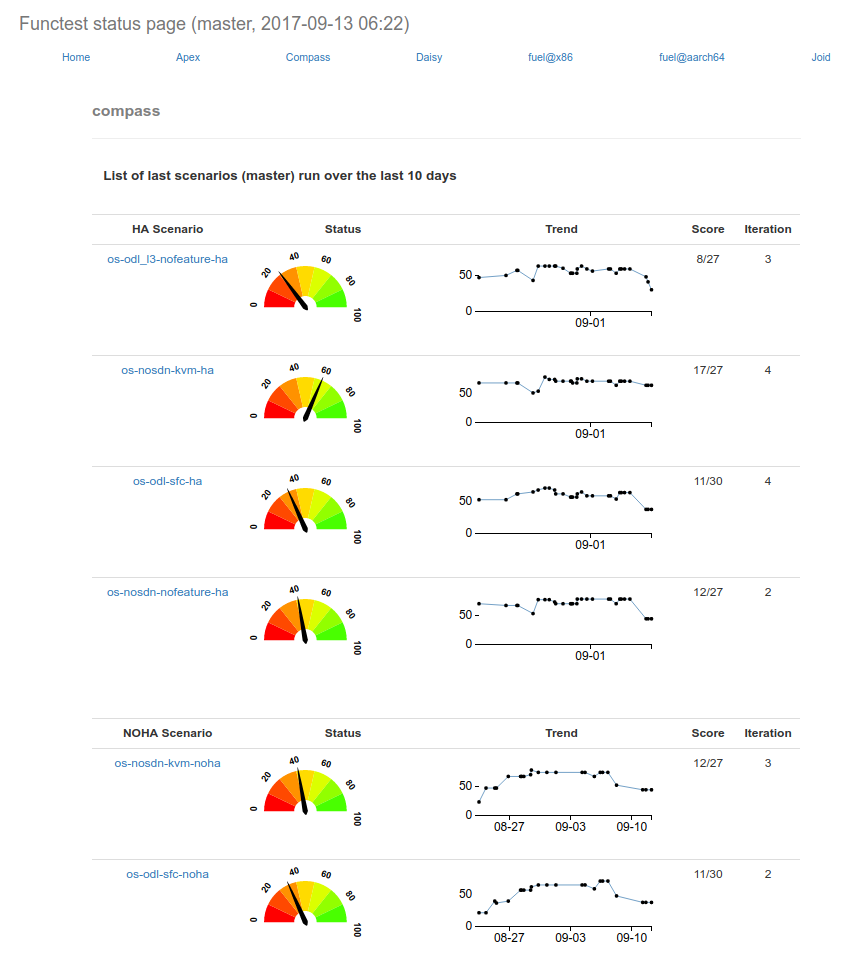

Any test project that runs in the global OPNFV lab infrastructure and is integrated with OPNFV CI can push test results to the community Test Database using a common Test API. This database can be used to track the evolution of testing and analyse test runs to compare results across installers, scenarios and between technically and geographically diverse hardware environments.

Results from the database are used to generate a dashboard with the current test status for each testing project. Please note that you can also deploy the Test Database and Test API locally in your own environment.

The management of test results can be summarized as follows:

    +-------------+    +-------------+    +-------------+
    |             |    |             |    |             |
    |   Test      |    |   Test      |    |   Test      |
    | Project #1  |    | Project #2  |    | Project #N  |
    |             |    |             |    |             |
    +-------------+    +-------------+    +-------------+
           |                  |                  |
           V                  V                  V
       +---------------------------------------------+
       |                                             |
       |           Test Rest API front end           |
       |      http://testresults.opnfv.org/test      |
       |                                             |
       +---------------------------------------------+
           ^                  |                  ^
           |                  V                  |
           |    +-------------------------+      |
           |    |                         |      |
           |    |    Test Results DB      |      |
           |    |        Mongo DB         |      |
           |    |                         |      |
           |    +-------------------------+      |
           |                                     |
           |                                     |
    +----------------------+     +----------------------+
    |                      |     |                      |
    |  Testing Dashboards  |     |  Test Landing page   |
    |                      |     |                      |
    +----------------------+     +----------------------+

A Mongo DB Database was introduced for the Brahmaputra release. The following collections are declared in this database:

- pods: the list of pods used for production CI

- projects: the list of projects providing test cases

- test cases: the test cases related to a given project

- results: the results of the test cases

- scenarios: the OPNFV scenarios tested in CI

This database can be used by any project through the Test API. Please note that projects may also use additional databases. The Test Database is mainly used to collect CI test results and generate scenario trust indicators. The Test Database is also cloned for OPNFV Plugfests in order to provide a private datastore only accessible to Plugfest participants.

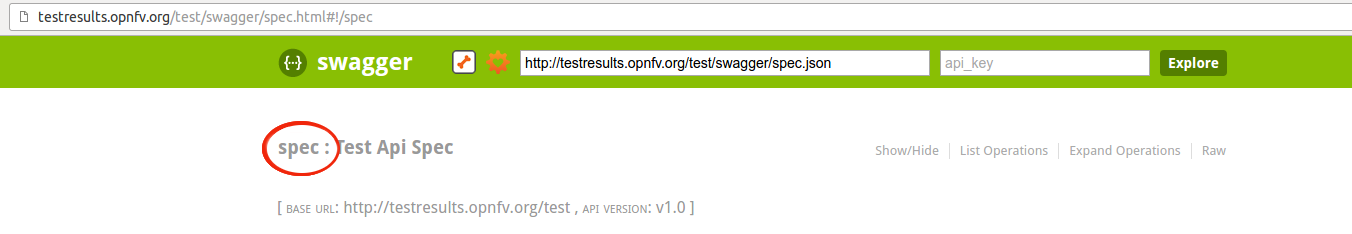

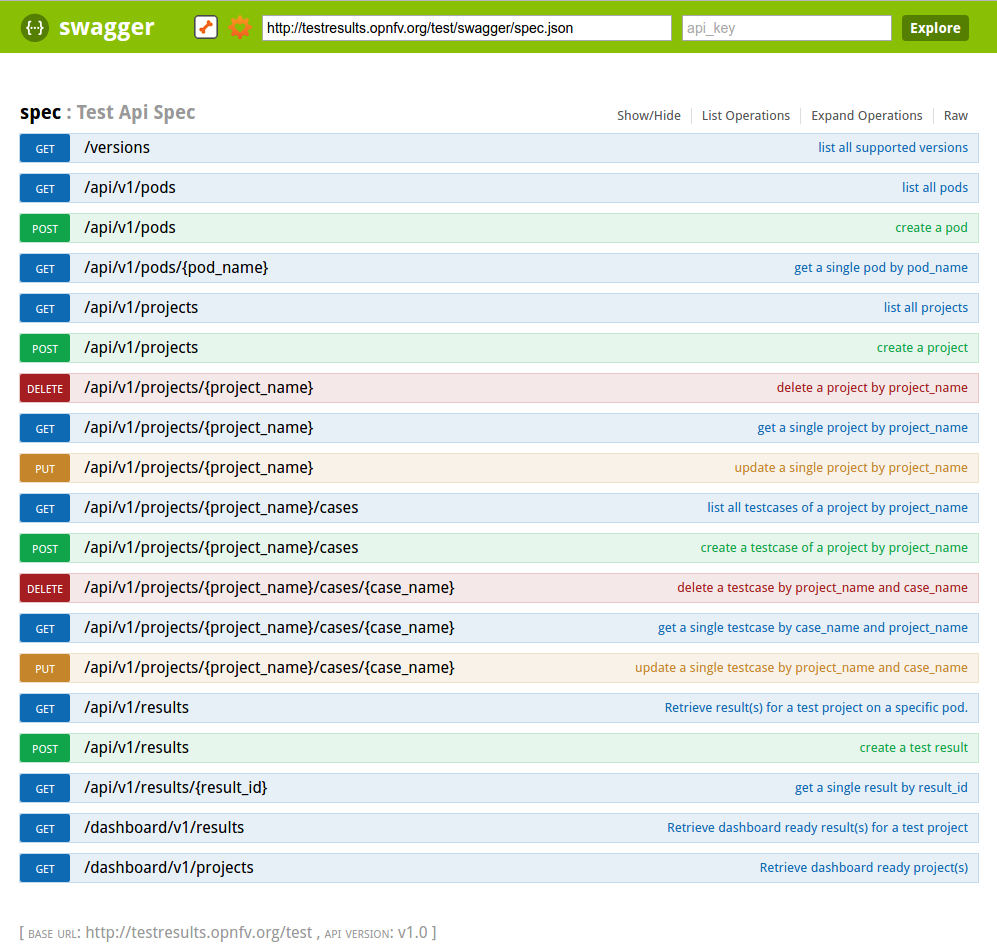

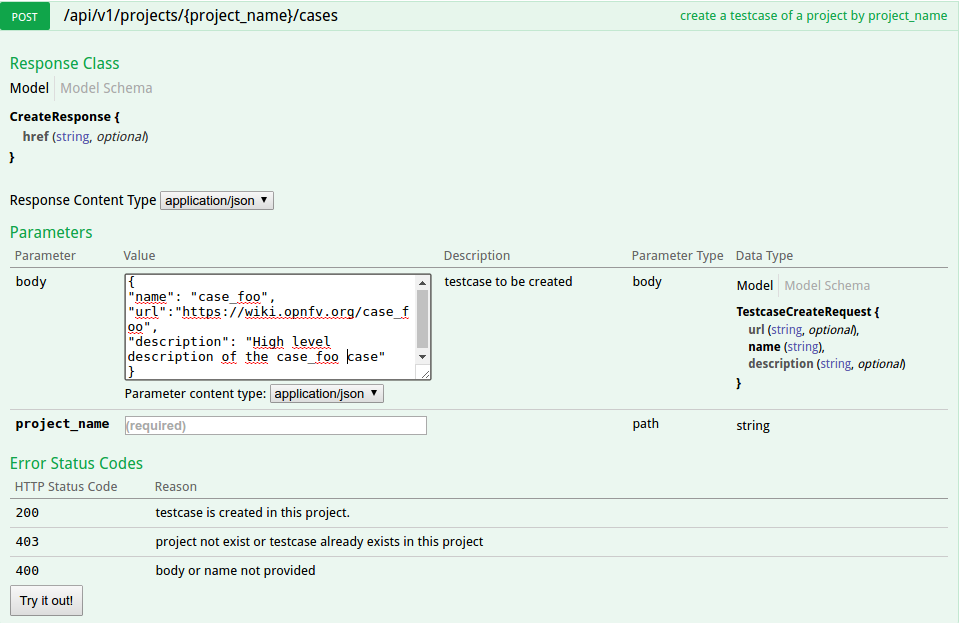

The Test API is used to declare pods, projects, test cases and test results. Pods correspond to a cluster of machines (3 controller and 2 compute nodes in HA mode) used to run the tests and are defined in the Pharos project. The results pushed to the database are related to pods, projects and test cases. Trying to push results generated from a non-referenced pod causes the Test API to return an error message.

For detailed information, please go to http://artifacts.opnfv.org/releng/docs/testapi.html
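The rule that results must refer to declared pods, projects and test cases can be illustrated with a small in-memory model. This is a sketch of the idea only: the class, method and exception names are hypothetical and do not reflect the actual Test API implementation or its MongoDB schema.

```python
class UnknownReferenceError(Exception):
    """Raised when a result points at an undeclared pod, project or test case."""


class ResultStore:
    """Hypothetical in-memory stand-in for the Test Database collections."""

    def __init__(self):
        self.pods = set()
        self.projects = set()
        self.cases = set()   # (project, case) pairs
        self.results = []

    def declare_pod(self, name):
        self.pods.add(name)

    def declare_project(self, name):
        self.projects.add(name)

    def declare_case(self, project, case):
        if project not in self.projects:
            raise UnknownReferenceError("unknown project: %s" % project)
        self.cases.add((project, case))

    def push_result(self, pod, project, case, details):
        # Reject results that do not reference a declared pod or test case.
        if pod not in self.pods:
            raise UnknownReferenceError("unknown pod: %s" % pod)
        if (project, case) not in self.cases:
            raise UnknownReferenceError("unknown case: %s/%s" % (project, case))
        self.results.append(
            {"pod": pod, "project": project, "case": case, "details": details}
        )
```

In this model, pushing a result for a pod that was never declared raises `UnknownReferenceError`, mirroring the error message returned by the Test API.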

The code of the Test API is hosted in the releng-testresults repository [TST2]. The static documentation of the Test API can be found at [TST3]. The Test API has been dockerized and may be installed locally in your lab.

The deployment of the Test API has been automated. A Jenkins job manages:

- the unit tests of the Test API

- the creation of a new docker file

- the deployment of the new Test API

- the archive of the old Test API

- the backup of the Mongo DB

PUT/DELETE/POST operations of the TestAPI now require token based authorization. The token needs to be added in the request using a header ‘X-Auth-Token’ for access to the database.

For example:

headers['X-Auth-Token']

The value of the header, i.e. the token, can be accessed in the Jenkins environment variable TestApiToken. The token value is added as a masked password.

headers['X-Auth-Token'] = os.environ.get('TestApiToken')

The above example is in Python. Token based authentication has been added so that only CI pods running Jenkins jobs can access the database. Please note that currently token authorization is implemented but is not yet enabled.
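As a slightly fuller sketch of the header handling above, the following builds (but does not send) an authenticated POST request using only the Python standard library. The `X-Auth-Token` header and the `TestApiToken` variable come from the text; the `/results` resource path, the function name and the payload are assumptions made for illustration.

```python
import json
import os
import urllib.request

# The "/test/api/v1" prefix appears elsewhere in this document;
# the "/results" resource is an assumption for this example.
RESULTS_URL = "http://testresults.opnfv.org/test/api/v1/results"


def build_result_request(payload, url=RESULTS_URL):
    """Build, without sending, a POST request carrying the auth token."""
    headers = {"Content-Type": "application/json"}
    token = os.environ.get("TestApiToken")
    if token:
        # Only set the header when Jenkins has injected the token.
        headers["X-Auth-Token"] = token
    data = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(url, data=data, headers=headers, method="POST")
```

The returned object can be passed to `urllib.request.urlopen` from a CI job; outside Jenkins, where `TestApiToken` is not set, the request is simply built without the header.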

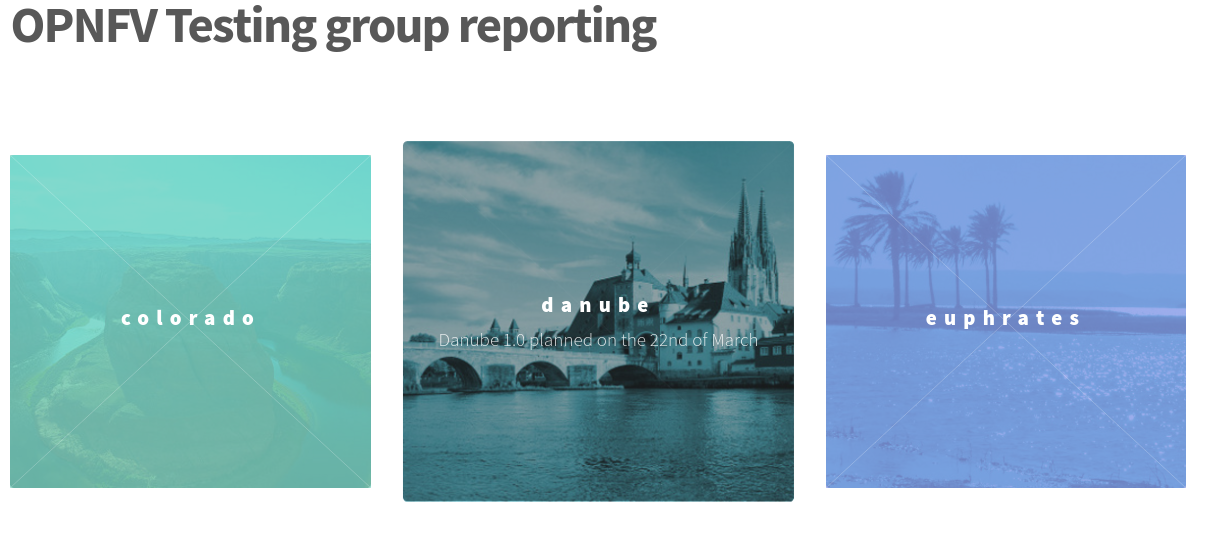

The reporting page for the test projects is http://testresults.opnfv.org/reporting/

This page provides reporting per OPNFV release and per testing project.

An evolution of the reporting page is planned to unify test reporting by creating a landing page that shows the scenario status in one glance (this information was previously consolidated manually on a wiki page). The landing page will be displayed per scenario and show:

- the status of the deployment

- the score from each test suite. There is no overall score; it is determined by each test project.

- a trust indicator

Until the Colorado release, each testing project managed the list of its test cases. This made it very hard to have a global view of the available test cases from the different test projects. A common view was possible through the API but it was not very user friendly. Test cases per project may be listed by calling:

http://testresults.opnfv.org/test/api/v1/projects/<project_name>/cases

with project_name: bottlenecks, functest, qtip, storperf, vsperf, yardstick
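A small helper can build this URL for a given project. The sketch below only composes the address; the helper name is hypothetical, the project list is the one given above (it may not be exhaustive), and the format of the response is not described here.

```python
from urllib.parse import quote

BASE_URL = "http://testresults.opnfv.org/test/api/v1"
# Project names listed in the text above.
KNOWN_PROJECTS = ("bottlenecks", "functest", "qtip", "storperf", "vsperf", "yardstick")


def cases_url(project_name):
    """Return the URL that lists the test cases of one project."""
    if project_name not in KNOWN_PROJECTS:
        raise ValueError("unknown project: %s" % project_name)
    return "%s/projects/%s/cases" % (BASE_URL, quote(project_name))
```

For instance, `cases_url("functest")` yields the address of the Functest test case list, which can then be fetched with any HTTP client.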

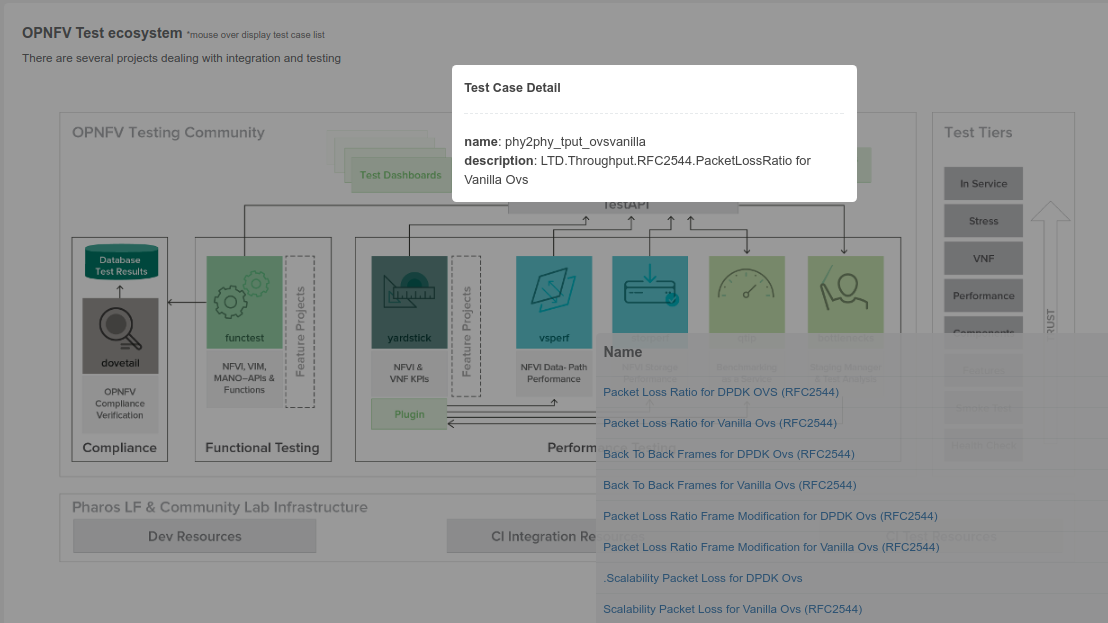

A test case catalog has now been realized [TST4]. Roll over the project then click to get the list of test cases, and then click on the case to get more details.

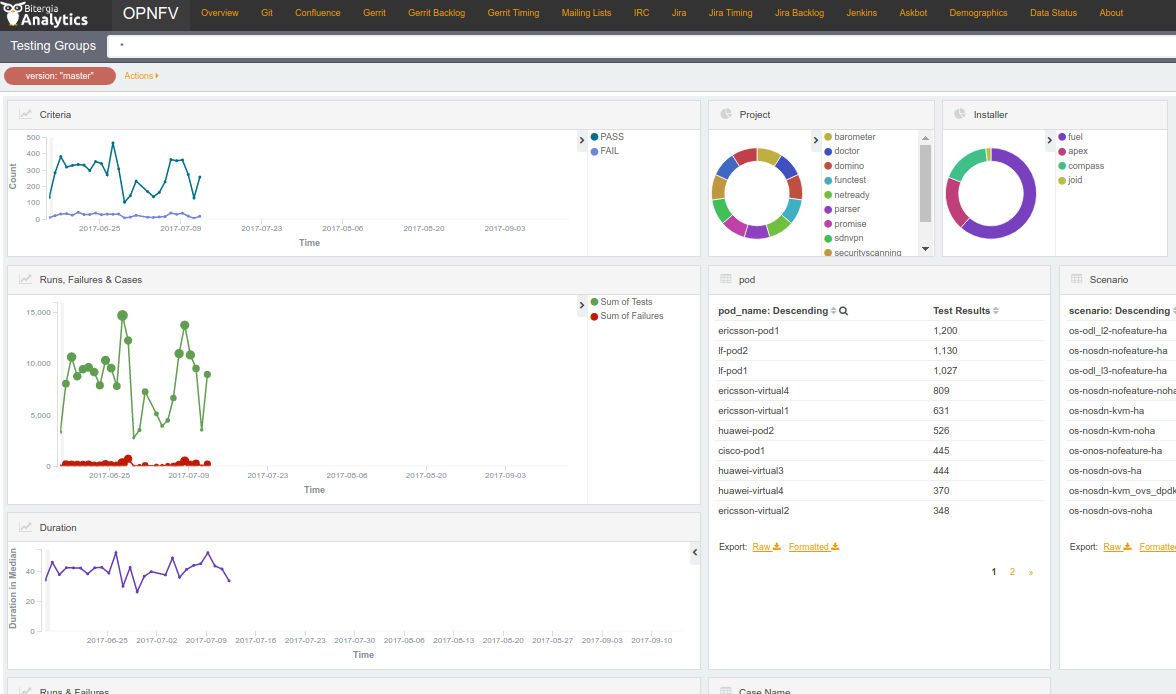

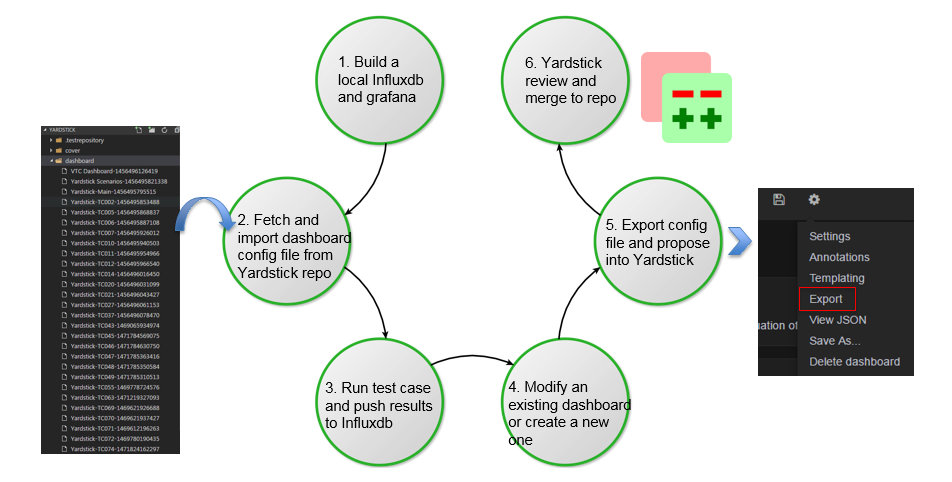

The Test Dashboard is used to provide a consistent view of the results collected in CI. The results shown on the dashboard are post processed from the Database, which only contains raw results. The dashboard can be used in addition to the reporting page (high level view) to allow the creation of specific graphs according to what the test owner wants to show.
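To illustrate what post-processing raw results can mean, the sketch below derives a pass rate per scenario from a list of raw result records. The record fields (`scenario`, `criteria`) and the `"PASS"` value are assumptions chosen for the example; they are not taken from the dashboard code.

```python
from collections import defaultdict


def pass_rate_by_scenario(raw_results):
    """Aggregate raw results into {scenario: fraction of passed runs}.

    Each record is assumed to be a dict with hypothetical "scenario"
    and "criteria" keys.
    """
    counters = defaultdict(lambda: [0, 0])  # scenario -> [passed, total]
    for result in raw_results:
        entry = counters[result["scenario"]]
        entry[1] += 1
        if result["criteria"] == "PASS":
            entry[0] += 1
    return {name: passed / total for name, (passed, total) in counters.items()}
```

A dashboard graph would then plot such derived values rather than the raw records themselves.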

In Brahmaputra, a basic dashboard was created in Functest. In Colorado, Yardstick used Grafana (time based graphs) and ELK (complex graphs). Since Danube, the OPNFV testing community decided to adopt the ELK framework and to use Bitergia for creating highly flexible dashboards [TST5].

Power consumption is a key driver for NFV. Just as end users want to know which applications consume the most power, and why their smartphone has to be plugged in every day, we want to know which VNFs are power-hungry.

Power consumption is hard to evaluate empirically. It is, however, possible to collect information and leverage the Pharos federation to try to detect some profiles/footprints. Thanks to CI, we know that we are running a known, deterministic list of cases. The idea is to correlate this knowledge with the power consumption in order to detect statistical biases.
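The correlation idea can be sketched as follows: given timestamped power samples and the time windows in which known CI cases ran, compute the mean power drawn during each case. The data layout and function name are assumptions made for this illustration; the actual energy recorder stores its data in a time-series database.

```python
from statistics import mean


def mean_power_by_scenario(samples, runs):
    """Average the power samples that fall inside each scenario run.

    samples: list of (timestamp, watts) tuples
    runs:    list of (scenario, start, stop) tuples
    Returns {scenario: mean watts}; scenarios without samples are omitted.
    """
    readings = {}
    for scenario, start, stop in runs:
        for timestamp, watts in samples:
            if start <= timestamp <= stop:
                readings.setdefault(scenario, []).append(watts)
    return {name: mean(values) for name, values in readings.items()}
```

Comparing these averages across many CI runs and pods is one simple way to look for the profiles mentioned above.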

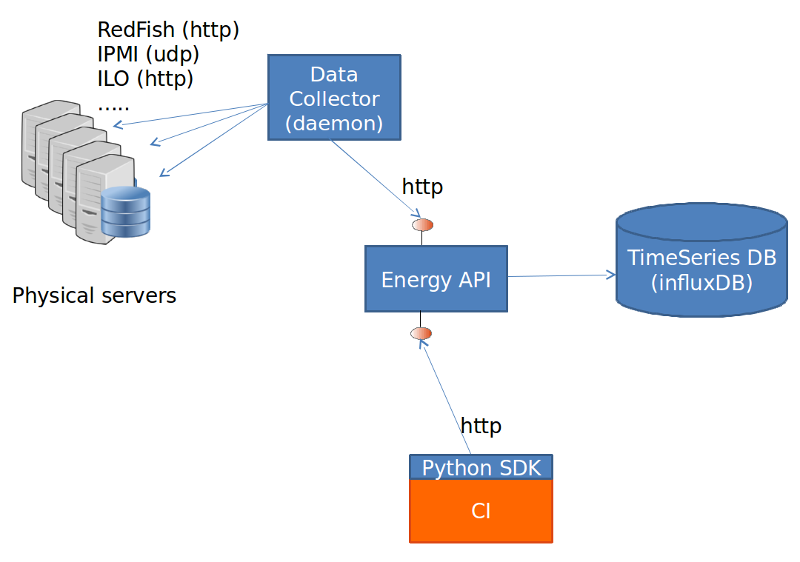

The energy recorder high level architecture may be described as follows:

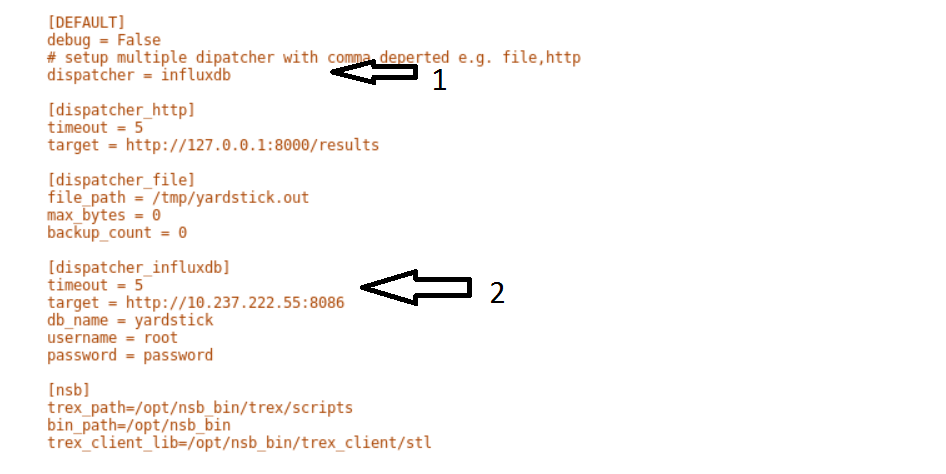

The energy monitoring system is based on three software components:

- Power info collector: poll server to collect instantaneous power consumption information

- Energy recording API + influxdb: on one side it receives server consumption data and on the other, scenario notifications. It is then able to establish the correlation between consumption and scenario and stores it in a time-series database (influxdb)

- Python SDK: a Python SDK using decorators to send notifications to the Energy recording API from test case scenarios
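The decorator approach used by the Python SDK can be illustrated with a minimal sketch. This is not the SDK's actual API: the decorator name, its parameters and the `notify` callback (standing in for the HTTP call to the Energy recording API) are all hypothetical.

```python
import functools


def energy_recorded(step_name, notify):
    """Hypothetical decorator: signal the start and stop of a test step."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            notify("start", step_name)
            try:
                return func(*args, **kwargs)
            finally:
                # Send the stop notification even if the test case raises.
                notify("stop", step_name)
        return wrapper
    return decorator


# Example usage with an in-memory list in place of the remote API.
events = []


@energy_recorded("tempest", lambda kind, step: events.append((kind, step)))
def run_tempest():
    return "done"
```

Calling `run_tempest()` records a start and a stop event around the test, which is what lets the recording API line up power samples with scenario steps.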

The power info collector collects instantaneous power consumption information and sends it to the Event API, which is in charge of storing the data. The collector uses different connectors to read the power consumption on remote servers:

- IPMI: this is the basic method and is manufacturer dependent. Depending on the manufacturer, the refresh delay may vary (generally from 10 to 30 seconds).

- Redfish: Redfish is an industry RESTful API for hardware management. Unfortunately, it is not yet supported by many suppliers.

- ILO: HP RESTful API. This connector supports both versions 2.1 and 2.4 of HP-ILO.
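The pluggable-connector design can be sketched as a common interface that the collector polls, regardless of the protocol behind it. All class and function names below are hypothetical, and the `StaticConnector` returns canned values where a real connector would query IPMI, Redfish or ILO.

```python
import time
from abc import ABC, abstractmethod


class PowerConnector(ABC):
    """Hypothetical common interface for the protocol-specific connectors."""

    @abstractmethod
    def read_watts(self, server):
        """Return the instantaneous power draw of one server, in watts."""


class StaticConnector(PowerConnector):
    """Stand-in connector returning canned values instead of querying hardware."""

    def __init__(self, values):
        self.values = values

    def read_watts(self, server):
        return self.values[server]


def poll_once(connector, servers, clock=time.time):
    """Read every server once and return timestamped measurements."""
    timestamp = clock()
    return [
        {"server": server, "timestamp": timestamp, "watts": connector.read_watts(server)}
        for server in servers
    ]
```

A collector loop would call `poll_once` at the refresh interval supported by the hardware and forward the measurements to the recording API.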

IPMI is supported by at least:

- HP

- IBM

- Dell

- Nokia

- Advantech

- Lenovo

- Huawei

Redfish API has been successfully tested on:

- HP

- Dell

- Huawei (E9000 class servers used in OPNFV Community Labs are IPMI 2.0 compliant and use the Redfish login interface through browsers supporting JRE 1.7/1.8)

Several test campaigns done with a physical wattmeter showed that IPMI results were not very accurate but Redfish results were. So if Redfish is available, it is highly recommended to use it.

To run the server power consumption collector agent, you need to deploy a docker container locally on your infrastructure.

This container requires:

- Connectivity on the LAN where server administration services (ILO, iDRAC, IPMI, ...) are configured and IP access to the POD’s servers

- Outgoing HTTP access to the Event API (internet)

Build the image by typing:

curl -s https://raw.githubusercontent.com/bherard/energyrecorder/master/docker/server-collector.dockerfile|docker build -t energyrecorder/collector -

Create local folder on your host for logs and config files:

mkdir -p /etc/energyrecorder

mkdir -p /var/log/energyrecorder

In /etc/energyrecorder create a configuration for logging in a file named collector-logging.conf:

curl -s https://raw.githubusercontent.com/bherard/energyrecorder/master/server-collector/conf/collector-logging.conf.sample > /etc/energyrecorder/collector-logging.conf

Check the configuration in this file (folders, log levels, ...).

In /etc/energyrecorder create a configuration for the collector in a file named collector-settings.yaml:

curl -s https://raw.githubusercontent.com/bherard/energyrecorder/master/server-collector/conf/collector-settings.yaml.sample > /etc/energyrecorder/collector-settings.yaml

Define the “PODS” section and their “servers” section according to the environment to monitor. Note: The “environment” key should correspond to the pod name, as defined in the “NODE_NAME” environment variable by CI when running.

IMPORTANT NOTE: To apply a new configuration, you need to kill the running container and start a new one (see below).

To run the container, you have to map folders located on the host to folders in the container (config, logs):

docker run -d --name energy-collector --restart=always -v /etc/energyrecorder:/usr/local/energyrecorder/server-collector/conf -v /var/log/energyrecorder:/var/log/energyrecorder energyrecorder/collector

The Energy recording API is an event API used to insert contextual information when monitoring energy (e.g. start Functest, start Tempest, destroy VM, ...). It is associated with an influxDB that stores the power consumption measures. It is hosted on a shared environment with the following access points:

| Component | Connectivity |

|---|---|

| Energy recording API documentation | http://energy.opnfv.fr/resources/doc/ |

| influxDB (data) | http://energy.opnfv.fr:8086 |
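For readers who want to pull measurements out of the influxDB endpoint listed above, the following sketch builds a query URL. It assumes the instance exposes the InfluxDB 1.x HTTP API, where `/query` accepts `db` and `q` parameters; the database name `energy_db` and the measurement `power` are hypothetical placeholders, and the source does not state whether the shared instance requires credentials.

```python
from urllib.parse import parse_qs, urlencode, urlparse


def influx_query_url(base_url, database, query):
    """Build an InfluxDB 1.x style /query URL (no request is sent)."""
    params = urlencode({"db": database, "q": query})
    return f"{base_url.rstrip('/')}/query?{params}"


# Hypothetical database and measurement names, for illustration only.
url = influx_query_url(
    "http://energy.opnfv.fr:8086",
    "energy_db",
    "SELECT * FROM power LIMIT 5",
)
parsed = urlparse(url)
print(parsed.path, parse_qs(parsed.query))
```

Building the URL separately from sending the request makes it easy to check the encoding before pointing any HTTP client at the shared environment.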

If needed, you can also host your own version of the Energy recording API (in that case, the Python SDK may require a settings update). If you plan to use the default shared API, the following steps are not required.

First, you need to build an image:

curl -s https://raw.githubusercontent.com/bherard/energyrecorder/master/docker/recording-api.dockerfile|docker build -t energyrecorder/api -

Create local folders on your host for logs and config files:

mkdir -p /etc/energyrecorder

mkdir -p /var/log/energyrecorder

mkdir -p /var/lib/influxdb

In /etc/energyrecorder create a configuration for logging in a file named webapp-logging.conf:

curl -s https://raw.githubusercontent.com/bherard/energyrecorder/master/recording-api/conf/webapp-logging.conf.sample > /etc/energyrecorder/webapp-logging.conf

Check the configuration in this file (folders, log levels, ...).

In /etc/energyrecorder create a configuration for the API in a file named webapp-settings.yaml:

curl -s https://raw.githubusercontent.com/bherard/energyrecorder/master/recording-api/conf/webapp-settings.yaml.sample > /etc/energyrecorder/webapp-settings.yaml

Normally the included configuration is ready to use, except for the influx username/password (see run-container.sh below). Use the admin user here.

IMPORTANT NOTE: To apply a new configuration, you need to kill the running container and start a new one (see below).

To run the container, you have to map folders located on the host to folders in the container (config, logs):

docker run -d --name energyrecorder-api -p 8086:8086 -p 8888:8888 -v /etc/energyrecorder:/usr/local/energyrecorder/web.py/conf -v /var/log/energyrecorder/:/var/log/energyrecorder -v /var/lib/influxdb:/var/lib/influxdb energyrecorder/api admin-influx-user-name admin-password readonly-influx-user-name user-password

with

| Parameter name | Description |
|---|---|
| admin-influx-user-name | Influx user with admin grants to create |
| admin-password | Influx password to set to admin user |
| readonly-influx-user-name | Influx user with readonly grants to create |
| user-password | Influx password to set to readonly user |

NOTE: The local folder /var/lib/influxdb is the location where influx data are stored. You may use any other location at your convenience. Just remember to define this mapping properly when running the container.

The Python SDK is almost non-intrusive: it is based on Python decorators that trigger calls to the event API.

It is currently hosted in Functest repo but if other projects adopt it, a dedicated project could be created and/or it could be hosted in Releng.

import the energy library:

import functest.energy.energy as energy

Notify that you want power recording in your testcase:

@energy.enable_recording
def run(self):
    self.do_some_stuff1()
    self.do_some_stuff2()

If you want to register additional steps during the scenarios, you can do it in 2 different ways.

Notify step on method definition:

@energy.set_step("step1")
def do_some_stuff1(self):
    ...

@energy.set_step("step2")
def do_some_stuff2(self):
    ...

Notify directly from code:

@energy.enable_recording
def run(self):
    Energy.set_step("step1")
    self.do_some_stuff1()
    ...
    Energy.set_step("step2")
    self.do_some_stuff2()
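To make the decorator mechanism concrete, here is a self-contained sketch of how such decorators can work. It is not Functest's implementation: the names mirror the SDK usage shown above, but the bodies are hypothetical and append to an in-memory `EVENTS` list where the real SDK would call the Energy recording API.

```python
import functools

EVENTS = []  # stand-in for notifications sent to the Energy recording API


def enable_recording(method):
    """Record a start event before the test and a stop event after it."""
    @functools.wraps(method)
    def wrapper(*args, **kwargs):
        EVENTS.append(("start", method.__name__))
        try:
            return method(*args, **kwargs)
        finally:
            # Stop is recorded even if the test raises.
            EVENTS.append(("stop", method.__name__))
    return wrapper


def set_step(step_name):
    """Record a named step each time the decorated method is called."""
    def decorator(method):
        @functools.wraps(method)
        def wrapper(*args, **kwargs):
            EVENTS.append(("step", step_name))
            return method(*args, **kwargs)
        return wrapper
    return decorator


class DemoTestCase:
    @enable_recording
    def run(self):
        self.do_some_stuff1()
        self.do_some_stuff2()

    @set_step("step1")
    def do_some_stuff1(self):
        pass

    @set_step("step2")
    def do_some_stuff2(self):
        pass


DemoTestCase().run()
print(EVENTS)
```

Because the notifications live in the decorators, the test methods themselves stay unchanged, which is what keeps this style of SDK nearly non-intrusive.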

Settings delivered in the project git are ready to use and assume that you will use the shared energy recording API. If you want to use another instance, you have to update the key “energy_recorder.api_url” in <FUNCTEST>/functest/ci/config_functest.yaml by setting the proper hostname/IP.

Here is an example of results coming from LF POD2. This sequence represents several CI runs in a row (0 power corresponds to a hard reboot of the servers).

You may connect to http://energy.opnfv.fr:3000 for more results (ask the infra team for credentials).

For more information or to participate in the OPNFV test community please see the following:

wiki: https://wiki.opnfv.org/testing

mailing list: test-wg@lists.opnfv.org

IRC channel: #opnfv-testperf

| Project | Documentation links |

|---|---|

| Bottlenecks | https://wiki.opnfv.org/display/bottlenecks/Bottlenecks |

| CPerf | https://wiki.opnfv.org/display/cperf |

| Dovetail | https://wiki.opnfv.org/display/dovetail |

| Functest | https://wiki.opnfv.org/display/functest/ |

| NFVbench | https://wiki.opnfv.org/display/nfvbench/ |

| QTIP | https://wiki.opnfv.org/display/qtip |

| StorPerf | https://wiki.opnfv.org/display/storperf/Storperf |

| VSPERF | https://wiki.opnfv.org/display/vsperf |

| Yardstick | https://wiki.opnfv.org/display/yardstick/Yardstick |


This page provides the links to the installation, configuration and user guides of the different test projects.

For each testsuite, you can set up either a teststory or a testcase to run certain tests. A teststory comprises several testcases as a set in one configuration file. You can call a teststory or a testcase by using the Bottlenecks user interfaces. Details are shown in the following section.

Brahmaputra:

Colorado:

Danube:

Euphrates:

These enhancements and test cases help end users gain a more comprehensive understanding of the SUT. Graphic reports of the system behavior, in addition to the test cases, are provided to indicate the confidence level of the SUT. The installer-agnostic testing framework allows end users to do stress testing adaptively over either Open Source or commercial deployments.

| Release | Integrated Installer | Supported Testsuite |
|---|---|---|
| Brahmaputra | Fuel | Rubbos, VSTF |
| Colorado | Compass | Rubbos, VSTF |
| Danube | Compass | POSCA |
| Euphrates | Any | POSCA |
| Fraser | Any | POSCA |
| Gambia | Any | POSCA, kubestone |

| Testsuite | No. | Test case |
|---|---|---|
| POSCA | 1 | posca_factor_ping |
| | 2 | posca_factor_system_bandwidth |
| | 3 | posca_factor_soak_throughputs |
| | 4 | posca_feature_vnf_scale_up |
| | 5 | posca_feature_vnf_scale_out |
| | 6 | posca_factor_storperf |
| | 7 | posca_factor_multistack_storage_parallel |
| | 8 | posca_factor_multistack_storage |
| | 9 | posca_feature_moon_resources |
| | 10 | posca_feature_moon_tenants |
| Kubestone | 1 | deployment_capacity |

For the test suites abandoned in previous Bottlenecks releases, please refer to http://docs.opnfv.org/en/stable-danube/submodules/bottlenecks/docs/testing/user/userguide/deprecated.html.

The POSCA (Parametric Bottlenecks Testing Catalogue) test suite classifies the bottlenecks test cases and results into 5 categories. The results are then analyzed and bottlenecks are searched for among these categories.

The POSCA testsuite aims to locate the bottlenecks in a parametric manner and to decouple the bottlenecks regarding the deployment requirements. The POSCA testsuite provides a user-friendly way to profile and understand the E2E system behavior and deployment requirements.

The detailed workflow is illustrated below.

[Since Euphrates release, the docker-compose package is not required.]

if [ -d /usr/local/bin/docker-compose ]; then
    rm -rf /usr/local/bin/docker-compose
fi

curl -L https://github.com/docker/compose/releases/download/1.11.0/docker-compose-`uname -s`-`uname -m` > /usr/local/bin/docker-compose

chmod +x /usr/local/bin/docker-compose

The test environment preparation, the installation of the testing tools, the execution of the tests and the reporting/analysis of the POSCA test suite are highly automated. A few steps are needed to run it locally.

In Euphrates, Bottlenecks has modified its framework to support installer-agnostic testing which means that test cases could be executed over different deployments.

mkdir /home/opnfv

cd /home/opnfv

git clone https://gerrit.opnfv.org/gerrit/bottlenecks

cd bottlenecks

. pre_virt_env.sh

Put the OpenStack RC file (admin_rc.sh), os_cacert and pod.yaml (pod description file) in the /tmp directory. Edit admin_rc.sh and add the following line:

export OS_CACERT=/tmp/os_cacert

If you have deployed your openstack environment by compass, you could use the following command to get the required files. As to Fuel, Apex and JOID installers, we only provide limited support now for retrieving the configuration/description files. If you find that the following command can not do the magic, you should put the required files in /tmp manually.

bash ./utils/env_prepare/config_prepare.sh -i <installer> [--debug]

Note that if the command above is executed, admin_rc.sh and pod.yaml will be created automatically in the /tmp folder, and the line export OS_CACERT=/tmp/os_cacert will be added to the admin_rc.sh file.

bottlenecks testcase|teststory run <testname>

For the testcase command, testname should be the same as the name of the test case configuration file located in testsuites/posca/testcase_cfg. For stress tests in Danube/Euphrates, testname should be replaced by either posca_factor_ping or posca_factor_system_bandwidth. For the teststory command, a user can specify the test cases to be executed by defining them in a teststory configuration file located in testsuites/posca/testsuite_story. There is also an example there named posca_factor_test.
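The naming rule above can be sketched as a small lookup helper. The two directories come from the text; the `.yaml` suffix and the function name are assumptions made for illustration, so check the repository for the actual file names.

```python
from pathlib import PurePosixPath

# Directories named in the text, relative to the bottlenecks checkout.
CONFIG_DIRS = {
    "testcase": PurePosixPath("testsuites/posca/testcase_cfg"),
    "teststory": PurePosixPath("testsuites/posca/testsuite_story"),
}


def config_path(kind, testname, suffix=".yaml"):
    """Return the expected configuration file for a testcase or teststory.

    The default suffix is an assumption, not confirmed by the source.
    """
    if kind not in CONFIG_DIRS:
        raise ValueError(f"kind must be one of {sorted(CONFIG_DIRS)}")
    return CONFIG_DIRS[kind] / f"{testname}{suffix}"


print(config_path("testcase", "posca_factor_ping"))
print(config_path("teststory", "posca_factor_test"))
```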

There are also 2 other ways to run test cases and test stories.

The first one is to use shell script.

bash run_tests.sh [-h|--help] -s <testsuite>|-c <testcase>

The second is to use python interpreter.

REPORT=False

opts="--privileged=true -id"

docker_volume="-v /var/run/docker.sock:/var/run/docker.sock -v /tmp:/tmp"

docker run $opts --name bottlenecks-load-master $docker_volume opnfv/bottlenecks:latest /bin/bash

sleep 5

POSCA_SCRIPT="/home/opnfv/bottlenecks/testsuites/posca"

docker exec bottlenecks-load-master python ${POSCA_SCRIPT}/../run_posca.py testcase|teststory <testname> ${REPORT}

Bottlenecks uses ELK to illustrate the testing results. Assuming the IP of the SUT (System Under Test) is denoted as ipaddr, the address of Kibana is http://[ipaddr]:5601. One can visit this address to see the illustrations. The address of elasticsearch is http://[ipaddr]:9200. One can use any REST tool to access the testing data stored in elasticsearch.
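A small helper can derive both addresses from the SUT IP. The ports are the ones given above; the function name and the example address (taken from the documentation range 192.0.2.0/24) are illustrative only.

```python
def elk_endpoints(ipaddr):
    """Return the Kibana and elasticsearch URLs for a given SUT address."""
    return {
        "kibana": f"http://{ipaddr}:5601",
        "elasticsearch": f"http://{ipaddr}:9200",
    }


endpoints = elk_endpoints("192.0.2.10")
print(endpoints["kibana"])
print(endpoints["elasticsearch"])
```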

. rm_virt_env.sh

If you want to clean up the docker containers that were established during the test, you can execute the additional command below.

bash run_tests.sh --cleanup

Note that you can also add the cleanup parameter when you run a test case. The environment will then be cleaned up automatically when the test completes.

POSCA test cases are now run by OPNFV CI. See https://build.opnfv.org for details of the building jobs. Each building job is set up to execute a single test case. The test results/logs will be printed on the web page and reported automatically to the community MongoDB. There are two ways to report the results.

bash run_tests.sh [-h|--help] -s <testsuite>|-c <testcase> --report

REPORT=True

opts="--privileged=true -id"

docker_volume="-v /var/run/docker.sock:/var/run/docker.sock -v /tmp:/tmp"

docker run $opts --name bottlenecks-load-master $docker_volume opnfv/bottlenecks:latest /bin/bash

sleep 5

REPORT="True"

POSCA_SCRIPT="/home/opnfv/bottlenecks/testsuites/posca"

docker exec bottlenecks-load-master python ${POSCA_SCRIPT}/../run_posca.py testcase|teststory <testname> ${REPORT}

This document provides an overview of the results of test cases developed by the OPNFV Bottlenecks Project, executed on OPNFV community labs.

The OPNFV CI (Continuous Integration) system provides automated build, deploy and testing for the software developed in OPNFV. Unless stated otherwise, the reported tests are automated via Jenkins jobs.

Test results are visible in the following dashboard:

| Bottlenecks POSCA Stress Test Traffic | |
|---|---|
| test case name | posca_factor_system_bandwidth |
| description | Stress test regarding baseline of the system for a single user, i.e., a VM pair while increasing the package size |
| configuration | stack number: 1 |
| test result | PKT loss rate, latency, throughput, cpu usage |

test_config:
  tool: netperf
  protocol: tcp
  test_time: 20
  tx_pkt_sizes: 64, 256, 1024, 4096, 8192, 16384, 32768, 65536
  rx_pkt_sizes: 64, 256, 1024, 4096, 8192, 16384, 32768, 65536
  cpu_load: 0.9
  latency: 100000
runner_config:
  dashboard: "y"
  dashboard_ip:
  stack_create: yardstick
  yardstick_test_ip:
  yardstick_test_dir: "samples"
  yardstick_testcase: "netperf_bottlenecks"
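The packet sizes in this configuration are written as comma-separated strings rather than YAML lists, so a consumer has to split them before use. The sketch below shows one way to do that; the helper name is hypothetical, and how Bottlenecks itself combines the tx and rx sizes is not stated here.

```python
def parse_sizes(value):
    """Turn a string such as '64, 256, 1024' into a list of integers."""
    return [int(item) for item in value.split(",") if item.strip()]


tx_pkt_sizes = parse_sizes("64, 256, 1024, 4096, 8192, 16384, 32768, 65536")
rx_pkt_sizes = parse_sizes("64, 256, 1024, 4096, 8192, 16384, 32768, 65536")
print(len(tx_pkt_sizes), tx_pkt_sizes[0], tx_pkt_sizes[-1])
```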

| Bottlenecks POSCA Stress Test Ping | |
|---|---|
| test case name | posca_factor_ping |
| description | Stress test regarding life-cycle while using ping to validate the VM pairs constructions |
| configuration | stack number: 5, 10, 20, 50 ... |
| test result | PKT loss rate, success rate, test time, latency |

load_manager:
  scenarios:
    tool: ping
    test_times: 100
    package_size:
    num_stack: 5, 5
    package_loss: 0
  contexts:
    stack_create: yardstick
    flavor:
    yardstick_test_ip:
    yardstick_test_dir: "samples"
    yardstick_testcase: "ping_bottlenecks"
dashboard:
  dashboard: "y"
  dashboard_ip:

| Bottlenecks POSCA Stress Test Storage | |
|---|---|
| test case name | posca_factor_storperf |
| description | Stress test regarding storage using Storperf |
| configuration | |
| test result | Read / Write IOPS, Throughput, latency |

load_manager:
  scenarios:
    tool: storperf

| Bottlenecks POSCA Stress Test MultiStack Storage | |
|---|---|
| test case name | posca_factor_multistack_storage |
| description | Stress test regarding multistack storage using yardstick as a runner |
| configuration | stack number: 5, 10, 20, 50 ... |
| test result | Read / Write IOPS, Throughput, latency |

load_manager:
  scenarios:
    tool: fio
    test_times: 10
    rw: write, read, rw, rr, randomrw
    bs: 4k
    size: 50g
    rwmixwrite: 50
    num_stack: 1, 3
    volume_num: 1
    numjobs: 1
    direct: 1
  contexts:
    stack_create: yardstick
    flavor:
    yardstick_test_ip:
    yardstick_test_dir: "samples"
    yardstick_testcase: "storage_bottlenecks"
dashboard:
  dashboard: "y"
  dashboard_ip:

| Bottlenecks POSCA Stress Test Storage (Multistack with Yardstick) | |
|---|---|
| test case name | posca_factor_multistack_storage_parallel |
| description | Stress test regarding storage while using yardstick for multistack as a runner |
| configuration | |
| test result | Read / Write IOPS, Throughput, latency |

load_manager:
  scenarios:
    tool: fio
    test_times: 10
    rw: write, read, rw, rr, randomrw
    bs: 4k
    size: 50g
    rwmixwrite: 50
    num_stack: 1, 3
    volume_num: 1
    numjobs: 1
    direct: 1
  contexts:
    stack_create: yardstick
    flavor:
    yardstick_test_ip:
    yardstick_test_dir: "samples"
    yardstick_testcase: "storage_bottlenecks"
dashboard:
  dashboard: "y"
  dashboard_ip:

| Bottlenecks POSCA Soak Test Throughputs | |
|---|---|
| test case name | posca_factor_soak_throughputs |
| description | Long duration stability tests of data-plane traffic |
| configuration | |
| test result | THROUGHPUT, THROUGHPUT_UNITS, MEAN_LATENCY, LOCAL_CPU_UTIL, REMOTE_CPU_UTIL, LOCAL_BYTES_SENT, REMOTE_BYTES_RECVD |

load_manager:
  scenarios:
    tool: netperf
    test_duration_hours: 1
    vim_pair_ttl: 300
    vim_pair_lazy_cre_delay: 2
    package_size:
    threshhold:
      package_loss: 0%
      latency: 300
  runners:
    stack_create: yardstick
    flavor:
    yardstick_test_dir: "samples"
    yardstick_testcase: "netperf_soak"
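The threshold block in this configuration suggests a pass/fail check on each measurement. The sketch below shows one plausible reading, in which a sample passes when both its loss and its latency stay at or below the configured limits. The comparison rule, the sample layout and the latency unit are assumptions, not taken from the Bottlenecks code.

```python
def parse_percent(text):
    """Convert a string such as '0%' into a fraction (0.0)."""
    return float(str(text).strip().rstrip("%")) / 100.0


def within_thresholds(sample, thresholds):
    """Return True if the sample respects both configured limits.

    `sample` is a hypothetical dict holding a loss fraction and a latency
    expressed in the same unit as the configured latency threshold.
    """
    loss_limit = parse_percent(thresholds["package_loss"])
    return (sample["package_loss"] <= loss_limit
            and sample["latency"] <= thresholds["latency"])


thresholds = {"package_loss": "0%", "latency": 300}
print(within_thresholds({"package_loss": 0.0, "latency": 120}, thresholds))
print(within_thresholds({"package_loss": 0.01, "latency": 120}, thresholds))
```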

| Bottlenecks POSCA Soak Test Throughputs | |
|---|---|
| test case name | posca_feature_moon_resources |
| description | Moon authentication capability test for maximum number of authentication operations per tenant |
| configuration | |
| test result | number of tenants, max number of users |

load_manager:
  scenarios:
    tool: https request
    # info that the cpus and memes have the same number of data.
    pdp_name: pdp
    policy_name: "MLS Policy example"
    model_name: MLS
    tenants: 1,5,10,20
    subject_number: 10
    object_number: 10
    timeout: 0.2
  runners:
    stack_create: yardstick
    Debug: False
    yardstick_test_dir: "samples"
    yardstick_testcase: "moon_resource"

| Bottlenecks POSCA Soak Test Throughputs | |
|---|---|
| test case name | posca_feature_moon_tenants |
| description | Moon authentication capability test for maximum tenants |
| configuration | |
| test result | Max number of tenants |

load_manager:
  scenarios:
    tool: https request
    # info that the cpus and memes have the same number of data.
    pdp_name: pdp
    policy_name: "MLS Policy example"
    model_name: MLS
    subject_number: 20
    object_number: 20
    timeout: 0.003
    initial_tenants: 0
    steps_tenants: 1
    tolerate_time: 20
    SLA: 5
  runners:
    stack_create: yardstick
    Debug: False
    yardstick_test_dir: "samples"
    yardstick_testcase: "moon_tenant"

| Bottlenecks POSCA Soak Test Throughputs | |
|---|---|
| test case name | posca_feature_nfv_scale_out |
| description | SampleVNF Scale Out Test |
| configuration | |
| test result | throughputs, latency, loss rate |

load_manager:
  scenarios:
    number_vnfs: 1, 2, 4
    iterations: 10
    interval: 35
  runners:
    stack_create: yardstick
    flavor:
    yardstick_test_dir: "samples/vnf_samples/nsut/acl"
    yardstick_testcase: "tc_heat_rfc2544_ipv4_1rule_1flow_64B_trex_correlated_traffic_scale_out"

| Bottlenecks Kubestone Deployment Capacity Test | |
|---|---|
| test case name | kubestone_deployment_capacity |
| description | Stress test regarding capacity of deployment |
| configuration | |
| test result | Capacity, Life-Cycle Duration, Available Deployments |

apiVersion: apps/v1
kind: Deployment
namespace: bottlenecks-kubestone
test_type: Horizontal-Scaling
scaling_steps: 10, 50, 100, 200
template: None
metadata:
  name: nginx-deployment
  labels:
    app: nginx
spec:
  replicas: 3
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: nginx
        image: nginx:1.7.9
        ports:
        - containerPort: 80
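One plausible reading of the `scaling_steps` entry is that the test re-applies the same Deployment with the replica count set to each step in turn. The sketch below generates those variants from a base manifest held as a Python dict. This interpretation and the helper names are assumptions; the kubestone implementation may proceed differently.

```python
import copy


def parse_steps(value):
    """Turn '10, 50, 100, 200' into [10, 50, 100, 200]."""
    return [int(item) for item in value.split(",")]


def manifests_for_steps(base_manifest, steps):
    """Return one copy of the manifest per step, with replicas overridden."""
    manifests = []
    for replicas in steps:
        manifest = copy.deepcopy(base_manifest)  # leave the base untouched
        manifest["spec"]["replicas"] = replicas
        manifests.append(manifest)
    return manifests


# Reduced version of the Deployment shown above.
base = {
    "apiVersion": "apps/v1",
    "kind": "Deployment",
    "metadata": {"name": "nginx-deployment"},
    "spec": {"replicas": 3},
}
variants = manifests_for_steps(base, parse_steps("10, 50, 100, 200"))
print([m["spec"]["replicas"] for m in variants])
```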

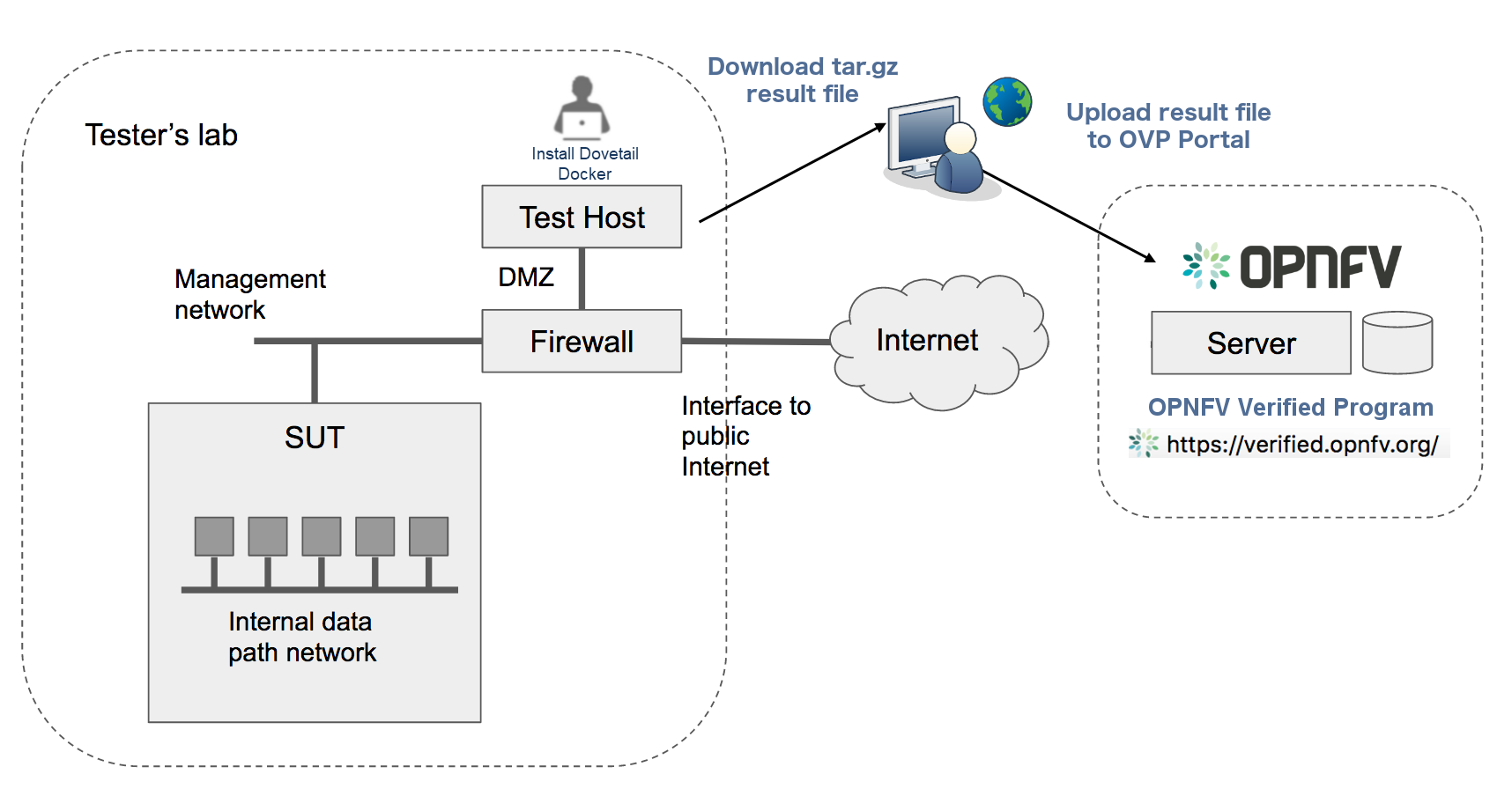

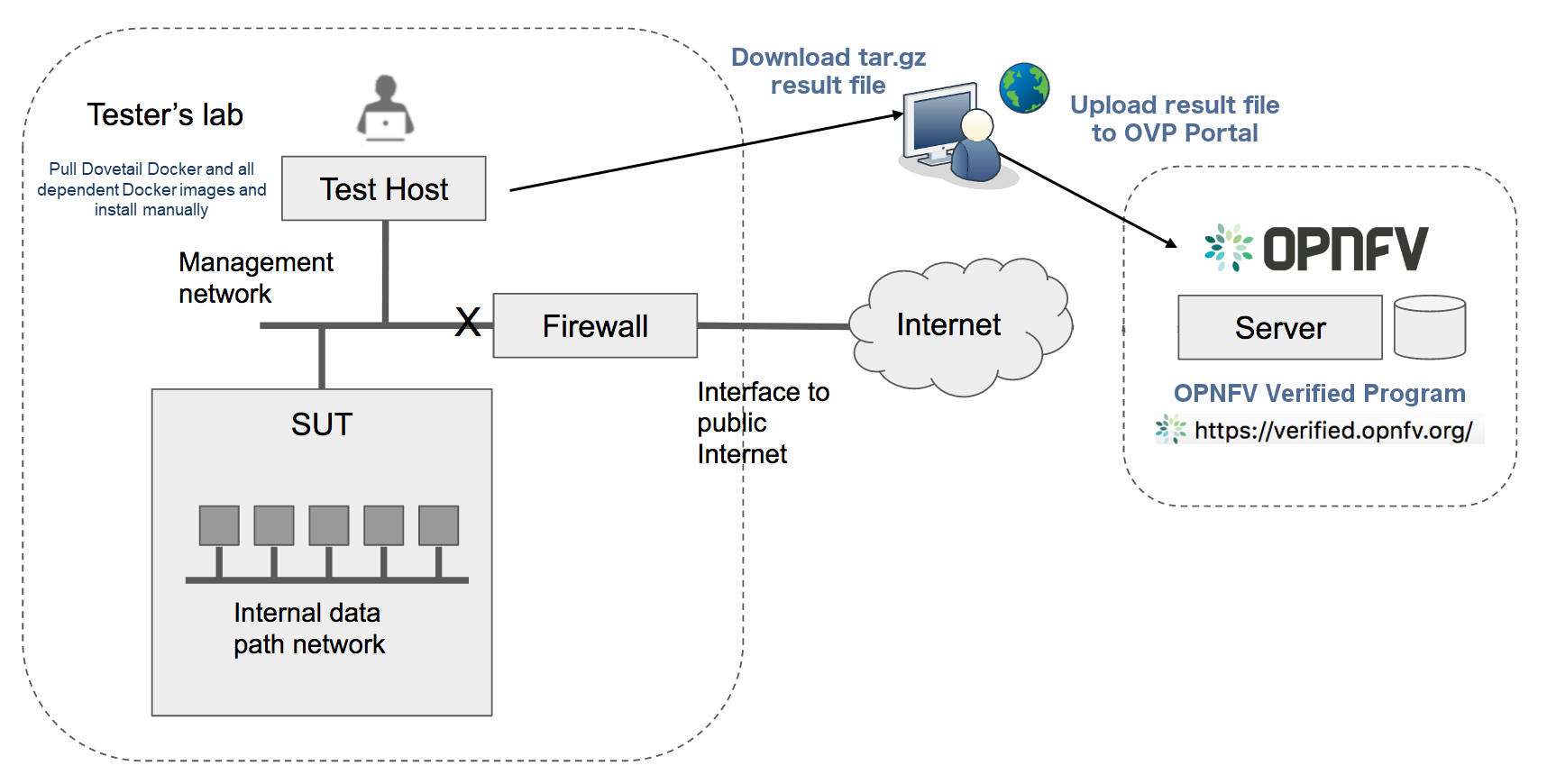

This document provides guidance for prospective participants on how to obtain ‘OPNFV Verified’ status. The OPNFV Verified Program (OVP) is administered by the OPNFV Compliance and Certification (C&C) committee.

For further information about the workflow and general inquiries about the program, please check out the OVP web portal, or contact the C&C committee by email address verified@opnfv.org. This email address should be used for all communication with the OVP.

A participant should start the process by submitting an online participation form. The participation form can be found on the OVP web portal or directly at OVP participation form and the following information must be provided:

Once the participation form information is received and in order, an email response will be sent to the primary contact with confirmation and information to proceed. The primary contact specified in the participation form will be entered into OVP web portal back-end by the program administrator and will be permitted to submit results for review on behalf of their organization.

There is no fee at this time for participation in the OVP.

The following documents guide testers to prepare the test environment and run tests:

A unique Test ID is generated by the Dovetail tool for each test run and can only be submitted to the OVP web portal once.

Users/testers other than the primary contact may use the OVP web portal as a resource to upload, evaluate and share results in a private manner. Testers can upload the test results to the OVP web portal. By default, the results are visible only to the tester who uploaded the data.

Testers can self-review the test results through the portal until they are ready to ask for OVP review. They may also add new test results as needed.

Once the tester is satisfied with the test result, the primary contact grants access to the test result for OVP review using a ‘submit for review’ operation via the portal. The test result is identified by the unique Test ID and becomes visible to a review group comprised of OPNFV community members.

When a test result is made visible to the reviewers, the program administrator will ask for volunteers from the review group using the verified@opnfv.org email and CC the primary contact email that a review request has been made. The program administrator will supply the Test ID and owner field (primary contact user ID) to the reviewers to identify the results.

Upon receiving the email request from the program administrator, the review group conducts a peer based review of the test result using reviewer guidelines published per OVP release. Persons employed by the same organization that submitted the test results or by affiliated organizations will not be part of the reviewers.

The primary contact may be asked via email for any missing information or clarification of the test results. The reviewers will make a determination and recommend compliance or non-compliance to the C&C Committee. A positive review requires a minimum of two approvals from two distinct organizations without any negative reviews. The program administrator sends an email to OVP/C&C emails announcing a positive review. A one week limit is given for issues to be raised. If no issue is raised, the C&C Committee approves the result and the program administrator sends an email to OVP/C&C emails stating the result is approved.
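The review rule described above (at least two approvals from two distinct organizations, no negative reviews, and no reviewers from the submitting or affiliated organizations) can be expressed as a short check. This is only a sketch of the stated rule: the `Review` type, the field names and the way ineligible reviewers are filtered out are hypothetical, not part of the OVP portal.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Review:
    reviewer: str
    organization: str
    approved: bool


def is_positive_review(reviews, excluded_orgs):
    """Apply the two-approvals, two-organizations, no-negatives rule.

    `excluded_orgs` holds the submitting organization and its affiliates,
    whose members may not act as reviewers.
    """
    eligible = [r for r in reviews if r.organization not in excluded_orgs]
    if any(not r.approved for r in eligible):
        return False
    approving_orgs = {r.organization for r in eligible if r.approved}
    return len(approving_orgs) >= 2


reviews = [Review("alice", "OrgA", True), Review("bob", "OrgB", True)]
print(is_positive_review(reviews, excluded_orgs={"OrgS"}))
```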

Normally, the outcome of the review should be communicated to the primary contact within 10 business days after all required information is in order.

If a test result is denied, an appeal can be made to the C&C Committee for arbitration.

If an application is approved, further information will be communicated to the primary contact on the guidelines of using OVP Program Marks (including OVP logo) and the status of compliance for promotional purposes.

This addendum provides a high-level description of the testing scope and pass/fail criteria used in the OPNFV Verified Program (OVP) for the 2018.09 release. This information is intended as an overview for OVP testers and for the Dovetail Project to help guide test-tool and test-case development for the OVP 2018.09 release. The Dovetail project is responsible for documenting test-case specifications as well as implementing the OVP tool-chain through collaboration with the OPNFV testing community. OVP testing focuses on establishing the ability of the System Under Test (SUT) to perform NFVI and VIM operations and support Service Provider oriented features that ensure manageable, resilient and secure networks.

OPNFV Compliance indicates adherence of an NFV platform to behaviors defined through specific platform capabilities, allowing to prepare, instantiate, operate and remove VNFs running on the NFVI. OVP 2018.09 compliance evaluates the ability of a platform to support Service Provider network capabilities and workloads that are supported in the OPNFV platform as of this release. Compliance test cases are designated as compulsory or optional based on the maturity of OPNFV capabilities as well as industry expectations. Compulsory test cases may for example include NFVI management capabilities whereas tests for certain high-availability features may be deemed as optional.

Test coverage and pass/fail criteria are designed to ensure an acceptable level of compliance but not be so restrictive as to disqualify variations in platform implementations, capabilities and features.

Assumptions about the System Under Test (SUT) include ...

The OVP Governance Guidelines, as approved by the Board of Directors, outline the key objectives of the OVP as follows:

The guidelines further direct the scope to be constrained to “features, capabilities, components, and interfaces included in an OPNFV release that are generally available in the industry (e.g., through adoption by an upstream community)”, and that compliance verification is evaluated using “functional tests that focus on defined interfaces and/or behaviors without regard to the implementation of the underlying system under test”.

OPNFV provides a broad range of capabilities, including the reference platform itself as well as tools-chains and methodologies for building infrastructures, and deploying and testing the platform. Not all these aspects are in scope for OVP and not all functions and components are tested in the initial versions of OVP. For example, the deployment tools for the SUT and CI/CD toolchain are currently out of scope. Similarly, performance benchmarking related testing is also out of scope or for further study. Newer functional areas such as MANO (outside of APIs in the NFVI and VIM) are still developing and are for future considerations.

In order to meet the above objectives for OVP, we aim to follow a general approach by first identifying the overall requirements for all stake-holders, then analyzing what OPNFV and the upstream communities can effectively test and verify presently to derive an initial working scope for OVP, and to recommend what the community should strive to achieve in future releases.

The overall requirements for OVP can be categorized by the basic cloud capabilities representing common operations needed by basic VNFs, and additional requirements for VNFs that go beyond the common cloud capabilities including functional extensions, operational capabilities and additional carrier grade requirements.

For the basic NFV requirements, we will analyze the required test cases, leverage or improve upon existing test cases in OPNFV projects and upstream projects whenever we can, and bridge the gaps when we must, to meet these basic requirements.

We are not yet ready to include compliance requirements for capabilities such as hardware portability, carrier grade performance, fault management and other operational features, security, MANO and VNF verification. These areas are being studied for consideration in future OVP releases.

In some areas, we will start with a limited level of verification initially, constrained by what community resources are able to support at this time, but still serve a basic need that is not being fulfilled elsewhere. In these areas, we bring significant value to the community we serve by starting a new area of verification, breaking new ground and expanding it in the future.

In other areas, the functions being verified have yet to reach wide adoption but are seen as important requirements in NFV, or features are only needed for specific NFV use cases but an industry consensus about the APIs and behaviors is still deemed beneficial. In such cases, we plan to incorporate the test areas as optional. An optional test area will not have to be run or passed in order to achieve compliance. Optional tests provide an opportunity for vendors to demonstrate compliance with specific OPNFV features beyond the mandatory test scope.

In order to define the scope of the 2018.09 release of the compliance and verification program, this section analyzes NFV-focused platform capabilities with respect to the high-level objectives and the general approach outlined in the previous section. The analysis determines which capabilities are suitable for inclusion in this release of the OVP and which capabilities are to be addressed in future releases.

The intent of these tests is to verify that the SUT has the required capabilities that a basic VNF needs, and these capabilities are implemented in a way that enables this basic VNF to run on any OPNFV compliant deployment.

A basic VNF can be thought of as a single virtual machine that is networked and can perform the simplest network functions, for example, a simple forwarding gateway, or a set of such virtual machines connected only by simple virtual network services. Running such basic VNF leads to a set of common requirements, including:

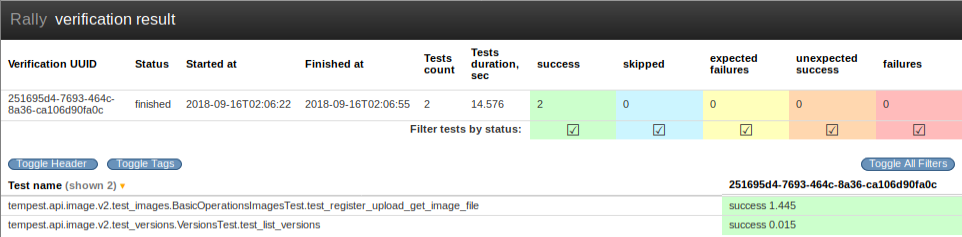

OPNFV mainly supports OpenStack as the VIM up to the 2018.09 release. The VNFs used in the OVP program, and features in scope for the program which are considered to be basic to all VNFs, require commercial OpenStack distributions to support a common basic level of cloud capabilities, and to be compliant with a common specification for these capabilities. This requirement significantly overlaps with the goals of the OpenStack community’s Interop working group, but they are not identical. The OVP runs the OpenStack Refstack-Compute test cases to verify compliance with the basic common API requirements of cloud management functions and VNF (as a VM) management for OPNFV. Additional NFV specific requirements are added in network data path validation, packet filtering by security group rules and port security, life cycle runtime events of virtual networks, multiple networks in a topology, validation of VNF’s functional state after common life-cycle events including reboot, pause, suspend, stop/start and cold migration. In addition, the basic requirement also verifies that the SUT can allocate VNF resources based on simple anti-affinity rules.

The combined test cases help to ensure that these basic operations are always supported by a compliant platform and they adhere to a common standard to enable portability across OPNFV compliant platforms.

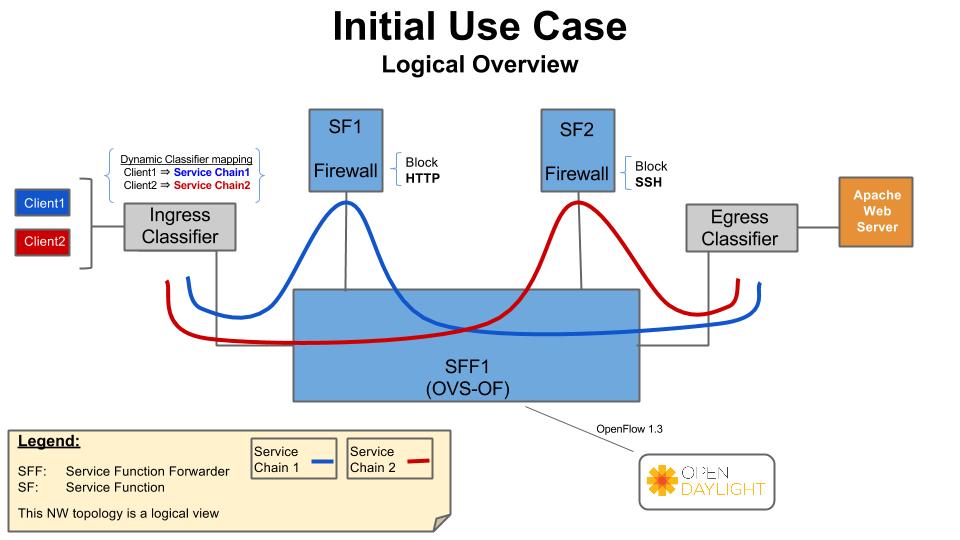

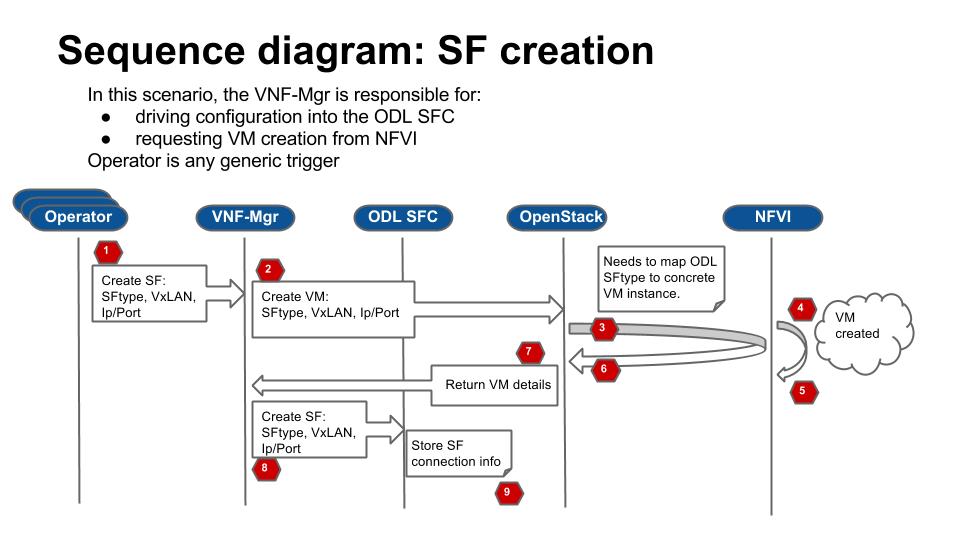

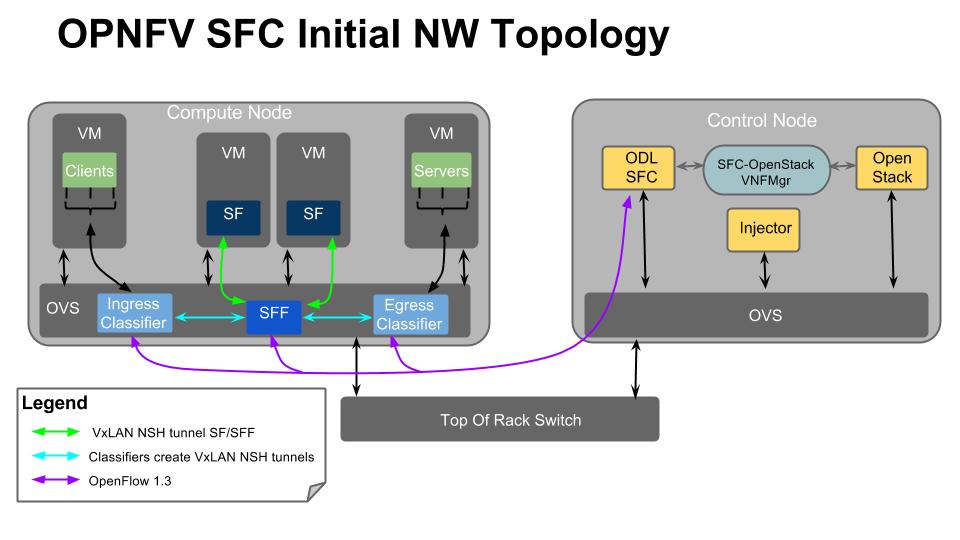

NFV has functional requirements beyond the basic common cloud capabilities, especially in the networking area. Examples like BGPVPN, IPv6, SFC may be considered additional NFV requirements beyond general purpose cloud computing. These feature requirements expand beyond common OpenStack (or other VIM) requirements. OPNFV OVP will incorporate test cases to verify compliance in these areas as they become mature. Because these extensions may impose new API demands, maturity and industry adoption are prerequisites for making them a mandatory requirement for OPNFV compliance. At the time of the 2018.09 release, we have promoted tests of the OpenStack IPv6 API from optional to mandatory while keeping BGPVPN as an optional test area. Passing optional tests will not be required to pass OPNFV compliance verification.
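The mandatory/optional distinction boils down to a simple rule: only mandatory areas count toward the verdict. The sketch below illustrates it with the two areas named above, IPv6 as mandatory and BGPVPN as optional. The area identifiers and the result layout are hypothetical and do not reflect Dovetail's actual result format.

```python
def is_compliant(results, mandatory_areas):
    """Return True when every mandatory test area passed.

    `results` maps a test area name to True (passed) or False (failed);
    areas that were not run are treated as not passed.
    """
    return all(results.get(area, False) for area in mandatory_areas)


mandatory = {"ipv6"}  # illustrative subset of the mandatory scope
results = {"ipv6": True, "bgpvpn": False}  # the optional area failed
print(is_compliant(results, mandatory))
```

A failed or skipped optional area such as BGPVPN leaves the outcome unchanged, while a missing mandatory result counts as a failure.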

BGPVPNs are relevant due to the wide adoption of MPLS/BGP based VPNs in wide area networks, which makes it necessary for data centers hosting VNFs to be able to seamlessly interconnect with such networks. SFC is also an important NFV requirement, however its implementation has not yet been accepted or adopted in the upstream at the time of the 2018.09 release.

High availability is a common carrier grade requirement. Availability of a platform involves many aspects of the SUT, for example hardware or lower layer system failures or system overloads, and is also highly dependent on configurations. The current OPNFV high availability verification focuses on OpenStack control service failures and resource overloads, and verifies service continuity when the system encounters such failures or resource overloads, and also verifies the system heals after a failure episode within a reasonable time window. These service HA capabilities are commonly adopted in the industry and should be a mandatory requirement.

The current HA test cases cover the basic failure and resource overload conditions for a cloud platform’s service availability, including all of the basic cloud capability services and basic compute and storage loads, so they are a meaningful first step for OVP. We expect additional high availability scenarios to be added in future releases.

Resiliency testing involves stressing the SUT and verifying its ability to absorb stress conditions and still provide an acceptable level of service. Resiliency is an important requirement for end-users.

The 2018.09 release of OVP includes a load test which spins up a number of VM pairs in parallel to assert that the system under test can process the workload spike in a stable and deterministic fashion.

Security is among the top priorities as a carrier grade requirement by the end-users. Some of the basic common functions, including virtual network isolation, security groups, port security and role based access control are already covered as part of the basic cloud capabilities that are verified in OVP. These test cases however do not yet cover the basic required security capabilities expected of an end-user deployment. It is an area that we should address in the near future, to define a common set of requirements and develop test cases for verifying those requirements.

The 2018.09 release includes new test cases which verify that the role-based access control (RBAC) functionality of the VIM is behaving as expected.

Another common requirement is security vulnerability scanning. While the OPNFV security project integrated tools for security vulnerability scanning, this has not been fully analyzed or exercised in the 2018.09 release. This area needs further work to identify the level of security required for the purposes of OPNFV before it can be integrated into the OVP. End-user input on specific security requirements is needed.

Service assurance (SA) is a broad area of concern for reliability of the NFVI/VIM and VNFs, and depends upon multiple subsystems of an NFV platform for essential information and control mechanisms. These subsystems include telemetry, fault management (e.g. alarms), performance management, audits, and control mechanisms such as security and configuration policies.

The current 2018.09 release implements some enabling capabilities in NFVI/VIM such as telemetry, policy, and fault management. However, the specification of expected system components, behavior and the test cases to verify them have not yet been adequately developed. We will therefore not be testing this area at this time but defer to future study.

Use-case test cases exercise multiple functional capabilities of a platform in order to realize a larger end-to-end scenario. Such end-to-end use cases do not necessarily add new API requirements to the SUT per se, but exercise aspects of the SUT’s functional capabilities in more complex ways. For instance, they allow for verifying the complex interactions among multiple VNFs and between VNFs and the cloud platform in a more realistic fashion. End-users consider use-case-level testing a significant tool in verifying OPNFV compliance because it validates design patterns and support for the types of NFVI features that users care about.

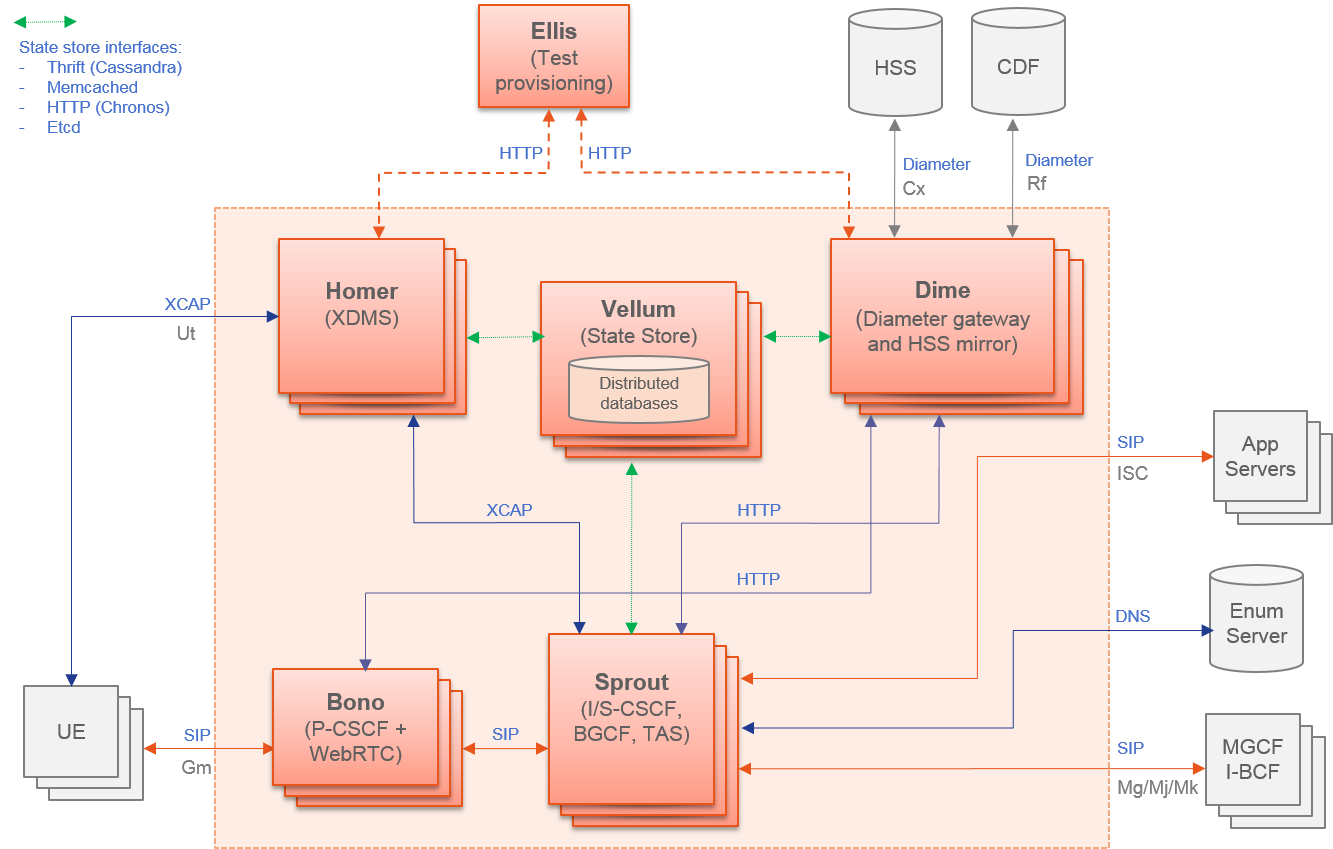

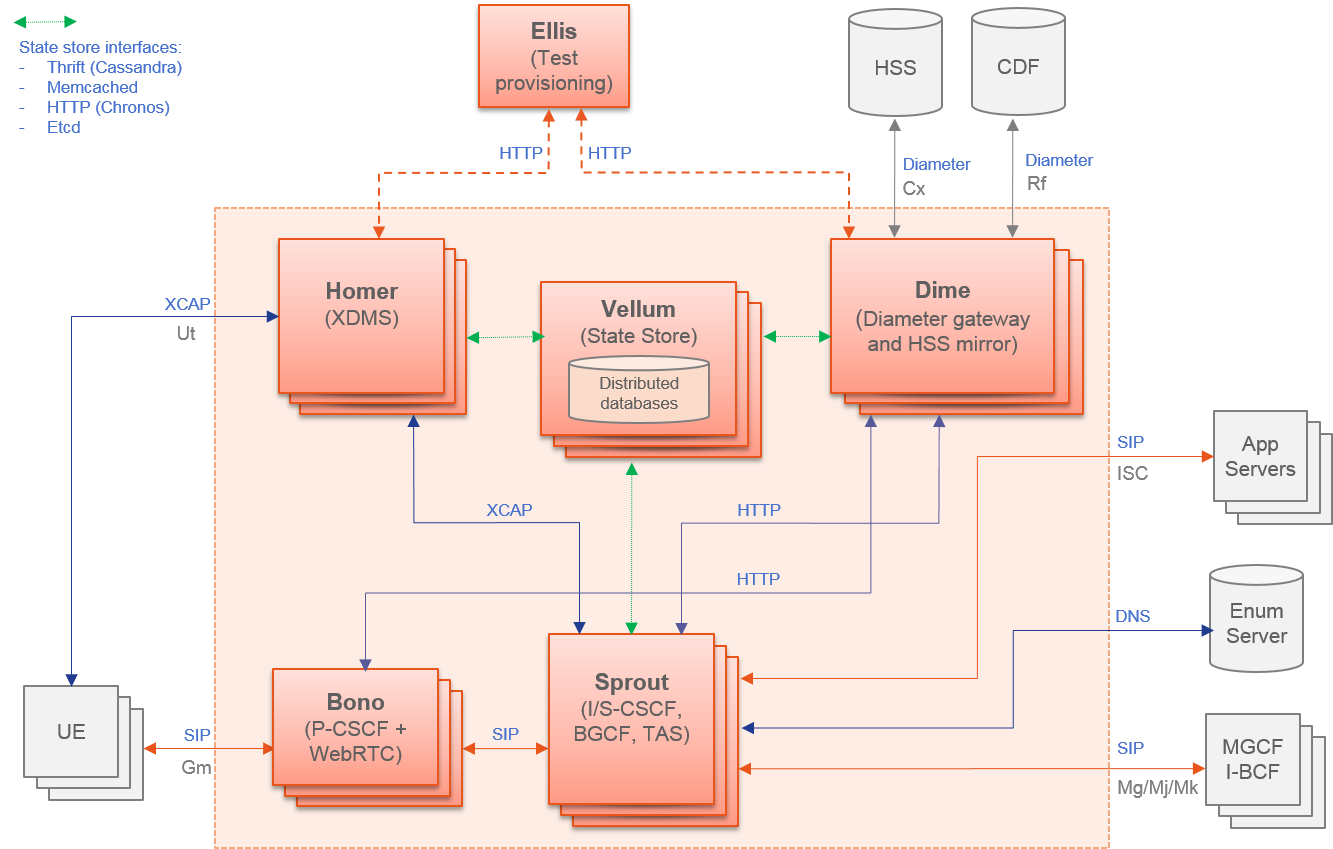

Many projects in OPNFV are developing use cases and sample VNFs. The 2018.09 release of OVP features two such use-case tests, which spawn and verify a vIMS and a vEPC, respectively.

In addition to the capabilities analyzed above, there are further system aspects which are of importance for the OVP. These comprise operational and management aspects such as platform in-place upgrades and platform operational insights such as telemetry and logging. Further aspects include API backward compatibility / micro-versioning, workload migration, multi-site federation and interoperability with workload automation platforms, e.g. ONAP. Finally, efficiency aspects such as the hardware and energy footprint of the platform are worth considering in the OVP.

OPNFV is addressing these items at different levels of detail in different projects. However, the contributions developed in these projects are not yet considered widely enough available in commercial systems to be included in the OVP. Hence, these aspects are left for inclusion in future releases of the OVP.

Summarizing the results of the analysis above, the scope of the 2018.09 release of OVP is as follows:

* The OPNFV OVP utilizes the same set of test cases as the OpenStack interoperability program OpenStack Powered Compute. Passing the OPNFV OVP does not imply that the SUT is certified according to the OpenStack Powered Compute program. OpenStack Powered Compute is a trademark of the OpenStack foundation and the corresponding certification label can only be awarded by the OpenStack foundation.

Note: The SUT is limited to NFVI and VIM functions. While testing MANO component capabilities is out of scope, certain APIs exposed towards MANO are used by the current OPNFV compliance testing suite. MANO and other operational elements may be part of the test infrastructure; for example used for workload deployment and provisioning.

Based on the previous analysis, the following items are outside the scope of the 2018.09 release of OVP but are being considered for inclusion in future releases:

This section provides guidance on compliance criteria for each test area. The criteria described here are high-level; detailed pass/fail metrics are documented in the Dovetail test specifications.

Exceptions to this rule may be legitimate, e.g. due to imperfect test tools or reasonable circumstances that we cannot foresee. These exceptions must be documented and accepted by the reviewers.

Applicants who choose to run the optional test cases can include the results of the optional test cases to highlight the additional compliance.

Vendors of commercial NFVI products may have extended the Nova API to support proprietary add-on features. These additions can cause Nova Tempest API tests to fail due to unexpected data in API responses. In order to resolve this transparently in the context of OVP, a temporary exemption process has been created. More information on the exemption can be found in section Disabling Strict API Validation in Tempest.

This document provides detailed guidance for reviewers on how to handle the result review process.

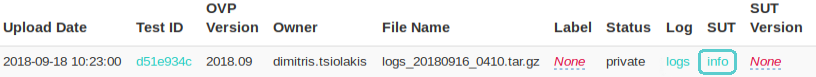

The OPNFV Verified Program (OVP) allows users to upload test results to the OVP portal and request a review from the OVP community. After the user submits the test results for review, their Status changes from ‘private’ to ‘review’ (as shown in Figure 2).

The OVP administrator will ask for review volunteers using the verified@opnfv.org email alias. The administrator will identify the incoming results for review by their Test ID and Owner values.

Volunteers who accept the review request can access the test results by logging in to the OVP portal and then clicking the My Results tab in the top-level navigation bar.

Figure 1

The corresponding OVP portal result will have a status of ‘review’.

Figure 2

Reviewers must follow the checklist below to ensure review consistency for the OPNFV Verified Program (OVP) 2018.09 (Fraser) release at a minimum.

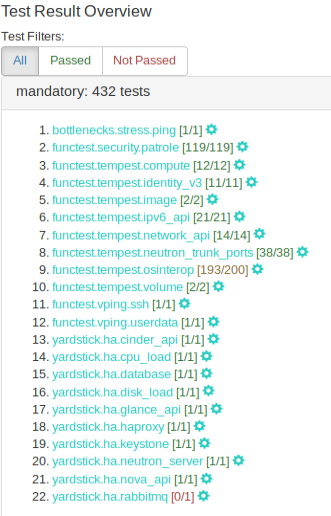

Test results can be displayed by clicking on the hyperlink under the ‘Test ID’ column. The user should validate that results for all mandatory test areas are included in the overall test suite. The required mandatory test cases are:

Note that the ‘Test ID’ column in this view condenses the UUID used for ‘Test ID’ to eight characters even though the ‘Test ID’ is a longer UUID in the back-end.

Figure 3

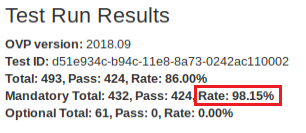

All mandatory test cases have to run successfully. The ‘Test Run Results’ diagram below is one way to check this. In the example it shows that 98.15% of the mandatory test cases have passed, which is not sufficient: this value must be 100%.

Figure 4
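The 100% rule can be expressed as a small check. The sketch below is illustrative only and is not part of the OVP portal; the function names and the counts 53 of 54 are hypothetical, chosen because they happen to round to the 98.15% used as the example value.

```python
def mandatory_pass_rate(passed: int, total: int) -> float:
    """Return the percentage of mandatory test cases that passed (two decimals)."""
    if total <= 0:
        raise ValueError("total must be a positive number of test cases")
    return round(100.0 * passed / total, 2)


def meets_mandatory_criterion(passed: int, total: int) -> bool:
    """Compliance requires every mandatory test case to pass, i.e. exactly 100%."""
    return total > 0 and passed == total


# Hypothetical counts: one failing test case out of 54 is already disqualifying.
print(mandatory_pass_rate(53, 54), meets_mandatory_criterion(53, 54))
```

Comparing the integer counts rather than the rounded percentage avoids accepting a result that merely rounds up to 100.00.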

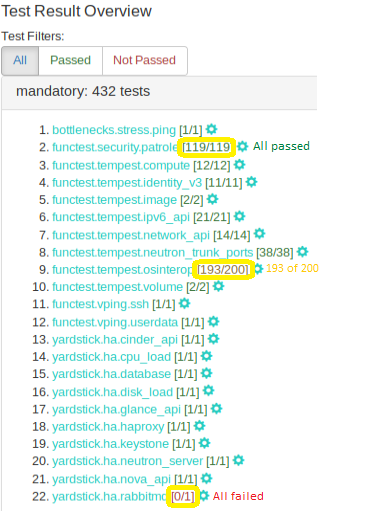

Failed test cases can also be easily identified by the color of the pass/total number:

Figure 5

Each log file of the mandatory test cases has to be verified for content.

Log files can be displayed by clicking on the setup icon to the right of the results, as shown in the figure below.

Figure 6

Note that all log files can be found in the results/ directory, as shown in the following table.

| Mandatory Test Case | Location |
| --- | --- |
| bottlenecks | results/stress_logs/ |
| functest.vping | results/vping_logs/ |
| functest.tempest | results/tempest_logs/ |
| functest.security | results/security_logs/ |
| yardstick | results/ha_logs/ |
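A reviewer who has a local copy of a results bundle could check that each log location from the table is present before reading the logs. The following is a minimal sketch under that assumption; the function name and the idea of a local bundle root are hypothetical, and only the directory names come from the table.

```python
from pathlib import Path

# Mandatory test case -> log directory, as listed in the table.
LOG_LOCATIONS = {
    "bottlenecks": "results/stress_logs/",
    "functest.vping": "results/vping_logs/",
    "functest.tempest": "results/tempest_logs/",
    "functest.security": "results/security_logs/",
    "yardstick": "results/ha_logs/",
}


def missing_log_dirs(bundle_root):
    """Return the test cases whose log directory is absent or empty."""
    root = Path(bundle_root)
    missing = []
    for test_case, rel_dir in LOG_LOCATIONS.items():
        log_dir = root / rel_dir
        # A directory with no entries gives the reviewer nothing to verify.
        if not log_dir.is_dir() or not any(log_dir.iterdir()):
            missing.append(test_case)
    return missing
```

An empty return value only means the directories contain files; the content of each log still has to be reviewed as described in this checklist.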

The bottlenecks log must contain the ‘SUCCESS’ result, as shown in the following example:

2018-08-22 14:11:21,815 [INFO] yardstick.benchmark.core.task task.py:127 Testcase: “ping_bottlenecks” SUCCESS!!!
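Searching for this marker can be automated. The sketch below assumes the log line format shown in the example above; the pattern and function name are illustrative and are not taken from the Bottlenecks or Yardstick code. Both straight and typographic quotes are accepted, since rendered documentation may have converted them.

```python
import re

# Matches lines such as: ... Testcase: "ping_bottlenecks" SUCCESS!!!
SUCCESS_RE = re.compile(r'Testcase:\s*["“]([^"”]+)["”]\s+SUCCESS')


def succeeded_testcases(log_text):
    """Return the names of all test cases reported as SUCCESS in the log text."""
    return SUCCESS_RE.findall(log_text)


sample = ('2018-08-22 14:11:21,815 [INFO] yardstick.benchmark.core.task '
          'task.py:127 Testcase: "ping_bottlenecks" SUCCESS!!!')
print(succeeded_testcases(sample))
```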

The Functest logs open an HTML page that lists all test cases, as shown in Figure 7. All test cases must have run successfully.

Figure 7

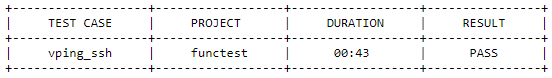

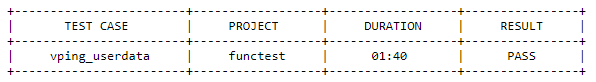

For the vping test area log file (functest.log), the two entries displayed in the tables below must be present in the log file.

functest.vping_userdata

Figure 8

functest.vping_ssh

Figure 9

The yardstick log must contain the ‘SUCCESS’ result for each of the test-cases within this test area. This can be verified by searching the log for the keyword ‘SUCCESS’.

Examples of a FAILED and a SUCCESS test case are listed below:

2018-08-28 10:25:09,946 [ERROR] yardstick.benchmark.scenarios.availability.monitor.monitor_multi monitor_multi.py:78 SLA failure: 14.015082 > 5.000000

2018-08-28 10:23:41,907 [INFO] yardstick.benchmark.core.task task.py:127 Testcase: “opnfv_yardstick_tc052” SUCCESS!!!
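Besides searching for ‘SUCCESS’, a reviewer may want to list the SLA failures in a log. The sketch below extracts the two numbers from lines of the form shown in the FAILED example. Reading them as an observed value and an allowed limit is an assumption, as are the names `SlaFailure` and `sla_failures`.

```python
import re
from typing import List, NamedTuple


class SlaFailure(NamedTuple):
    observed: float  # assumed: the value measured during the test
    limit: float     # assumed: the maximum the SLA allows


# '.' does not match newlines, so each match stays within one log line.
SLA_RE = re.compile(r"\[ERROR\].*SLA failure:\s*([0-9.]+)\s*>\s*([0-9.]+)")


def sla_failures(log_text: str) -> List[SlaFailure]:
    """Return one entry per 'SLA failure' error line found in the log text."""
    return [SlaFailure(float(m.group(1)), float(m.group(2)))
            for m in SLA_RE.finditer(log_text)]
```

A log that yields an empty list here still needs the ‘SUCCESS’ entry for every test case in the test area.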

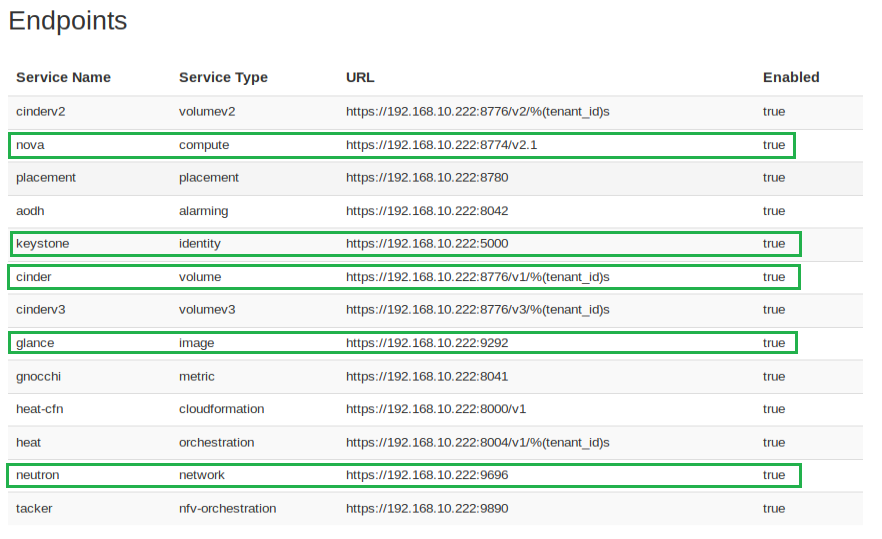

SUT information must be present in the results to validate that all required endpoint services and at least two controllers were present during test execution. For the results shown below, click the ‘info’ hyperlink in the SUT column to navigate to the SUT information page.

Figure 10

In the ‘Endpoints’ listing shown below for the SUT VIM component, ensure that services are present for identity, compute, image, volume and network at a minimum by inspecting the ‘Service Type’ column.

Figure 11

Inspect the ‘Hosts’ listing found below the Endpoints section of the SUT info page and ensure at least two hosts are present, as two controllers are required for the mandatory HA test cases.
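The two SUT checks (required service types and a minimum of two hosts) can be sketched as follows. The input shapes are assumptions made for illustration: endpoints as dictionaries with a hypothetical `service_type` key and hosts as a plain list of names. The OVP portal does not necessarily expose the data in this form, and a real deployment may report versioned service type names that need to be mapped first.

```python
REQUIRED_SERVICE_TYPES = {"identity", "compute", "image", "volume", "network"}
MIN_HOSTS = 2  # two controllers are needed for the mandatory HA test cases


def review_sut_info(endpoints, hosts):
    """Return a list of problems found; an empty list means both checks pass."""
    problems = []
    present = {endpoint.get("service_type") for endpoint in endpoints}
    missing = sorted(REQUIRED_SERVICE_TYPES - present)
    if missing:
        problems.append("missing service types: " + ", ".join(missing))
    if len(hosts) < MIN_HOSTS:
        problems.append(
            f"only {len(hosts)} host(s) listed, need at least {MIN_HOSTS}")
    return problems
```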

This document provides a general guide to the hardware system prerequisites and expectations for running OPNFV OVP testing. For a detailed guide to preparing software tools and configurations and conducting the tests, please refer to the User Guide :ref:dovetail-testing_user_guide.

The OVP test tools expect that the hardware of the System Under Test (SUT) is compliant with the Pharos specification.

The Pharos specification, developed by the OPNFV community, is at this time a general guideline rather than a set of specific hard requirements. To help OVP testers, we summarize the main aspects of hardware to consider in preparation for OVP testing.

As described in the OVP Testing User Guide, the hardware systems involved in OVP testing include a Test Node, a System Under Test (SUT), and network connectivity between them.

The Test Node can be a bare metal machine or a virtual machine that can support a Docker container environment. If it is a bare metal machine, it needs to be x86-based at this time. Detailed information on how to configure and prepare the Test Node can be found in the User Guide.

The System Under Test (SUT) system is expected to consist of a set of general purpose servers, storage devices or systems, and networking infrastructure connecting them together. The set of servers are expected to be of the same architecture, either x86-64 or ARM-64. Mixing different architectures in the same SUT is not supported.

A minimum of 5 servers is expected: 3 configured as controllers and 2 or more configured as compute resources. However, this is not a hard requirement at this phase. The OVP 1.0 mandatory test cases only require one compute server. At least two compute servers are required to pass some of the optional test cases in the current OVP release. OVP control service high availability tests expect two or more control nodes to pass, depending on the HA mechanism implemented by the SUT.
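The server counts above can be summarized as a quick pre-flight check. This is only a sketch of the numbers stated in this section; the function and key names are hypothetical, and the HA result still depends on the HA mechanism implemented by the SUT.

```python
def topology_checks(controllers: int, computes: int) -> dict:
    """Map each expectation from this section to whether the SUT layout meets it."""
    return {
        # The mandatory test cases only require one compute server.
        "mandatory_compute": computes >= 1,
        # Some optional test cases need at least two compute servers.
        "optional_multi_compute": computes >= 2,
        # Control service HA tests expect two or more control nodes.
        "control_service_ha": controllers >= 2,
        # Expected (not strictly required) layout: 3 controllers, 2+ computes.
        "recommended_layout": controllers >= 3 and computes >= 2,
    }


print(topology_checks(controllers=2, computes=1))
```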

The SUT is also expected to include components for persistent storage. The OVP testing does not expect or impose significant storage size or performance requirements.

The SUT is expected to be connected with high performance networks. These networks are expected in the SUT:

Additional networks, such as Lights Out Management or storage networks, may be beneficial and may be found in the SUT, but they are not a requirement for OVP testing.

The OPNFV OVP provides a series of test areas aimed at evaluating the operation of an NFV system in accordance with carrier networking needs. Each test area contains a number of associated test cases which are described in detail in the associated test specification.

All tests in the OVP are required to fulfill a specific set of criteria so that the OVP can provide a fair assessment of the system under test. Test requirements are described in the :ref:dovetail-test_case_requirements document.

All test areas addressed in the OVP are covered in the following test specification documents.

The HA test area evaluates the ability of the System Under Test to support service continuity and recovery from component failures of the OpenStack controller services (“nova-api”, “neutron-server”, “keystone”, “glance-api”, “cinder-api”) and of the “load balancer” service.

The tests in this test area emulate component failures by killing the processes of the target services listed above, stressing the CPU load or blocking disk I/O on the selected controller node. They then check whether the impacted services are still available and whether the killed processes are recovered on the selected controller node within a given time interval.
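The "recovered within a given time interval" check can be pictured as a polling loop with a deadline. The sketch below is not the Yardstick implementation; `wait_for_recovery` and its parameters are hypothetical, and the probe passed in stands for whatever check decides that a killed process is running again. The clock and sleep functions are injectable so the logic can be exercised without real waiting.

```python
import time
from typing import Callable, Optional


def wait_for_recovery(
    is_recovered: Callable[[], bool],
    timeout_s: float,
    poll_interval_s: float = 1.0,
    clock: Callable[[], float] = time.monotonic,
    sleep: Callable[[float], None] = time.sleep,
) -> Optional[float]:
    """Poll is_recovered() until it returns True.

    Returns the elapsed seconds at recovery, or None if timeout_s passes first.
    """
    start = clock()
    while True:
        if is_recovered():
            return clock() - start
        if clock() - start >= timeout_s:
            return None
        sleep(poll_interval_s)
```

A test would inject the fault, call this function with its probe, and treat a `None` result as a failure of the recovery criterion.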